The latest (somewhat random) collection of recent essays and stories from around the web that have caught my eye and are worth plucking out to be re-read.

Massacre in Myanmar

Wa Lone, Kyaw Soe Oo, Simon Lewis & Antoni Slodkowski, Reuters, 8 February 2018

Bound together, the 10 Rohingya Muslim captives watched their Buddhist neighbors dig a shallow grave. Soon afterwards, on the morning of Sept. 2, all 10 lay dead. At least two were hacked to death by Buddhist villagers. The rest were shot by Myanmar troops, two of the gravediggers said.

‘One grave for 10 people,’ said Soe Chay, 55, a retired soldier from Inn Din’s Rakhine Buddhist community who said he helped dig the pit and saw the killings. The soldiers shot each man two or three times, he said. ‘When they were being buried, some were still making noises. Others were already dead.’

The killings in the coastal village of Inn Din marked another bloody episode in the ethnic violence sweeping northern Rakhine state, on Myanmar’s western fringe. Nearly 690,000 Rohingya Muslims have fled their villages and crossed the border into Bangladesh since August. None of Inn Din’s 6,000 Rohingya remained in the village as of October.

The Rohingya accuse the army of arson, rapes and killings aimed at rubbing them out of existence in this mainly Buddhist nation of 53 million. The United Nations has said the army may have committed genocide; the United States has called the action ethnic cleansing. Myanmar says its ‘clearance operation’ is a legitimate response to attacks by Rohingya insurgents.

Rohingya trace their presence in Rakhine back centuries. But most Burmese consider them to be unwanted immigrants from Bangladesh; the army refers to the Rohingya as ‘Bengalis.’ In recent years, sectarian tensions have risen and the government has confined more than 100,000 Rohingya in camps where they have limited access to food, medicine and education.

Reuters has pieced together what happened in Inn Din in the days leading up to the killing of the 10 Rohingya – eight men and two high school students in their late teens.

Until now, accounts of the violence against the Rohingya in Rakhine state have been provided only by its victims. The Reuters reconstruction draws for the first time on interviews with Buddhist villagers who confessed to torching Rohingya homes, burying bodies and killing Muslims.

This account also marks the first time soldiers and paramilitary police have been implicated by testimony from security personnel themselves. Members of the paramilitary police gave Reuters insider descriptions of the operation to drive out the Rohingya from Inn Din, confirming that the military played the lead role in the campaign.

The slain men’s families, now sheltering in Bangladesh refugee camps, identified the victims through photographs shown to them by Reuters. The dead men were fishermen, shopkeepers, the two teenage students and an Islamic teacher.

Read the full article in Reuters.

The new conspiracists

Russell Muirhead & Nancy Rosenblum, Dissent, Winter 2018

Conspiracism is not new, of course, but the conspiracism we see today does introduce something new—conspiracy without the theory. And it betrays a new destructive impulse: to delegitimate the government. Often the aim is merely to delegitimate an individual or a specific office. The charge that Obama conspired to fake his birth certificate only aimed at Obama’s legitimacy. Similarly, the charge that the National Oceanic and Atmospheric Administration publicized flawed data in order to make global warming look more menacing was designed only to demote the authority of climate scientists working for the government. But the effects of the new strategy cannot be contained to one person or entity. The ultimate effect of the new conspiracism will be to delegitimate democracy itself.

Conventional conspiracism makes sense of a disorderly and complicated world by insisting that powerful human beings can and do control events. In this way, it gives order and meaning to apparently random occurrences. And in making sense of things, conspiracism insists on proportionality. JFK’s assassination, this type of thinking goes, was not the doing of a lone gunman—as if one person acting alone could defy the entire U.S. government and change the course of history. Similarly, the brazen attacks on the World Trade Center and the Pentagon on 9/11 could not have been the work of fewer than two dozen men plotting in a remote corner of Afghanistan. So conspiracist explanations insist that the U.S. government must have been complicit in the strikes.

Conspiracism insists that the truth is not on the surface: things are not as they seem, and conspiracism is a sort of detective work. Once all the facts—especially facts ominously withheld by reliable sources and omitted from official reports—are scrupulously amassed and the plot uncovered, secret machinations make sense of seemingly disconnected events. What historian Bernard Bailyn observed of the conspiracism that flourished in the Revolutionary era remains characteristic of conspiracism today: ‘once assumed [the picture] could not be easily dispelled: denial only confirmed it, since what conspirators profess is not what they believe; the ostensible is not the real; and the real is deliberately malign.’

When conspiracists attribute intention where in fact there is only accident and coincidence, reject authoritative standards of evidence and falsifiability, and seal themselves off from any form of correction, their conspiracism can seem like a form of paranoia—a delusional insistence that one is the victim of a hostile world. This is not to say that all conspiracy theories are wrong; sometimes what conspiracists allege is really there. Yet warranted or not, conventional conspiracy theories offer both an explanation of the alleged danger and a guide to the actions necessary to save the nation or the world.

The new conspiracism we are seeing today, however, often dispenses with any explanations or evidence, and is unconcerned with uncovering a pattern or identifying the operators plotting in the shadows. Instead, it offers only innuendo and verbal gesture, as exemplified in President Trump’s phrase, ‘people are saying.’ Conspiracy without the theory can corrode confidence in government, but it cannot give meaning to events or guide constructive collective action.

Read the full article in Dissent.

Inside the ‘Muslim Factory’

Nedjib Sidi Moussa & Felix Baum, Brooklyn Rail, 7 February 2017

Felix Baum (Rail): In your book, you describe how in France categories such as ‘immigrant worker’ or ‘North African worker’ have more recently become increasingly replaced by the category ‘Muslim.’ What are the main driving forces of this process?

Nedjib Sidi Moussa: In this small book, which is not an academic work but a political essay, I look at the last ten to fifteen years in order to demonstrate some of the mechanisms of what I call ‘the Muslim factory.’ The main protagonists of this factory include the state and civil associations; they range from the far right to the radical left [gauche de la gauche]; there are those described as Muslims and those who stigmatize them. However, I mostly focus on the debates within the radical left, not because they are the most important protagonists in this story, but because that’s my political family. In theory, the radical left should be part of the solution and not part of the problem.

The Muslims produced by this social factory are not necessarily believers, they don’t necessarily practice Islam, go to the mosque, eat halal etc.—they are rather a kind of sub-nationality, similar to the Muslims in ex-Yugoslavia or those in Lebanon at the time of the French protectorate. They are seen as having intrinsic cultural references that set them apart from the majority population in France. Already in the 1990s, parts of the state showed an interest in creating some institution that would ‘represent’ the supposedly Muslim population, and in 2003 the Conseil français du culte musulman (CFCM) was created. Its role is to act as an interlocutor for the state, to ‘manage’ this group via religion. It is worth noting that in French Algeria the Muslim religion was administered by the colonial power, in total contradiction to the principle of laicism, which applied only to metropolitan France, not to the colonies. The CFCM is a new communitarian institution of representation among others (such as the Conseil représentatif des associations noires de France, CRAN) and involves a power struggle among various Muslim federations seeking to control as many mosques as possible in order to be accepted as legitimate interlocutors by the French state.

Rail: From what I’ve read it seems that many people of Maghrebin origin in France are not too religious in fact. How do they relate to this process of subsumption under the category ‘Muslim’?

NSM: In France, to speak of ‘Muslims’ is also a way of talking about immigration from the Maghreb, most importantly Algeria, without saying so. It is undeniable that behind this category is hidden a vast diversity. There are those who have become more and more religious; they demonstrate their religiosity and are hence the most visible, like the Salafists. But what is most visible is not necessarily what is most pertinent or threatening. Among people of Maghrebin origin, there are also atheists, and not all of them come from atheist families—sometimes they have detached themselves from religious traditions and then they face a double exclusion: On the one hand, it is extremely difficult for them to talk to their families about their atheism, their total lack of faith and religious practice, and on the other hand, in French society at large they are associated with a religion which is no longer theirs. And then there are many who in fact believe and practice their religion, but in very different ways—they drink alcohol from time to time, for example, or don’t practice Ramadan; they maybe pray, but not in the mosque. They reject any kind of religious authority and hence the CFCM. They don’t want to be part of this power struggle over representation.

Read the full article in Brooklyn Rail.

The First Amendment transcends the law. It gives us strength in dark times.

Jamie Kalven, The Intercept, 7 February 2018

On December 13, I entered the Cook County Courthouse in Chicago prepared to be taken into custody and jailed for contempt. At issue was a subpoena demanding I answer questions about the whistleblower whose tip prompted me to investigate the fatal 2014 police shooting of 17-year-old Laquan McDonald.

Were it not for that individual, who had disclosed the existence of dashcam video of the incident and provided a lead that enabled me to locate a civilian witness, we would not know the name Laquan McDonald. Once I had secured the autopsy report that revealed the boy had been shot 16 times, I published an article in Slate challenging the police account of the shooting. Months later, when the video was finally released, a cascade of events ensued: The superintendent of police was fired, as was the head of the agency that investigates police shootings; the state’s attorney was voted out of office; the United States Department of Justice initiated an investigation of the Chicago Police Department; and the officer who shot McDonald, Jason Van Dyke, was charged with first-degree murder.

It was in the context of the murder case that I was subpoenaed. The judge, Vincent Gaughan, permitted Van Dyke’s lawyers to seek to compel me to testify on the basis of their claim, for which they offered no evidence, that the source had given me documents protected under the Garrity rule, which protects public employees from being compelled to incriminate themselves during internal investigations conducted by their employers.

It was only during a hearing in open court on December 6 that the press and public became aware of the ‘fake news’ thesis Dan Herbert, Van Dyke’s lead attorney, was advancing. He claimed I was not a reporter, but an activist — hence unprotected by reporter’s privilege — and had been engaged from the start in an anti-police campaign that did grievous harm to his client’s constitutional right to a fair trial. In the course of that campaign, he alleged, I had colluded with FBI agents who allowed me to sit in on interviews with two civilian witnesses to the shooting, and I had also conspired with the lawyers for McDonald’s family. Central to Herbert’s argument — if something so hallucinatory can be said to have a center — was the claim that I had drawn on knowledge gained from Garrity-protected statements to shape the accounts of civilian witnesses in a coordinated strategy designed to convict Van Dyke.

Herbert’s thesis about my reporting may prove to have been a dress rehearsal for the trial. It reflects the general stance of the Fraternal Order of Police (which is widely believed to be paying for Van Dyke’s defense) with respect to any and all allegations of police misconduct. The FOP asserts in the strongest possible terms that the police are victims of a conspiracy in which the civil rights bar is aided and abetted by the media. In any particular case of alleged abuse, the accused officer is thus the real victim.

Herbert closed his argument by invoking Brown v. Mississippi, a 1936 case in which the Supreme Court set aside the convictions of three black sharecroppers for the murder of a white farmer on the grounds that their confessions had been coerced by police torture. The three men had been repeatedly and mercilessly whipped; and one had been hanged from a tree limb in a mock lynching that left rope scars on his neck. Van Dyke, Herbert argued, had suffered comparable harms from my reporting. ‘It’s the same thing legally,’ he said, ‘as those sharecroppers that were tortured.’

It was a stunning moment. The Laquan McDonald case invites comparison to the lynching of Emmett Till, another child of Chicago, in Mississippi in 1955. The dashcam video can be seen as the counterpart to the fateful decision of Till’s mother to have an open casket at her son’s funeral: a window through which a mutilated black body illuminates the violence that enforces structures of exclusion and inequality. Whatever the outcome of the murder trial, that is the light in which many Chicagoans are struggling to come to terms with the incident. So it was startling, even in the adversarial setting of the courtroom, to hear the assertion that it is Van Dyke who is in danger of being lynched.

Read the full article in the Intercept.

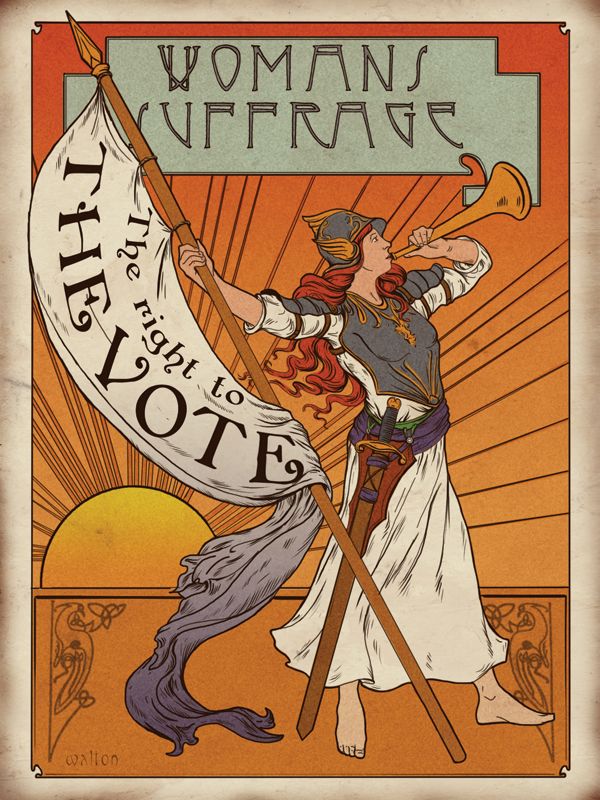

Sanitising the Suffragettes

Fern Riddell, History Today, 27 January 2018

Historians have a unique opportunity in 2018 – the centenary of British women gaining the right to vote – to re-examine a pervasive silence at the heart of the story: that of the nationwide bombing and arson campaign carried out by the Pankhursts’ Women’s Social and Political Union (WSPU).

Previous attempts to examine this phenomenon have been met with criticism and personal attacks, as traditional idealists refused to allow an in-depth critique of the leadership of the suffragettes. But the evidence is clear. Between 1912 and 1914, hundreds of bombs were left on trains, in theatres, post offices, churches, even outside the Bank of England; while arson attacks on timber yards, railway stations and private houses inflicted an untold amount of damage. Yet the lives of the women who did this have been largely forgotten and erased from history, as a long-standing desire to sanitise the actions of suffragettes and portray them as perfect activists, or perfect martyrs, has altered our perception of even those whose names we know. While some historians have begun to acknowledge the violence and extremism of the WSPU, there remains a dominant belief that its violence amounted to little more than firecrackers in tins, or a few well-aimed stones. This long-running historical myth has its roots among the suffragettes themselves.

While planning a potential memoir of Emily Wilding Davison in the early 1930s, Edith Mansell-Moullin wrote to Edith How-Martyn, one of the founders of the Suffragette Fellowship, asking for her advice on a difficult issue. Should she, ‘[as I do] … leave out the bombs?’ Similarly, Sylvia Pankhurst’s claim, made in her 1931 memoir, The Suffragette Movement: An Intimate Account of Persons and Ideals, that it was Emily Wilding Davison who bombed Lloyd George’s cottage, is rarely acknowledged. Laura E. Nym Mayhall, in her work on the militant suffrage movement, identified the importance of ‘a small group of former suffragettes’ in the 1920s and 1930s, who ‘created a highly stylised story’ of the WSPU and the history of suffrage in England, which emphasised ‘women’s martyrdom and passivity’. The group set about compiling the documents, memoirs and memorabilia that now form the basis of the Suffragette Fellowship Collection held by the Museum of London. Although this is a remarkable collection, Nym Mayhall points out that it has ‘come to serve as a basis for much of the current scholarship on the women’s suffrage movement’. The Fellowship decided what constituted appropriate suffrage history and which stories should be minimised or left out, creating a ‘master narrative of the militant suffrage movement’ and those it involved. At its most extreme, the Fellowship ‘lobbied to have incorrect passages excised from forthcoming memoirs or removed from subsequent editions of accounts already published’. As the suffragettes sanitised their own history, the real lives and experiences of the women who had fought so hard and risked so much became the reason for their exclusion. The bombs were deemed inappropriate and those who carried out these violent actions have been hidden from view. Kitty Marion, a prolific bomber and arsonist for the suffragettes – and later an important part of the founding of Planned Parenthood, then the Birth Control League – has been consistently ignored.

In her autobiography, written in the late 1930s, Marion left one of the most detailed accounts of the personal beliefs and actions of those who travelled across the country on the orders of the WSPU’s leadership to enact their self-proclaimed ‘Reign of Terror’. Her later role in the international campaign for birth control, operating between the US and the UK, offers one of the most interesting lives of an early feminist campaigner.

Read the full article in History Today.

The fundamental uncertainty of Mueller’s Russia indictment

Masha Gessen, New Yorker, 20 February 2018

Trump’s tweet about Moscow laughing its ass off was unusually (perhaps accidentally) accurate. Loyal Putinites and dissident intellectuals alike are remarkably united in finding the American obsession with Russian meddling to be ridiculous. The intellectuals are amused to see Americans so struck by an indictment that adds virtually nothing to a piece published in the Russian media outlet RBC, back in October; I wrote at the time that the article showed the Russian effort to be more of a cacophony than a conspiracy. The Kremlin and its media are, as Joshua Yaffa writes, tickled to be taken so seriously. Their sub-grammatical imitations of American political rhetoric, their overtures to the most marginal of political players, are suddenly at the very heart of American political life. This is the sort of thing Russians have done for decades, dating back at least to the early days of the Cold War, but those efforts were always relegated to the dustbin of history before they even began.

Goldman, the Facebook V.P., has seen more of the Russian ads and posts than most Americans, and his imagination clearly strains to accommodate the push to take them seriously. It’s hard to square words like ‘sophisticated’ (frequently used by the Times to describe the Russian campaign) with posts like one from an apparently fake L.G.B.T. group promoting something called ‘Buff Bernie: A Coloring Book for Berniacs’ with catchy English-language copy: ‘The coloring is something that suits for all people.’ It’s hard to apply the description ‘bold covert effort’ (used by Politico) to the enormous amount of social-network static that Russian trolls produced. To Goldman, it may all look like a giant gray mass in which only a few colorful ads and posts have any meaning—and that meaning is hard to discern.

The need to see the Russian effort as somehow meaningful and masterly has produced its own experts in the field. Molly McKew, who identifies as an ‘information warfare expert,’ has said that, back in the day, Soviet intelligence designed a ‘ninety/ten’ approach in order to ‘embed’ its agents in political communities: ninety per cent of what they produced mirrored what they saw, so that they could blend in before starting to sow discord. This idea makes so much sense that it doesn’t seem to matter that McKew offers no source for it or, indeed, any credentials for her own expertise. She is the CEO of a company called Fianna Strategies, which seems to be a tiny Washington-based lobbying operation that has worked for the opposition parties in Georgia and Moldova. McKew’s ‘information warfare expertise’ appears entirely self-styled, yet so great is our need for a rational interpretation of incomprehensible events that recently she has published extensively in Politico and appeared on Slate’s Trumpcast. Russians, meanwhile, have laughed parts of their anatomy off over her coverage of the Gerasimov Doctrine, which is a thing that, well, doesn’t exist.

The phantom Gerasimov Doctrine, described in Politico as a ‘new chaos theory of political warfare,’ sounds more sinister—but also, comfortingly, more serious—than the picture that emerges from the indictment, of Russian agents staging an elaborate production to travel to the United States to gather valuable intelligence, such as advice to ‘focus their activities on “purple states like Colorado, Virginia and Florida.”’ Americans’ apparent need to imagine a Russian adversary as cunning, masterly, and strategic is matched only by the Russians’ own belief in a solid, stable, unshakable American society. Stability is what Vladimir Putin has been promising Russians for eighteen years and still hasn’t delivered, making Russians all the more resentful of what they imagine as a predictable, safe American society. Americans, on the other hand, increasingly imagine American society as unstable and deeply at risk. While most people believe themselves to have a solid grip on reality, they imagine their compatriots to be gullible and chronically misinformed. This, in turn, means that we no longer have a sense of shared reality, a common imagination that underlies political life. In a society with a strong sense of shared reality, a bunch of sub-literate tweets and ridiculous ads would be nothing but a curiosity. Even the fact that Russians put money into organizing rallies and demonstrations across the political spectrum would be absurd: surely they didn’t force people to join these rallies. If sincerely held beliefs brought people to the rallies, then it makes no difference to the broader political life whether someone paid for an actress to take part, too (even if that or similar payments were themselves illegal).

Read the full article in the New Yorker.

Black politics after 2016

Adolph Reed Jr, Nonsite, 11 February 2018

Since the election, antiracist commentators and internet activists in particular have become even more aggressive in red-baiting Sanders and a politics centered on economic redistribution, and to do so they rely on a new vector of race-baiting—attacking advocacy of social-democratic politics as intrinsically racist or white supremacist. The move, as Reid implied in her response to Noah’s query, links advocacy of economic redistribution to the rightist turn urged by Penn and Stein and others, thus casting argument for appeal to working-class concerns as ipso facto an apology for white racism. Clintonites’ fabrication of the ‘Bernie bros’ bugbear is a cynical bourgeois (or ‘lean-in’) feminist version of that smear intended to paint advocacy of a redistributive politics as sexist.

This political tendency has congealed around a perspective that renders ‘working class’ as a white racial category and synonym for backwardness and bigotry and condemns working-class whites who voted for Trump as loathsome racists with whom political solidarity is indefensible. Antiracists and other identitarian Democrats reject suggestions that motives other than racism, sexism, homophobia, transphobia, or xenophobia may have been significant in generating the Trump vote. In this struggle for interpretation, which is also a struggle over strategic direction, antiracist identitarians and the Clintonite right-wing deny that many working people, including those whom Les Leopold (‘How to Win Back Obama, Sanders, and Trump Voters,’ Commondreams.org, February 10, 2017) describes as Obama/Sanders/Trump voters—who were not exclusively white—may have good reasons to feel betrayed by both parties and that those reasons may have been a significant factor in their decisions to vote for Trump. In the antiracist line of argument, although having voted previously for Obama once or even twice cannot be taken as evidence that white voters were not racist, or motivated primarily by race, having voted for Trump is incontrovertible evidence that they are.

The upshot of that view is to write off what, according to Larry Sabato’s estimate (‘Just How Many Obama 2012-Trump 2016 Voters Were There?’ Sabato’s Crystal Ball, June 1, 2017), may amount to between nearly seven million and more than nine million voters, many of whose support for Trump surely stemmed from concerns more complex than commitment to white supremacy. Some percentage of those people, and there is no way to know how many until we try to connect with them politically, voted for Trump at least partly for reasons similar to those for which they voted for Obama (see, for example, Leslie Lopez, ‘“I Believe Trump Like I Believed Obama”: A Case Study of Two Working-Class “Latino” Voters, My Parents,’ nonsite.org, November 28, 2016). Dismissing those voters, and, more to the point, dismissing appeals to broad economic and social wage concerns, leaves as the only political possibility for the left a version of the coalition Reid adduced as the Democrats’ proper base, including the key component she evoked but did not mention explicitly—Wall Street and Silicon Valley money.

Read the full article on Nonsite.

Toughing it out in Cairo

Yasmine El Rashidi, New York Review of Books, 22 February 2018

More and more, on the streets of Cairo, in government offices, and in informal settlements on the outskirts of the city, I heard references to Syria: ‘We could have ended up like them.’ Disappointment seemed to be bracketed by this comparison. Islamist supporters with whom I had long-standing relations were harder to engage; those I did get through to generally shook their heads, shrugged, and said there was ‘nothing to say.’

Egyptians were commonly criticized as ‘apathetic’ or ‘inert,’ but they might more accurately have been described as ‘passive.’ Government employees, people tending to their lives, those who spoke and those who did not—all were making a calculated choice. Passivity has been their particular mode of survival.

This same passive disposition had come to mark many of the rest of us too—activists, intellectuals, and people on the left. I kept tabs on the shrinking number of people who showed up to protest, and then on the decreasing number of protests. Only a handful of people still voiced their dissent, including Laila Soueif, the matriarch of a family of longtime activists, whose son Alaa Abdel Fattah is serving a five-year prison sentence on trumped-up charges; or the team behind the online paper Mada Masr, led by the journalist and editor Lina Attalah, who continued to publish despite scrutiny and censorship (the paper’s website was eventually blocked, along with 127 others). The risks of human rights work had become almost prohibitive, with arrests, disappearances, and travel bans all commonplace. I counted the number of activists, academics, and artists who had left the country, and friends who were emigrating. Regeni’s name often came up in conversations—his murder lingered in our minds.

More and more of my friends and acquaintances were expressing discomfort, even fear, over the punitive and increasingly severe measures taken by the state. A friend’s activist neighbor was dragged from his home in the night and disappeared for four days on allegations of being an ‘Islamist sympathizer’ (he was not); a writer was imprisoned, on grounds of ‘offending public morals,’ for sexually explicit scenes in a novel; gay men were being hunted by undercover police on the hookup app Grindr; a poet was jailed on charges of ‘blasphemy’ and ‘contempt of religion’ for calling the slaughter of sheep during a Muslim feast ‘the most horrible massacre committed by humans’; two women were threatened with jail for allegedly ‘kissing’ in a car (they were not). It was around this time that I started to become conscious of what I kept on my phone and downloaded a virtual private network to divert my Web presence from Cairo to Italy.

I also observed, in myself and my friends, how inured we had become to the events of our own recent history, which were landmarked by the sites where they had occurred: this was where the Copts got trampled by army tanks; on this street corner I saw a pile of dead bodies; here supporters of Morsi opened fire on young activists; there two hundred people were killed at the hands of the police; and this was where the prosecutor general was assassinated by a car bomb. It was only as I made these mental notes that I realized how I, too, had slipped into some variation of the so-called inertia. A friend one evening described our often-dulled responses to news and events that once enraged us as a type of PTSD.

Read the full article in the New York Review of Books.

Surveillance Valley

Yasha Levine, The Baffler, 6 February 2018

Google is one of the wealthiest and most powerful corporations in the world, yet it presents itself as one of the good guys: a company on a mission to make the world a better place and a bulwark against corrupt and intrusive governments all around the globe. And yet, as I traced the story and dug into the details of Google’s government contracting business, I discovered that the company was already a full-fledged military contractor, selling versions of its consumer data mining and analysis technology to police departments, city governments, and just about every major U.S. intelligence and military agency. Over the years, it had supplied mapping technology used by the U.S. Army in Iraq, hosted data for the Central Intelligence Agency, indexed the National Security Agency’s vast intelligence databases, built military robots, colaunched a spy satellite with the Pentagon, and leased its cloud computing platform to help police departments predict crime. And Google is not alone. From Amazon to eBay to Facebook—most of the Internet companies we use every day have also grown into powerful corporations that track and profile their users while pursuing partnerships and business relationships with major U.S. military and intelligence agencies. Some parts of these companies are so thoroughly intertwined with America’s security services that it is hard to tell where they end and the U.S. government begins.

Since the start of the personal computer and Internet revolution in the 1990s, we’ve been told again and again that we are in the grips of a liberating technology, a tool that decentralizes power, topples entrenched bureaucracies, and brings more democracy and equality to the world. Personal computers and information networks were supposed to be the new frontier of freedom—a techno-utopia where authoritarian and repressive structures lost their power, and where the creation of a better world was still possible. And all that we, global netizens, had to do for this new and better world to flower and bloom was to get out of the way and let Internet companies innovate and the market work its magic. This narrative has been planted deep into our culture’s collective subconscious and holds a powerful sway over the way we view the Internet today.

But spend time looking at the nitty-gritty business details of the Internet and the story gets darker, less optimistic. If the Internet is truly such a revolutionary break from the past, why are companies like Google in bed with cops and spies?

Read the full article in the Baffler.

When government drew the color line

Jason DeParle, New York Review of Books, 22 February 2018

In 2007 a sharply divided Supreme Court struck down plans to integrate the Seattle and Louisville public schools. Both districts faced the geographic dilemma that confounds most American cities: their neighborhoods were highly segregated by race and therefore so were many of their schools. To compensate, each district occasionally considered a student’s race in making school assignments. Seattle, for instance, used race as a tie-breaking factor in filling some oversubscribed high schools. Across the country, hundreds of districts had similar plans.

Justice Stephen G. Breyer, writing for the court’s liberal wing in the case, Parents Involved in Community Schools v. Seattle School District No. 1, argued that the modest use of race served essential educational and democratic goals and kept faith with the Court’s ‘finest hour,’ its rejection of segregation a half-century earlier in Brown v. Board of Education. But Chief Justice John G. Roberts Jr., representing a conservative plurality, called any weighing of race unconstitutional. ‘The way to stop discrimination on the basis of race is to stop discriminating on the basis of race,’ he wrote. Crucial to his reasoning was the assertion that segregation in Seattle and Louisville was de facto, not de jure—a product of private choices, not state action. Since the state didn’t cause segregation, the state didn’t have to fix it—and couldn’t fix it by sorting students by race.

Richard Rothstein, an education analyst at the left-leaning Economic Policy Institute, thinks John Roberts is a bad historian. The Color of Law, his powerful history of governmental efforts to impose housing segregation, was written in part as a retort. ‘Residential segregation was created by state action,’ he writes, not merely by amorphous ‘societal’ influences. While private discrimination also deserves some share of the blame, Rothstein shows that ‘racially explicit policies of federal, state, and local governments…segregated every metropolitan area in the United States.’ Government agencies used public housing to clear mixed neighborhoods and create segregated ones. Governments built highways as buffers to keep the races apart. They used federal mortgage insurance to usher in an era of suburbanization on the condition that developers keep blacks out. From New Dealers to county sheriffs, government agencies at every level helped impose segregation—not de facto but de jure.

Rothstein calls his story a ‘forgotten history,’ not a hidden one. Indeed, part of the book’s shock is just how explicit the government’s racial engineering often was. The demand for segregation was made plain in workaday documents like the Federal Housing Administration’s Underwriting Manual, which specified that loans should be made in neighborhoods that ‘continue to be occupied by the same social and racial classes’ but not in those vulnerable to the influx of ‘inharmonious racial groups.’ A New Deal agency, the Home Owners’ Loan Corporation, drew color-coded maps with neighborhoods occupied by whites shaded green and approved for loans and black areas marked red and denied credit—the original ‘redlining.’ The FHA financed Levittown, the emblem of postwar suburbanization, on the condition that none of its 17,500 homes be sold to blacks. The policies on black and white were spelled out in black and white.

Read the full article in the New York Review of Books.

For Haiti, the Oxfam scandal is just the latest in a string of insults

Joel Dreyfus, Washington Post, 19 February 2018

Haitians have long known that foreign intervention — whether it be military or charitable — comes at a price to your sovereignty, as well as your dignity. The American military occupation of Haiti between 1915 and 1934 built much-needed infrastructure, but it created an indigenous military organization that would interfere in Haitian political life well into the 1990s. President Bill Clinton’s invasion in 1994 restored Haiti’s first democratically elected president to power, but the United States extracted concessions from President Jean-Bertrand Aristide on import duties that destroyed much of Haiti’s agricultural self-sufficiency. United Nations peacekeepers were sent to maintain order in Haiti after the devastating earthquake of Jan. 12, 2010, but instead are believed to be responsible for an outbreak of cholera that has since killed thousands of Haitians.

The earthquake itself killed 225,000 Haitians and injured 300,000 others, and brought a flood of foreign assistance. By some estimates, as many as 10,000 nongovernmental organizations, or NGOs, provided emergency medical care, fed survivors and built temporary housing. Haitians were genuinely touched by the outpouring of support and the pledges of aid that reached $10 billion. It seemed that Haiti, the proud black republic and the first nation in the New World to abolish slavery, would get a well-deserved boost toward a more positive future.

Eight years later, most of the optimism has disappeared. Much of the rubble caused by the earthquake has been removed, but little has been rebuilt. A majority of Haitians never saw the benefits of the promised billions. The country remains nominally democratic; fewer than 20% bothered to vote in the last presidential election. Since then, factions within the legislature have fought over perks and traded accusations of corruption.

Read the full article in the Washington Post.

Freelancing abroad in a world obsessed with Trump

Yardena Schwartz, Columbia Journalism Review, 30 January 2018

‘I can’t make a living reporting from the Middle East anymore,’ said Sulome in mid-December. ‘I just can’t justify doing this to myself.’ The day we spoke, she heard that Foreign Policy, one of the most reliable destinations for freelancers writing on-the-ground, deeply reported international pieces, would be closing its foreign bureaus. (CJR independently confirmed this, though it has not been publicly announced.) ‘They are one of the only publications that publish these kinds of stories,’ she said, letting out a defeated sigh. (Disclosure: Sulome was a classmate of mine at Columbia Journalism School from 2010 to 2011.)

In 2015, Sulome published 16 major feature stories, while also writing her book. In 2016, she published 12, though she was busy doing publicity for her book. In 2017, the total dropped to nine stories, though she was reporting and pitching full time. She now plans to spend her time focusing on her next book, which is about radicalism in America.

Sulome blames a news cycle dominated by Donald Trump. Newspapers, magazines, and TV news programs simply have less space for freelance international stories than before—unless, of course, they directly involve Trump.

According to a study by Harvard’s Shorenstein Center on Media, Politics and Public Policy, Trump was the focus of 41 percent of American news coverage in his first 100 days in office. That’s three times the amount of coverage showered on previous presidents. This laser-eyed focus on Trump has left little room for other crucial stories.

‘Trump has been a ratings magnet on TV and in print,’ says Rick Edmonds, the Poynter Institute’s media business analyst. ‘There’s a pretty clear indication that aggressive reporting on Trump is giving The New York Times, The Washington Post, and others a boost. A large portion of the population thinks he’s a maniac and wants to read about him every day. That tends to reduce attention on the rest of the world.’

Read the full article in the Columbia Journalism Review.

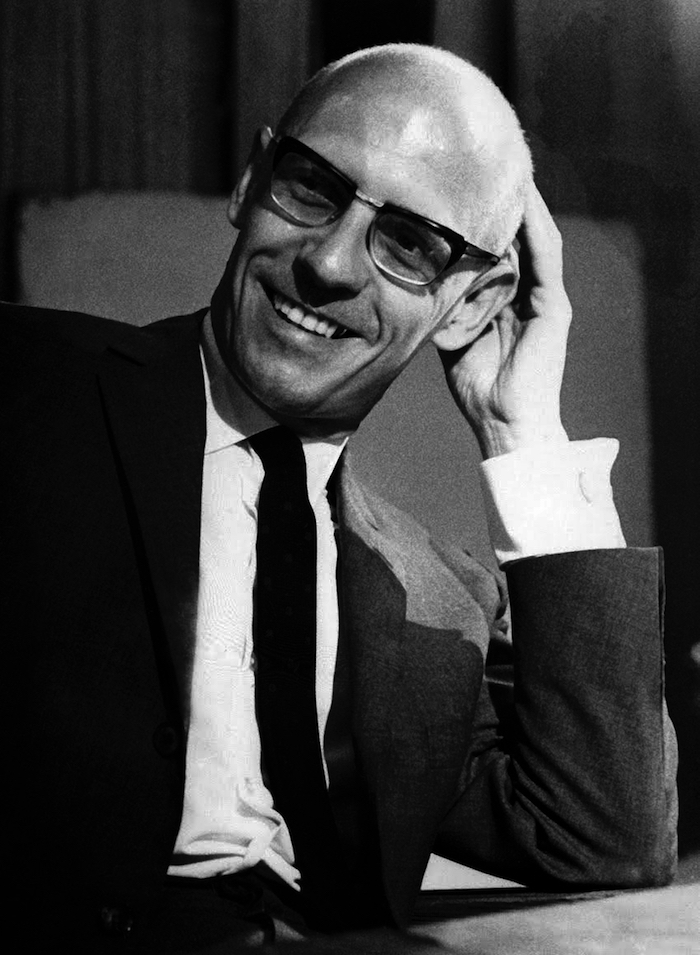

Michel Foucault’s unfinished book published in France

Peter Libbey, New York Times, 8 February 2018

Foucault’s unfinished investigation into the topic of sexuality in early Christian thought and practice is the fourth book in his ‘History of Sexuality’ project. The three previously published volumes addressed sexuality during the modern period, from the 17th century to the mid-20th century; in ancient Greece; and in the Roman world. In them Foucault hoped to explain how sexuality became an object of scientific study and a subject of moral preoccupation.

Foucault was unable to finish ‘Confessions of the Flesh’ before his death in 1984 from an AIDS-related illness.

The decision to publish the book was spurred by the sale in 2013 of the Foucault archives, which contained both a handwritten version of ‘Confessions of the Flesh’ and a typed manuscript that Foucault had begun to correct, to the National Library of France by his longtime partner, Daniel Defert. Once that material became available to researchers, Foucault’s family, who hold the rights to his work, decided it should be shared more widely.

Henri-Paul Fruchaud, Foucault’s nephew, said that a third manuscript, a typed version of the handwritten text in the archive, was already in Gallimard’s possession, but it was incomplete and contained errors. ‘With all three versions in my hands, I realized that it was possible to have a proper final edition,’ Mr. Fruchaud said.

Anyone hoping that the new work will slot neatly into contemporary debates about sexuality may be surprised by what they find. ‘Foucault essentially says you can’t look for solutions to the present in the past. It’s not like we can read off these classical texts some kind of code by which we should now live. I think that some people want that in Foucault,’ said Stuart Elden, a Foucault scholar at the University of Warwick in Britain and the author of ‘Foucault’s Last Decade,’ in a telephone interview.

Read the full article in the New York Times.

Against the technocrats

Sheri Berman, Dissent, Winter 2018

Over the past few years concerns about ‘unchecked’ democracy and rule by the people have exploded—but such concerns have been around as long as democracy itself. The ancient Greeks commonly equated democracy with mob rule. Aristotle, for example, worried about democracy’s tendency to degenerate into ‘chaotic rule by the masses’ and in Plato’s The Republic, Socrates argues that given power and freedom the masses will indulge their passions, destroy traditions and institutions, and be easy prey for tyrants. Classical liberals, meanwhile, lived in mortal fear of democracy, convinced that once given power ‘the people’ would trample the liberties and confiscate the property of elites. Great liberal thinkers like Tocqueville, John Stuart Mill, and Ortega y Gasset constantly worried about democracy leading to a ‘tyranny of the majority’ and the masses’ susceptibility to illiberal dictators.

While concerns about illiberalism, populism, and majoritarianism are certainly well-founded, blaming such phenomena on an ‘excess’ of democracy is not. Such arguments rest on a fundamental misunderstanding of how liberal democracy has historically developed and how liberalism and democracy actually interact.

With regard to the former, many analysts argue that the problems facing many new democracies are the consequence of democratization preceding the establishment of liberalism. In such situations the ‘passions of the people’ run rampant, unleashing dangerous forces that make it extremely difficult to establish stable liberal democracy down the road. Fareed Zakaria, for example, argues that ‘constitutional liberalism has led to democracy, but democracy does not seem to bring constitutional liberalism.’ Similarly, political scientists Jack Snyder and Edward Mansfield argue that if democratization occurs in countries where liberal traditions are not firmly established, illiberalism and conflict will likely result. ‘Premature, out-of-sequence attempts to democratize may make subsequent efforts to democratize more difficult and more violent than they would otherwise be.’

As for the latter argument, many contend that the problems facing established Western democracies are the consequence of an ‘excess’ of democracy and a concomitant withering of liberalism. As commentator Andrew Sullivan put it, we are living in ‘hyperdemocratic times,’ with the ‘passions of the mob’ running rampant and posing a danger to democracy itself. ‘Democracies end,’ the title of his piece in New York magazine reads, ‘when they are too democratic.’

Both of these arguments are wrong. Historically, illiberal democracy has been a stage on the route to liberal democracy rather than the end point of a country’s political trajectory. Indeed, in the past, the experience of, or lessons learned from, flawed and even failed democratic experiments have played a crucial role in helping societies appreciate liberal values and institutions. And many of the problems that have emerged in Western democracies today are not the result of ‘hyperdemocratization’—but the exact opposite. Over the past decades, democratic institutions and elites have become increasingly out of touch with and insulated from the people, contributing greatly to the anger, frustration, and resentment that is eating away at liberal democracy today.

Read the full article in Dissent.

British government’s new ‘anti-fake news’ unit has been tried before – and it got out of hand

Dan Lomas, The Conversation, 25 January 2018

Details of the new anti-fake news unit are vague, but may mark a return to Britain’s Cold War past and the work of the Foreign Office’s Information Research Department (IRD), which was set up in 1948 to counter Soviet propaganda. The unit was the brainchild of Christopher Mayhew, Labour MP and under-secretary in the Foreign Office, and grew to become one of the largest Foreign Office departments before its disbandment in 1977 – a story revealed in The Guardian in January 1978 by its investigative reporter David Leigh.

This secretive government body worked with politicians, journalists and foreign governments to counter Soviet lies, through unattributable ‘grey’ propaganda and confidential briefings on ‘Communist themes’. IRD eventually expanded from this narrow anti-Soviet remit to protect British interests where they were likely ‘to be the object of hostile threats’.

Examples of IRD’s early work include reports on Soviet gulags and the promotion of anti-communist literature. George Orwell’s work was actively promoted by the unit. Shortly before his death in 1950, Orwell even gave it a list of left-wing writers and journalists ‘who should not be trusted’ to spread IRD’s message. During that decade, the department even moved into British domestic politics by setting up a ‘home desk’ to counter communism in industry.

IRD also played an important role in undermining Indonesia’s President Sukarno in the 1960s, as well as supporting western NGOs – especially the Thomson and Ford Foundations. In 1996, former IRD official Norman Reddaway provided more information on IRD’s ‘long-term’ campaigns (contained in private papers). These included ‘English by TV’ broadcast to the Gulf, Sudan, Ethiopia and China, with other IRD-backed BBC initiatives – ‘Follow Me’ and ‘Follow Me to Science’ – which had an estimated audience of 100m in China.

IRD was even involved in supporting Britain’s entry to the European Economic Community, promoting the UK’s interests in Europe and backing politicians on both sides. It would shape the debate by writing a letter or article a day in the quality press. The department was also involved in more controversial campaigns, spreading anti-IRA propaganda during The Troubles in Northern Ireland, supporting Britain’s control of Gibraltar and countering the ‘Black Power’ movement in the Caribbean.

IRD’s activities were steadily getting out of hand, yet an internal 1971 review found the department was still needed, given ‘the primary threat to British and Western interests worldwide remains that from Soviet Communism’ and the ‘violent revolutionaries of the “New Left”’.

Read the full article in the Conversation.

Godzooks

David Berlinski, Inference, February 2018

Harari is a Whig historian, but he is not a Whig optimist. He is proud of himself as a man prepared to see things as they really are. Whether they really are as he sees them is rather less clear. Homo Deus is a work of speculation. Standards are looser than they might otherwise be. This is inevitable. In writing about the near future, Harari is guessing, and in writing about the far future, when human beings have long promoted themselves into the inorganic world, he is guessing again. For all that, Homo Deus is intended as a work of history, and the speculations in which Harari is engaged follow a familiar logical pattern. They are like initial value problems in physics. The future that Harari discerns must be projected from the historical present. The historical present. The day before yesterday is not good enough. Something more spacious is needed, some sense of the times in which we live.

‘During the second half of the twentieth century,’ Harari writes, ‘[the] Law of the Jungle has finally been broken, if not rescinded.’ By the law of the jungle, he means the state of civil society under conditions of war, famine, and disease. These are the conditions under which humanity has long lived and suffered. If they have not entirely disappeared, they are, at least, in abeyance.

Are they? Are they really? ‘Whereas in ancient agricultural societies,’ Harari writes, ‘human violence caused about 15 percent of all deaths, during the twentieth century violence caused only 5 percent of deaths, and in the early twenty-first century it is responsible for about 1 percent of global mortality.’ Stone age violence no longer commands anyone’s moral interest, or indignation, and, in any case, Harari’s assessment of prehistoric violence is, as Brian Ferguson observed, ‘utterly without empirical foundation.’ Seventeen years into the twenty-first century, we remain bound to the twentieth, haunted by its horrors. Harari’s assertion that during the twentieth century violence caused only five percent of deaths worldwide is morally obtuse. The decline in violence is, most often, a statistical artefact of the growth in the world’s population. Roughly six million Poles of Poland’s pre-war population of thirty-five million died during the Second World War—seventeen percent, or almost one in five. The world’s population in 1939 was 2.3 billion. Point two percent of the world’s population perished in Poland. Which number better expresses the horror: seventeen percent or point two percent? Would the horror have been less had the population of South America been greater? Neither murder nor genocide has in the twentieth century been randomly distributed. The world’s population is an irrelevance.

‘In most countries today,’ Harari writes, ‘overeating has become a far worse problem than famine.’ There are today famines taking place or about to take place in northern Nigeria, Somalia, Yemen, and South Sudan. Some twenty million people, Secretary-General António Guterres of the United Nations observed, are at risk. They are not at risk because fat people are fat. They are at risk because they have no food. If Chinese peasants are becoming obese, it has not been widely reported. Of the most terrible famines in history, many took place in the twentieth century. Persia suffered famine between 1917 and 1919. Eight to nine million people died. A sixth of the population of Turkestan died of hunger between 1917 and 1921. Famine in Russia in 1921 caused five million deaths; from 1928 to 1930, famine in northern China, three million. Famine in the Ukraine between 1931 and 1934 caused five million deaths; famine in China in 1936, five million. One million people died of famine in Leningrad during its wartime blockade by the German army. In 1942 and 1943, famines in China and Bengal caused between three and five million deaths. Two and a half million Javanese died of hunger during the Japanese occupation. The little-known Soviet famine of 1947 caused roughly one and one half million deaths. Famine killed between fifteen and forty million Chinese between 1959 and 1961. One million people died of hunger during the Sahel drought between 1968 and 1972. Of hunger, note, and not obesity. The North Korean famine of 1996 was responsible for between three hundred thousand and 3.5 million deaths. No one knows its true extent—circumstances that, if they did not chill the blood, should have stayed the hand of historians writing about the disappearance of famine in the modern world. The Second Congo War, between 1998 and 2004, caused almost four million deaths from starvation. In 1998, three hundred thousand died in Somalia. They died because they had nothing to eat.

Read the full article in Inference.

India conquered: Britain’s Raj and the chaos of Empire

Michael S Dodson, et al, Reviews in History, 15 February 2018

Wilson’s history of the British Raj manages to be both a general history and a revisionist history; a history that is accessible to those with a passing interest in Indian affairs of the past and one that can be read with real interest (even relish) by the specialist. It is also a much needed antidote for those in Britain foolish enough to believe that empire made Britain great. This book is an ambitious undertaking, moreover, and a deeply satisfying one, that left me having learnt not only some new facts about Britain’s Indian empire but also rethinking some of the fundamental arguments I’ve come to adopt about the character of Britain’s empire in India, its making, and its unmaking. It never reduces historical causation to the product of a single individual (even when, in certain cases, the personalities involved might nearly warrant it – I’m thinking here of Robert Clive) and while it flirts on occasion with an excess of detail (Indian history can be quite complicated, despite what some on the political right might have us believe) it never loses sight of its point.

Wilson’s core argument is that the British empire in India was never as unified as it claimed; never as authoritative as it imagined itself to be; and never as confident in its aims and purposes as it projected. The British empire in India, Wilson writes, was ‘never a project or system. It was far more anxious and chaotic’ (p. 9). Wilson is on his strongest ground when discussing the East India Company period. He is adept at describing the start-stop progress of the Company’s trade, the intellectual/ideological motivations of the Company’s policies and practices, and the ways in which the Company interacted with Indian state actors.

We often read in accounts of the Company that when it conquered an area of land (as it often did in the 18th century!) then that area came unproblematically under its authority. From that point on, that account will simply move on to another part of the narrative of unstoppable British conquest (next the Marathas, then the Sikhs …). Yet Wilson shows us that in many cases, such as the famous defeat of Tipu Sultan of Mysore in 1799, the moment of conquest was just a beginning, and the chaos and violence that erupted in its aftermath was far worse than we have acknowledged. It is hard to overestimate how important this is. The defeat of Tipu Sultan in 1799 stands in narratives of the British empire as a moment of victory that marks the transition to a confident, powerful, and successful British colonial state in the 19th century. This is certainly how Niall Ferguson portrays it in his Empire. Wilson, in contrast, reminds us of the Poligar Wars (1799–1801) that followed, in which local landlords seized power and refused to accede to British claims of sovereignty. These were bloody and dangerous times for all concerned.

Read the full article in Reviews in History.

Why do white people like what I write?

Pankaj Mishra, London Review of Books, 22 February 2018

He doesn’t have a perch in academia, where most prominent African-American intellectuals have found a stable home. Nor is he affiliated to any political movement – he is sceptical of the possibilities of political change – and, unlike his bitter critic, Cornel West, he is an atheist. Identified solely with the Atlantic, a periodical better known for its oligarchic shindigs than its subversive content, Coates also seems distant from the tradition of black magazines like Reconstruction, Transition and Emerge, or left-wing journals like n+1, Dissent and Jacobin. He credits his large white fan club to Obama. Fascination with a black president, he thinks, ‘eventually expanded into curiosity about the community he had so consciously made his home and all the old, fitfully slumbering questions he’d awakened about American identity.’ This is true, but only in the way a banality is true. Most mainstream publications have indeed tried in recent years to accommodate more writers and journalists from racial and ethnic minorities. But the relevant point, perhaps impolitic for Coates to make, is that those who were assembling sensible arguments for war and torture in prestigious magazines only a few years ago have been forced to confront, along with their readers, the obdurate pathologies of American life that stem from America’s original sin.

Coates, followed by the ‘white working classes’, has surfaced into liberal consciousness during the pained if still very partial self-reckoning among American elites that began with Hurricane Katrina. Many journalists have been scrambling, more feverishly since Trump’s apotheosis, to account for the stunningly extensive experience of fear and humiliation across racial and gender divisions; some have tried to reinvent themselves in heroic resistance to Trump and authoritarian ‘populism’. David Frum, geometer under George W. Bush of an intercontinental ‘axis of evil’, now locates evil in the White House. Max Boot, self-declared ‘neo-imperialist’ and exponent of ‘savage wars’, recently claimed to have become aware of his ‘white privilege’. Ignatieff, advocate of empire-lite and torture-lite, is presently embattled on behalf of the open society in Mitteleuropa. Goldberg, previously known as stenographer to Netanyahu, is now Coates’s diligent promoter. Amid this hectic laundering of reputations, and a turnover of ‘woke’ white men, Coates has seized the opportunity to describe American power from the rare standpoint of its internal victims.

As a self-professed autodidact, Coates is primarily concerned to share with readers his most recent readings and discoveries. His essays are milestones in an accelerated self-education, with Coates constantly summoning himself to fresh modes of thinking. Very little in his book will be unfamiliar to readers of histories of American slavery and the mounting scholarship on the new Jim Crow. Coates, who claimed in 2013 to be ‘not a radical’, now says he has been ‘radicalised’, and as a black writer in an overwhelmingly white media, he has laid out the varied social practices of racial discrimination with estimable power and skill. But the essays in We Were Eight Years in Power, so recent and much discussed on their first publication, already feel like artefacts of a moribund social liberalism. Reparations for slavery may have seemed ‘the indispensable tool against white supremacy’ when Obama was in power. It is hard to see how this tool can be deployed against Trump. The documentation in Coates’s essays is consistently impressive, especially in his writing about mass imprisonment and housing discrimination. But the chain of causality that can trace the complex process of exclusion in America to its grisly consequences – the election of a racist and serial groper – is missing from his book. Nor can we understand from his account of self-radicalisation why the words ‘socialism’ and ‘imperialism’ became meaningful to a young generation of Americans during what he calls ‘the most incredible of eras – the era of a black president’. There is a conspicuous analytical lacuna here, and it results from an overestimation, increasingly commonplace in the era of Trump, of the most incredible of eras, and an underestimation of its continuities with the past and present.

Read the full article in the London Review of Books.

What you don’t know about abolitionism

Manisha Sinha & Robin Lindley,

History News Network, 16 February 2018

Robin Lindley: You describe a first and a second wave of abolitionism in the United States from colonial times to the Civil War, and you also explored transnational trends. Are these original formulations with your book?

Professor Manisha Sinha: I think there were historians who looked at connections between the American and British abolitionist movements. David Brion Davis in his trilogy on the problem of slavery looked at slavery through a cross-national lens and through a long period.

What is different about my book is the [focus on] the slaves themselves—their motivations and actions in the movement. Also, the second thing was to look at how abolitionism overlapped with contemporary revolutionary and progressive movements. The abolitionists were trying to harness international progressive forces against slavery.

In the first wave [colonial times to 1830], we had understudied the influence of the Haitian Revolution [1791-1804] both on black abolitionists and the abolitionist movement as a whole.

The usual picture for the Haitian Revolution was a negative one – that slaveholders became extremely paranoid and clamped down on any sign of opposition. That is true, but the Haitian Revolution also occupied an important place in the abolitionist imagination, including the American abolitionist imagination, for invoking violence in self-defense on behalf of the slaves. This is true not only of black abolitionists but also of white abolitionists who were pacifists and believed in nonviolence. For me, that story was fascinating.

On the international level again, there was the Anglo-American movement for the abolition of the African slave trade and, by the antebellum period, the democratic revolutions in Europe in the 1830s and 1848. And there was an overlap between abolition and radical international movements such as feminism, utopian socialism, pacifism, anti-imperialism.

I think previous historians are too quick to dismiss abolitionism as some sort of bourgeois reform movement that held up the status quo in a market society. Instead, I found that abolitionists were critical not just of slavery but also of other injustices and inequalities in their world. I think that is a fairly new perspective because most historians had argued the opposite.

Read the full article on the History News Network.

Stop replacing London’s phone boxes with corporate surveillance

Ross Atkin, Wired, 16 February 2018

Over the last few months an unfamiliar object has begun to appear on London’s streets. Ratty old BT payphones, that of late had only functioned as advertising space and unofficial public toilets, are being replaced by internet-connected ‘Links’ kiosks as part of a consortium of BT, outdoor advertising giant Primesight and Intersection, a smart cities firm which counts Alphabet subsidiary Sidewalk Labs as an investor.

In exchange for free wifi and phone charging – and even free calls if you are prepared to shout into the kiosk’s side, as apparently over 86,000 desperate callers have already done – users allow the consortium to identify them and track their movements through the city.

Here’s how it works. You approach the kiosk and connect your phone to ‘_InLinkUK from BT Free Wi-Fi’. Then there’s a login page. You submit your email address and accept the terms of use and privacy policy. At the same time, the kiosk also collects your device type and MAC address, an identifier unique to each individual wireless device.

Congratulations! Now you have allowed your personal data to be used by the three companies involved, potentially alongside third parties, who – those T&Cs state – ‘may include advertising partners or other providers that help [the consortium] understand [its] users’, to target tailored advertising and monitor its effectiveness.

There may be other sensors collecting other types of data, but at this point we cannot know for sure. Unlike a consumer Internet of Things product, which would have been purchased, disassembled and analysed component by component by vigilant members of the tech community as soon as it went on sale, a teardown of Links kiosks (and other bits of connected public infrastructure) cannot be accomplished without committing some very public acts of vandalism and theft.

We do know from the published FAQs that each kiosk contains three cameras which ‘are turned off at the moment’. (Although presumably they are not just there for decoration.) We also know that the kiosks are designed to be ‘modular and extensible’, and BT’s publicity mentions that the Links will ‘feature sensors, which can capture real-time data relating to the local environment – including for example air and noise pollution, outdoor temperature and traffic conditions’. These sensors are not mentioned in the current privacy policy, which is ‘under regular review’ and so presumably subject to change at the convenience of InLinkUK.

Read the full article in Wired.

Art et Liberté: Egypt’s surrealists

Charles Shafaieh, New York Review Daily, 3 February 2018

Though Art et Liberté was universalist in its philosophical convictions, the writing and visual art produced for the group’s five exhibitions and multiple publications—of which more than a hundred works and a similar number of archival materials are on display at the Tate—responded to specific Egyptian concerns. The Egyptian group’s work was no mere imitation of that of André Breton and his associates in the Parisian Surrealist scene, which tends to be regarded by critics as the movement’s one and true home. Rather, Egypt had its own distinct history and a style of Surrealism that, some argued, stretched into its ancient past. Painter, writer, and founding member of Art et Liberté Kamel el-Telmisany responded to public criticism of the group that it was contaminating Egyptian culture with European perversions: ‘Many of the Pharaonic sculptures… are surrealist… Much Coptic art is surrealist. Far from aping a foreign artist movement, we are creating art that has its origins in the brown soil of our country and which has run through our blood ever since we have lived in freedom and up until now.’

He and the painter and writer Ramses Younan criticized Dalí and Magritte as too premeditated, and the practitioners of automatic writing and drawing as insufficiently socially engaged. They instead advocated for what they called Free Art, or Subjective Realism—an active mining of the unconscious fused with local imagery that would be familiar to Egyptians, but not fetishistic or nationalistic (a crime that Henein leveled against the Contemporary Art Group that succeeded Art et Liberté). The results were eclectic, as often Expressionist in style as overtly Surrealist, and occasionally humorous, such as Étienne Sved and Abduh Khalil’s irreverent parodies of Pharaonic symbols, which included transforming a pyramid into a chaise lounge.

Egypt in the late 1930s was a nation already deeply divided, with fascism’s growing appeal and Britain hindering any opportunity of national autonomy. As war approached, its economy stalled and poverty increased sharply as thousands of troops from across the Commonwealth descended on the country. The disturbed times found expression in images such as the bloodshot eye embedded within a mass of bulbous tentacles in Laurent Marcel Salinas’s Naissance (1944); the hirsute tree in Samir Rafi’s Nudes (1945) standing before faceless men and women either dead or running from an unidentifiable threat; and the naked girl, lost both to a pool of flames and a menacing giant, in Inji Efflatoun’s Girl and Monster (1941).

Read the full article in NYRB Daily.

Poetry of Puritanism

Marilynne Robinson, Times Literary Supplement, 14 February 2018

Europeans often say our culture is Puritan – Lollard, according to Freud – and we don’t know enough history to understand what they might mean by this. We have made a project of freeing ourselves of even minimal standards of taste or discretion, and still the word clings. Ethical rigor, aversion to display, the ideal of vocation are all diminished things among us, and still we are Puritan. Most recently I heard us denounced in these terms at a dinner table in London. How horrifying our rules against sexual harassment! It is the most natural thing in the world for students to fall in love with their professors, subordinates with their superiors! And so on. My suggestion that this might all seem very different from the perspective of the student or the subordinate, and my thoughts about fairness, merit, and so on, were not of interest. They were merely one more Puritanical pretext for denying the pleasures of life. I think in many cases Puritanical may simply mean ‘reformist,’ tending to assume that even very settled cultural patterns and practices can be called into question, that they are not presumptively endorsed by culture, that what is traditional cannot claim therefore to be rooted in human nature. We tend to forget that our revolution was one in a series – Geneva expelled its Savoyard rulers and was governed by elected councils. The Dutch expelled the Hapsburg emperor and in the process trained sympathetic British volunteers who took the experience home with them. Then with the Puritan Revolution England tried and executed its king and attempted a decade of parliamentary government. More than a century later the American colonies rejected monarchy as a system on the basis of the abuses of the king then in power. This is not logical, strictly speaking, but it affiliated the Americans with the great precedent of the English revolution, the revolution of Milton and Marvell. 

The most revolutionary idea contained in all this is that society as a whole can be and should be reformed. This Puritan energy does indeed continue to animate American life.

Sometimes on the strength of a reformist movement history is recovered – there are innumerable instances of this in the last half century, in this country often as a consequence of the civil rights movement. In Europe, perhaps as a secondary consequence of this movement, Britain, the Netherlands, and other countries have begun to acknowledge the enormous extent of their roles in the Atlantic slave trade. It says a great deal about human consciousness, collective and individual, that a phenomenon of such scale, duration, and consequence could be obscured so thoroughly. One of the many advantages of my long life is that I remember how things were before that old oblivion began to break up a little. The past really is a foreign country, even the near past, the one you have lived through. You have to have been there to have anything like a true sense of it. This does not make the past understandable, any more than living now makes the present understandable, but it does allow you to call to mind the cohesion of its elements, from which the whole strange mind-set derived its authority, as dreams and eras and cultures do, always including our own. We continue to learn how important slavery and racism have been to the construction of Western civilization, literally as well as figuratively. Without question we have vastly more to learn. Through all this it is important to remember that history that is grossly incomplete can feel coherent, sufficient, and true. The reality of history, whatever it is, is perhaps best demonstrated by the power it has when the tale it tells is false.

Read the full article in the Times Literary Supplement.

Science’s pirate queen

Ian Graber-Stiehl, The Verge, 8 February 2018

In cramped quarters at Russia’s Higher School of Economics, shared by four students and a cat, sat a server with 13 hard drives. The server hosted Sci-Hub, a website with over 64 million academic papers available for free to anybody in the world. It was the reason that, one day in June 2015, Alexandra Elbakyan, the student and programmer with a futurist streak and a love for neuroscience blogs, opened her email to a message from the world’s largest publisher: ‘YOU HAVE BEEN SUED.’

It wasn’t long before an administrator at Library Genesis, another pirate repository named in the lawsuit, emailed her about the announcement. ‘I remember when the administrator at LibGen sent me this news and said something like “Well, that’s… that’s a real problem.” There’s no literal translation,’ Elbakyan tells me in Russian. ‘It’s basically “That’s an ass.” But it doesn’t translate perfectly into English. It’s more like “That’s fucked up. We’re fucked.”’

The publisher Elsevier has over 2,500 journals covering every conceivable facet of scientific inquiry to its name, and it wasn’t happy about either of the sites. Elsevier charges readers an average of $31.50 per paper for access; Sci-Hub and LibGen offered them for free. But even after receiving the ‘YOU HAVE BEEN SUED’ email, Elbakyan was surprisingly relaxed. She went back to work. She was in Kazakhstan. The lawsuit was in America. She had more pressing matters to attend to, like filing assignments for her religious studies program; writing acerbic blog-style posts on the Russian clone of Facebook, called VKontakte; participating in various feminist groups online; and attempting to launch a sciencey-print T-shirt business.

That 2015 lawsuit would, however, place a spotlight on Elbakyan and her homegrown operation. The publicity made Sci-Hub bigger, transforming it into the largest Open Access academic resource in the world. In just six years of existence, Sci-Hub had become a juggernaut: the 64.5 million papers it hosted represented two-thirds of all published research, and it was available to anyone.

But as Sci-Hub grew in popularity, academic publishers grew alarmed. Sci-Hub posed a direct threat to their business model. They began to pursue pirates aggressively, putting pressure on internet service providers (ISPs) to combat piracy. They also took to battling advocates of Open Access, a movement that campaigns for free, universal access to research papers.

Sci-Hub provided press, academics, activists, and even publishers with an excuse to talk about who owns academic research online. But that conversation — at least in English — took place largely without Elbakyan, the person who started Sci-Hub in the first place. Headlines reduced her to a female Aaron Swartz, ignoring the significant differences between the two. Now, even though Elbakyan stands at the center of an argument about how copyright is enforced on the internet, most people have no idea who she is.

Read the full article in the Verge.

The rise of ‘digital poorhouses’

Tanvi Misra & Virginia Eubanks, CityLab, 6 February 2018

Often when we talk about new technologies, we talk about them as ‘disruptors’—things that shake up the system that we’re in right now. One of my big arguments in the book is that the tools that I’m talking about are more evolution than revolution. So that history really, really matters.

Why I start the book with a brick-and-mortar poorhouse is because it was the most innovative poverty regulation system of its time, in the 1800s. It rose out of a huge economic catastrophe—the 1819 depression—and the social movements organized by folks to protect themselves and their families. What’s really important about the poorhouse—and this is the thread that goes throughout all of the things I talk about in the book—is that it was based on this distinction between what at the time were called the ‘impotent’ and the ‘able’ poor. The ‘impotent’ poor were folks who, by reason of physical disability or age or infirmity, just couldn’t work. The ‘able’ poor were those folks who moral regulators at the time believed were probably able to work, but might just be shirking [it].

That distinction between the impotent and the able poor, which today we would talk about as ‘deserving’ and ‘undeserving’ poor, created a public assistance system that was more of a moral thermometer than a floor that was under everybody protecting their basic human rights.

I think of that as the deep social programming of all of the administrative public assistance systems that serve poor working-class communities. That social programming often shows up in invisible assumptions that drive the kind of automation of inequality that I talk about in the book.

Read the full article in CityLab.

Prowess of Nina Simone’s early records

Jason Heller, The Atlantic, 20 February 2018

Eunice Waymon was born into poverty in 1933 in Tryon, North Carolina, and the precocious singer-pianist made her way to the renowned Juilliard School in New York before adopting the stage name Nina Simone in 1954. She had grown up steeped in church music. Secular sounds, however, called to her. As she told the magazine Hit Parader, ‘We didn’t have a record player, but we had a radio and a piano, and somebody in my family was always singing or playing or dancing. Oh, I heard a lot of boogie woogie too. That killed me, because I loved to dance. I had to play that when mama was out of the house because she didn’t allow it.’ Blues, jazz, and classical music – including Simone’s beloved Bach – all found their way into her playing style. After taking on a name she felt was better suited to show business, Simone began performing in bars in Atlantic City. Her notoriety there grew, and in 1957, she signed with Bethlehem Records.