The latest (somewhat random) collection of recent essays and stories from around the web that have caught my eye and are worth plucking out to be re-read.

RSF Index 2018:

Hatred of journalism threatens democracies

Reporters Without Borders, April 2018

The climate of hatred is steadily more visible in the Index, which evaluates the level of press freedom in 180 countries each year. Hostility towards the media from political leaders is no longer limited to authoritarian countries such as Turkey (down two at 157th) and Egypt (161st), where “media-phobia” is now so pronounced that journalists are routinely accused of terrorism and all those who don’t offer loyalty are arbitrarily imprisoned.

More and more democratically-elected leaders no longer see the media as part of democracy’s essential underpinning, but as an adversary to which they openly display their aversion. The United States, the country of the First Amendment, has fallen again in the Index under Donald Trump, this time two places to 45th. A media-bashing enthusiast, Trump has referred to reporters as ‘enemies of the people’, the term once used by Joseph Stalin…

‘The unleashing of hatred towards journalists is one of the worst threats to democracies’, RSF secretary-general Christophe Deloire said. ‘Political leaders who fuel loathing for reporters bear heavy responsibility because they undermine the concept of public debate based on facts instead of propaganda. To dispute the legitimacy of journalism today is to play with extremely dangerous political fire.’

In this year’s Index, Norway is first for the second year running, followed – as it was last year – by Sweden (2nd). Although traditionally respectful of press freedom, the Nordic countries have also been affected by the overall decline. Undermined by a case threatening the confidentiality of a journalist’s sources, Finland (down one at 4th) has fallen for the second year running, surrendering its third place to the Netherlands. At the other end of the Index, North Korea (180th) is still last.

The Index also reflects the growing influence of ‘strongmen’ and rival models. After stifling independent voices at home, Vladimir Putin’s Russia (148th) is extending its propaganda network by means of media outlets such as RT and Sputnik, while Xi Jinping’s China (176th) is exporting its tightly controlled news and information model in Asia. Their relentless suppression of criticism and dissent provides support to other countries near the bottom of the Index such as Vietnam (175th), Turkmenistan (178th) and Azerbaijan (163rd).

When it’s not despots, it’s war that helps turn countries into news and information black holes – countries such as Iraq (down two at 160th), which this year joined those at the very bottom of the Index where the situation is classified as ‘very bad.’ There have never been so many countries that are coloured black on the press freedom map.

Read the full report.

The madness of our gender debate,

where feminists defend slapping a 60-year-old woman

Helen Lewis, New Statesman, 20 April 2018

The Wolf affair also demonstrates another alarming phenomenon: the left getting high on its own supply of self-righteousness. ‘Some feminists have a different conception of gender to me’ gets smudged into ‘some feminists talk about me in ways that I find offensive’ and on to ‘some feminists are basically Hitler, trying to eradicate people like me’.

Once you reach the last statement, then of course you can slap a woman and still think of yourself as a good person. She wants to kill you; a mere punch is self-defence. (I’m not exaggerating about the language. The Edinburgh branch of Action for Trans Health tweeted the day after the attack: ‘Punching TERFs is the same as punching Nazis. Fascism must be smashed with the greatest violence to ensure our collective liberation from it.’) Luckily, sanity prevailed in some corners: immediately after the attack, the trans activist Shon Faye tweeted: ‘Whether this is true or not – physical violence against women (cis or trans) even by women (cis or trans) is unacceptable.’ What’s astonishing is that anyone following the debate would know this was a brave thing for her to say.

As for the lack of reporting, there’s a simple reason. The LGBT press sees its role as a cheerleader rather than an interrogator, particularly in the age of social media, where feel-good stories travel at the speed of a Facebook share. The liberal media, too, wants every narrative to have clearly defined ‘sides’, and adjudicating between the right of trans people to protest speech they find offensive and the right of women to live their lives free from the threat of violence is, clearly, deemed to be too difficult.

Reporting the assault presumably feels too much like casting your lot in with American social conservatives, who have filled the space vacated by hysteria over gay men with hysteria over trans women. But is it really so hard to say that trans people deserve the right to live free from discrimination and abuse, but not the right to punch women with whom they disagree?

What led to the attack in the first place? A group of women had gathered to discuss proposed changes to the Gender Recognition Act and the Equality Act, which will allow everyone to ‘self-define’ their gender, rather than going through a drawn-out process requiring a medical diagnosis. Many of the feminists opposing the reform regard me as a rank collaborator, because I agree that it is possible for men to become women and vice versa. Mysteriously, that doesn’t stop the other side calling me a TERF. All this proves is that the word is meaningless, even before the likes of Tara Wolf casually redefine it to suggest that feminists want to exterminate them.

Read the full article in the New Statesman.

James Comey sees himself as a victim of Trump.

He refuses to see the victims of the justice system.

Peter Maass, The Intercept, 18 April 2018

James Comey portrays himself as a law enforcement saint who desires only the best for us, and the best is manifestly not President Donald Trump. But if you read ‘A Higher Loyalty’ with more than its anti-Trump morsels in mind, a less benevolent version of Comey emerges — akin to the King George character in ‘Hamilton,’ who lovingly sings to his rebellious American subjects, ‘And when push comes to shove/ I will send a fully armed battalion/ To remind you of my love.’

Most stories about Comey’s best-selling memoir have focused on its Hamlet-like agonizing over his potential role in tilting the 2016 election, and his horror at discovering that Trump, once in the White House, was as thuggishly corrupt as the mafia dons Comey had prosecuted before he led the FBI and got fired by Trump. But Comey’s insistence on upholding the law is devotional to the point of ruthlessness, as he makes clear when explaining the need to send Martha Stewart to jail in 2003 for lying about an insider stock tip she had received.

‘People must fear the consequences of lying in the justice system or the system can’t work,’ Comey writes. ‘There was once a time when most people worried about going to hell if they violated an oath taken in the name of God. That divine deterrence has slipped away from our modern cultures. In its place, people must fear going to jail. They must fear their lives being turned upside down. They must fear their pictures splashed on newspapers and websites. People must fear having their names forever associated with a criminal act if we are to have a nation with the rule of law.’

This is ridiculous and dangerous, because it suggests Americans are insufficiently cowed by the necessarily God-like wrath of the machinery of law enforcement. Comey is worried that the country risks degenerating into criminality and sloth — and that all that’s standing between us and chaos is the FBI’s lash and our submission to it. Rather than separating himself from the Trump administration’s extremism, Comey sounds much like retired Gen. John Kelly, the White House chief of staff who recently bemoaned the lost discipline of an earlier and supposedly sunnier era.

‘You know, when I was a kid growing up, a lot of things were sacred in our country,’ Kelly said during a bitter press conference, in which he tried to smear a member of Congress who had accurately reported that a grieving war widow was offended by comments Trump made in a fumbled condolence call. ‘Women were sacred, looked upon with great honor. That’s obviously not the case anymore, as we see from recent cases. Life — the dignity of life — is sacred. That’s gone. Religion, that seems to be gone as well.’

Like Kelly, Comey frames his blinkered nostalgia as public virtue, and he’s largely succeeding: His book has been lavishly and warmly received. Comey is certainly right about the danger of Trump, but that doesn’t mean he’s right about other things. For instance, he shows minimal concern for the police killings of black men and the protest movement that’s grown out of them. He seems unable to believe that poor and minority communities have a fair case against the way law enforcement has been practiced on them.

Read the full article in The Intercept.

The vetting files: How the BBC kept out ‘subversives’

Paul Reynolds, BBC News, 22 April 2018

Vetting was brought into play once a candidate and one or two alternatives labelled ‘also suitable’ had been selected for a job. The alternatives served a useful purpose. If the first choice was barred by vetting, the appointments board moved easily on to the second. The candidates were told only that ‘formalities’ would be carried out before an appointment was made. This sounded harmless enough; it would allow time to follow up references, perhaps. Candidates did not know that ‘formalities’ meant vetting – and was, in fact, the code word for the whole system.

A memo from 1984 gives a run-down of organisations on the banned list. On the left, there were the Communist Party of Great Britain, the Socialist Workers Party, the Workers Revolutionary Party and the Militant Tendency. By this stage there were also concerns about movements on the right – the National Front and the British National Party. A banned applicant did not need to be a member of these organisations – association was enough…

If staff came under suspicion only after they had been employed by the BBC or applied for transfer to a job that needed vetting, an image resembling a Christmas tree was drawn on their personal file.

This ‘tree’ was an important part of the process. The BBC maintained a ‘Staff Transfer List’ which named staff who needed to be checked if they were to be promoted. A tree added to the file alerted the administration that this was a security case. Also written on to their file was a so-called ‘Standing Reminder’. This stated: ‘Not to be promoted or transferred (or placed on continuous contract) without reference to [Director of Personnel].’

So keen was the BBC to maintain secrecy that it secretly removed the Standing Reminder from someone’s file if they went to an Industrial Tribunal, which had the power to call for personal files. It was also agreed to (misleadingly) explain away a stamp on a file saying ‘Normal Appointments Formalities Completed’ by pretending that it referred to ‘routine procedures, Next of Kin, Pension etc’.

Read the full article on BBC News.

In defence of the bad, white working class

Shannon Burns, Meanjin, Winter 2017

As an aspirational teenage lumpen, I learned to embrace a working-class ethos. It was a simple, experiential lesson: whenever I allowed myself to feel like a victim, I fell into paralysis and deep poverty; whenever I took pride in my capacity to work and endure, things got slightly better. One world view worked; the other didn’t.

Even if I was wronged or oppressed or marginalised, claiming victim status seemed absurd (since I often came across people who were more unfortunate than me), limiting (since there were other, enriching aspects of life to focus on), humiliating (because in the working-class world self-pity is reviled), and self-defeating (because if you allow yourself to think and behave like a victim, you quickly fall into lumpen despair).

At university, I discovered that this ethos didn’t apply. A season of despair would not send middle-class teens spiralling into a life of drug-addled indigence; they could simply brush themselves off and enrol again next year. Strong, class-enforced safety nets meant that self-pity could be accommodated, and victimhood could even form part of a functional identity.

Indeed, the willingness to expose your wounds is another sign of privilege. Those for whom injury has a use-value will display their injuries; those for whom woundedness is a survival risk, won’t. As a consequence, middle-class grievances now drown out lower class pain. This is why the wounded lower classes come to embrace conservative discourses that ridicule middle-class anguish. Those who cannot afford to see themselves as disadvantaged are instinctively repulsed by those who harp on about disadvantage.

Language is another site of class-conflict. I grew up in violent environments. For people like me, ‘symbolic violence’ or ‘offensive speech’ were, if anything, a benign alternative to real violence and real hate. It was often registered as a joke—or yes, banter—because we understood its relative harmlessness. When I first came across someone who reacted to something that was said to him as though something had been done to him, I thought he was insane. But he wasn’t. He was from a lower middle-class family and was unfamiliar with our habits of speech. He’d never been beaten, so the words felt ‘violent’ enough for him to react in a way that was, in our environment, laughable.

Read the full article in Meanjin.

Free speech and Nazi dogs

David Baddiel, Times Literary Supplement, 10 April 2018

On a podcast with the comedian Ricky Gervais, we discussed the fact that Meechan ‘could have come up with a different cue’. Yes, he could. If all he wanted to do was make his cute pug into a Nazi, he could just have said ‘Sieg Heil’ (he does in fact, once), which is less offensive. But the comedian in me was prepared to overlook that; in fact, to accept that going further was an artistic decision: Meechan chose words more obviously offensive in order to make the joke more extreme – and therefore funnier. As long as one still believes his intent was comic, and nothing else, it makes sense.

But the judge disagreed, stating that ‘the context was irrelevant’. To a comedian this seems odd: if context is irrelevant, then Sacha Baron Cohen should immediately be arrested for posting a video of himself singing the song ‘Throw the Jew Down the Well’ – never mind that he is playing the character of Borat, an anti-Semitic idiot. That’s where the issue lies for comedians in this judgment: any joke that involves taking on the character and voice of something you despise, in order to subvert it, is threatened.

That said, not many comedians spoke up for Meechan after he was found guilty. There was a discomfort around the whole thing, less perhaps about the joke than about who the teller was. Meechan didn’t help his case by turning to various liberal folk devils, including the British provocateur Katie Hopkins and the former leader of the English Defence League Tommy Robinson.

Read the full article in the Times Literary Supplement.

Palantir knows everything about you

Peter Waldman, Lizette Chapman & Jordan Robertson,

Bloomberg, 19 April 2018

Palantir began work with the LAPD in 2009. The impetus was federal funding. After several Sept. 11 postmortems called for more intelligence sharing at all levels of law enforcement, money started flowing to Palantir to help build data integration systems for so-called fusion centers, starting in L.A. There are now more than 1,300 trained Palantir users at more than a half-dozen law enforcement agencies in Southern California, including local police and sheriff’s departments and the Bureau of Alcohol, Tobacco, Firearms and Explosives.

The LAPD uses Palantir’s Gotham product for Operation Laser, a program to identify and deter people likely to commit crimes. Information from rap sheets, parole reports, police interviews, and other sources is fed into the system to generate a list of people the department defines as chronic offenders, says Craig Uchida, whose consulting firm, Justice & Security Strategies Inc., designed the Laser system. The list is distributed to patrolmen, with orders to monitor and stop the pre-crime suspects as often as possible, using excuses such as jaywalking or fix-it tickets. At each contact, officers fill out a field interview card with names, addresses, vehicles, physical descriptions, any neighborhood intelligence the person offers, and the officer’s own observations on the subject.

The cards are digitized in the Palantir system, adding to a constantly expanding surveillance database that’s fully accessible without a warrant. Tomorrow’s data points are automatically linked to today’s, with the goal of generating investigative leads. Say a chronic offender is tagged as a passenger in a car that’s pulled over for a broken taillight. Two years later, that same car is spotted by an automatic license plate reader near a crime scene 200 miles across the state. As soon as the plate hits the system, Palantir alerts the officer who made the original stop that a car once linked to the chronic offender was spotted near a crime scene.

The platform is supplemented with what sociologist Sarah Brayne calls the secondary surveillance network: the web of who is related to, friends with, or sleeping with whom. One woman in the system, for example, who wasn’t suspected of committing any crime, was identified as having multiple boyfriends within the same network of associates, says Brayne, who spent two and a half years embedded with the LAPD while researching her dissertation on big-data policing at Princeton University and who’s now an associate professor at the University of Texas at Austin. ‘Anybody who logs into the system can see all these intimate ties,’ she says. To widen the scope of possible connections, she adds, the LAPD has also explored purchasing private data, including social media, foreclosure, and toll road information, camera feeds from hospitals, parking lots, and universities, and delivery information from Papa John’s International Inc. and Pizza Hut LLC.

Read the full article in Bloomberg.

When terrorists and criminals govern better than governments

Shadi Hamid, Vanda Felbab-Brown & Harold Trinkunas,

Atlantic, 4 April 2018

To put it more simply, its governing ideology aside, the Islamic State would not have been able to govern large swathes of Syria and Iraq if there had been a legitimate, responsive state there already governing. Within the broad category of ‘governance,’ there is a common thread that sticks out across regions, religions, and cultures: the demand for justice, even if it is of the ‘rough and ready’ variety. Order and security are what residents demand most, at least at first. Even when local orders are brutal, they can offer the swift and predictable dispute resolution that populations seek.

In Afghanistan, conflicts over land and water and tribal feuds have escalated after the end of the Taliban regime as a result of weak institutional rule and power usurpation. The post-Taliban formal courts have not been able to stop or resolve such conflicts. Worse, the courts became corrupt and themselves a tool of land expropriation, something that worsened during the presidency of Hamid Karzai. The Taliban has moved to fill the gap by providing free mediation of tribal, criminal, and personal disputes. Afghans report a great degree of satisfaction with Taliban verdicts, unlike those of the official justice system, where petitioners often have to pay considerable bribes.

The Taliban’s code of conduct – the so-called Taliban La’iha – is designed both to maintain control of the Taliban rank-and-file and to limit the emergence of rogue elements. The Quetta Shura of the Taliban, one of its key leadership groups now based in Quetta, Pakistan, has even established teams to travel throughout Afghanistan and uncover complaints from local populations against the Taliban—about corruption, brutality, or other mistreatment. It has also distributed phone numbers throughout the country for reporting abuses. How much the local population trusts this reporting system and actually experiences any redress of grievances varies considerably, of course. In practice, the population is intimidated and abused by Taliban fighters and officials. Still, it says something that the Taliban feels it stands to gain from establishing and advertising such a system in the first place.

Similarly, the Islamic State went about building a distinctive legal order, allowing it to justify violence, regulate economic transactions, and reduce crime and corruption. In short order, with the international community paying little attention, it set up fairly elaborate institutional structures, oriented around interlocking sharia courts, binding fatwas, and detailed tax codes. This was what Yale University’s Andrew March and Mara Revkin term ‘scrupulous legality.’ Distinguishing itself in governance terms was not particularly difficult, since the bar was so low; the other choices were the chaotic rule of other rebel groups consumed by infighting, the mass murder of the Assad regime, or the sectarianism of the Iraqi government and Shia militias.

Read the full article in the Atlantic.

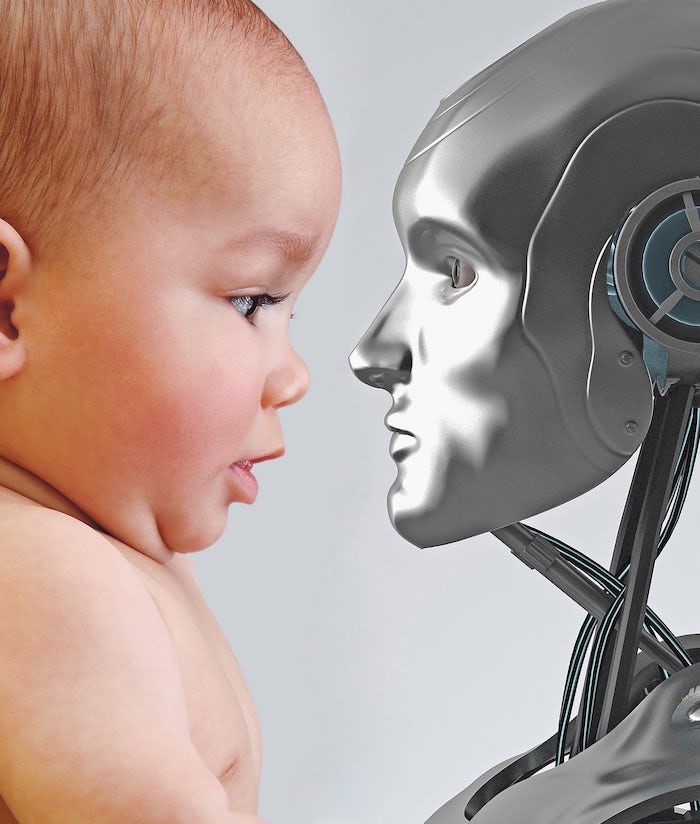

How babies learn – and why robots can’t compete

Alex Beard, Guardian, 3 April 2018

The first time his son uttered something that wasn’t just babble, Roy was sitting with him looking at pictures. ‘He said ‘fah’,’ Roy explained, ‘but he was actually clearly referring to a fish on the wall that we were both looking at. The way I knew it was not just coincidence was that right after he looked at it and said it, he turned to me. And he had this kind of look, like a cartoon lightbulb going off – an ‘Ah, now I get it’ kind of look. He’s not even a year old, but there’s a conscious being, in the sense of being self-reflective.’ ‘I guess, putting on my AI hat, it was a humbling lesson,’ he continued. ‘A lesson of like, holy shit, there’s a lot more here.’

Roy was no longer sure you could bring a robot up like a real human – or that we should even try. It didn’t seem there was much to gain by developing robots that took exactly one human childhood to become exactly like one young adult human. That’s what people did. And that was before you got into imagination or emotions, identity or love – things that were impossible for Toco. Watching his son, Roy had been blown away by ‘the incredible sophistication of what a language learner in the flesh actually looks like and does’. Infant humans didn’t only regurgitate; they created, made new meaning, shared feelings.

The learning process wasn’t decoding, as he had originally thought, but something infinitely more continuous, complex and social. He was reading Helen Keller’s autobiography to his kids, and had been struck by her epiphany at understanding language for the first time. Deaf and blind after an illness in infancy, Keller was seven years old when she got it. ‘Suddenly I felt a misty consciousness as of something forgotten,’ she wrote, ‘a thrill of returning thought; and somehow the mystery of language was revealed to me. I knew then that ‘w-a-t-e-r’ meant the wonderful cool something that was flowing over my hand. That living word awakened my soul, gave it light, hope, joy, set it free! Everything had a name, and each name gave birth to a new thought. As we returned to the house every object which I touched seemed to quiver with life.’

Roy had recently started working with Hirsh-Pasek, following her insight that machines might augment learning between humans, but would never replace it. He had discovered that human learning was communal and interactive. For a robot, the acquisition of language was abstract and formulaic. For us, it was embodied, emotive, subjective, quivering with life. The future of intelligence wouldn’t be found in our machines, but in the development of our own minds.

Read the full article in the Guardian.

What is empathy?

Andrew Scull, Times Literary Supplement, 10 April 2018

Concerned not to be trapped in an ethnocentric, purely Western mental universe, Stuurman ranges widely across different civilizations. Homer and Confucius, Herodotus and Ibn Khaldun, the Judaeo-Christian and the Islamic traditions, and the major thinkers of the European Enlightenment, are all invoked to unpack how our common humanity came to be imagined and invented. So, too, are less obvious figures such as travellers and ethnographers, as well as anthropologists of various stripes. Attention to the language, attitudes and behaviour of European colonizers is matched by an attempt to recover the sensibilities and reactions of the colonized. The American and French Revolutions sit astride an examination of the Haitian Revolution, and discussion of national liberation movements in the developing world is paired with the African American struggle for equality in the United States.

At times, the exposition can seem a laboured and dutiful recital and precis of different strands of social thought. Overall, however, the intelligence and learning of the author shine through, as does his constant attention to the blind spots and limitations of the thinkers whose work he examines. This is not contextual history. Stuurman wants to mine the past, to recover what he thinks is valuable in different intellectual traditions, to look for commonalities and points of difference, and to search out the problems and persistent inequalities that often lay below the surface of seeming assaults on inequality. Telling here are his critiques, for example, of Enlightenment ideologues and their limitations. ‘All men’ – but what about women? And what about those who lacked the qualities of enlightened gentlemen? ‘Savages’ were fellow human beings, who shared the capacity to reason, but had developed it in only rudimentary form. Egalitarianism would be realized in the future, but only once ‘advanced’ societies had tutored the backward, and brought them into the modern world (that is, remade them in their own image). Here Stuurman elaborates on how arranging the history of humanity in a temporal sequence engendered a new form of inequality, and thus provided a novel way of combining a claim of human equality with continued unequal treatment of some people: temporal inequality meant that one could acknowledge ‘the equality of non-Europeans as generic human beings but downgrade them as imprisoned in primitive and backward cultures’.

In the nineteenth century, such notions justified imperialism by reference to its ‘civilizing mission’, no matter the rapacious realities of colonial rule. Or in the twentieth century, they could be employed to justify authoritarian, dictatorial and murderous regimes. For their leaders were the enlightened, the vanguard more advanced than hoi polloi, and tasked with overcoming the false consciousness of the masses and leading them to the promised egalitarian paradise. Yet the Enlightenment, Stuurman insists, was Janus-faced. Though its ideas were mobilized to underwrite the ‘scientific racism’ of the nineteenth century and the vanguardism of the Leninists and Maoists of the twentieth, it can be seen as giving a decisive boost to the idea that we possess a common humanity, and to efforts to criticize and supersede a whole array of social inequalities. Indeed, it was on the discourses of the Enlightenment that the critics of racism, authoritarianism, and the subordination of women drew to construct their intellectual assaults on these structures, as he acknowledges others have previously grasped.

Read the full article in the Times Literary Supplement.

Here’s why tech companies abuse our data:

because we let them

Brett Frischmann, Guardian, 10 April 2018

We live in an e-commerce utopia. I can call out orders and my demands are satisfied through an automated, seamless transaction. I just have to ask Alexa, or Siri, or one of the other digital assistants developed by Silicon Valley firms, who await the commands and manage the affairs of their human bosses.

We’re building a world of frictionless consumerism. To see the problems with it requires you to stop and think, which itself runs against the grain of this technology. Utopia is in the eye of the beholder. One person’s utopia is another’s dystopia. Take the obese humans in Pixar’s Wall-E: never having to move from their mobile chairs with screens they use to get their food delivered to them. The point is not that Alexa will make us obese. Rather, it’s that intelligent technology can manage much more than isolated purchase orders of books, or the devices and systems in our homes. It can manage our lives, who we are and are capable of being, for better or worse.

Silicon Valley’s pursuit of frictionless transactions in our modern digital networked environment is relentless. It has given rise to surveillance capitalism – the extraction and monetisation of our data – and the creeping use of such data to ‘scientifically’ manage most facets of our lives, in order to efficiently deliver cheap bliss and convenience.

Yet there is much more to being human than the pursuit of such shallow forms of happiness. Flourishing human beings need some friction in their decision-making. Friction is resistance; it slows things down. And in our hyper-rich, fast-paced, attention-deprived world, we need opportunities to stop and think, to deliberate and even second-guess ourselves and others. This is how we develop the capacity for self-reflection; how we experiment, learn and develop our own beliefs, tastes and preferences; how we exercise self-determination. This is our free will in action.

Read the full article in the Guardian.

Canary Wharf: life in the shadow of the towers

Jane Martinson, Observer, 8 April 2018

The house I lived in, growing up on London’s Isle of Dogs, was beside a huge gate and high walls – impenetrable barriers even to the naughty kids who wanted to explore the docks beyond. My friends and I remember glimpses of watery wastelands as we walked off the Thames peninsula that we all called ‘the Island’, on rickety bridges whose gaps and creaks featured in our nightmares.

When, 30 years ago this month, Margaret Thatcher drove the first pile for Canary Wharf and promised a land of opportunity, those high walls came down. But by the time its celebrated pyramid roof was placed on One Canada Square in 1991, Thatcher had been ousted and the London commercial property market had collapsed. It was quite possibly the worst time to launch and, within a year, Olympia and York, the company charged with making the neoliberal dream of turning redundant docks into the reality of a gleaming financial citadel, filed for bankruptcy.

But now, Canary Wharf provides as many jobs as the docks did when they acted as a linchpin between the City of London and the global trade of the British empire. Canary Wharf’s first building, the second tallest in the UK after the Shard, is now as much a part of London’s skyline as the Empire State in New York and the area, with a further massive expansion already under way, is now more Wall Street than wasteland.

Yet the past three decades have not only tracked the boom and bust of the UK economy but have left the Isle of Dogs a microcosm of the social revolution that has changed the face of Britain. At the same time, the extremes of income inequality – from great wealth to desperate deprivation – have revealed social tensions that bedevil the country as a whole.

My mum and sisters still live on the island and I go back all the time; when the development first started, I had just gone to university and each holiday brought a new road network to negotiate and a new building site to discuss. I thought things could only get better for everyone living there, yet it took the surprise of Brexit to make me realise that the reality has been very different.

Local deprivation levels are among the worst in the country and a sense of powerlessness, which may have started as a reaction to the unaccountable regeneration scheme, now seems more widespread. The transport, shopping and leisure opportunities might have been revolutionised, but for a surprising number living there, Canary Wharf appears just as impenetrable as the docks were when I was a child.

Read the full article in the Observer.

The great Chinese dinosaur boom

Richard Conniff, Smithsonian Magazine, May 2018

For paleontologists, the value of Liaoning fossils lies not just in the extraordinarily preserved details but also in the timing: They’ve opened a window on the moment when birds broke away from other dinosaurs and evolved new forms of flight and ways of feeding. They reveal details about most of the digestive, respiratory, skeletal and plumage adaptations that transformed the creatures from big, scary meat-eating dinosaurs to something like a modern pigeon or hummingbird.

‘When I was a kid, we didn’t understand those transitions,’ says Matthew Carrano, the curator of dinosauria at Smithsonian’s National Museum of Natural History. ‘It was like having a book with the first chapter, the fifth chapter and the last ten chapters. How you got from the beginning to the end was poorly understood. Through the Liaoning fossils, we now know there was a lot more variety and nuance to the story than we would have predicted.’

These transitions have never been detailed in such abundance. The 150-million-year-old Archaeopteryx has been revered since 1861 as critical evidence for the evolution of birds from reptiles. But it’s known from just a dozen fossils found in Germany. By contrast, Liaoning has produced so many specimens of some species that paleontologists study them not just microscopically but statistically.

‘That’s what’s great about Liaoning,’ says Jingmai O’Connor, an American paleontologist at Beijing’s Institute of Vertebrate Paleontology and Paleoanthropology (IVPP). ‘When you have such huge collections, you can study variation between species and within species. You can look at male-female variation. You can confirm the absence or presence of anatomical structures. It opens up a really exciting range of research topics not normally available to paleontologists.’

But the way fossils get collected in Liaoning also jeopardizes research possibilities. O’Connor says it’s because it has become too difficult to deal with provincial bureaucrats, who may be hoping to capitalize on the fossil trade themselves. Instead, an army of untrained farmers does much of the digging. In the process, the farmers typically destroy the excavation site, without recording such basic data as the exact location of a dig and the depth, or stratigraphic layer, at which they found a specimen. Unspectacular invertebrate fossils, which provide clues to a specimen’s date, get cast aside as worthless.

As a result, professional paleontologists may be able to measure and describe hundreds of different Confuciusornis, a crow-sized bird from the Early Cretaceous. But they have no way to determine whether individual specimens lived side by side or millions of years apart, says Luis Chiappe, who directs the Dinosaur Institute at the Natural History Museum of Los Angeles County. That makes it impossible to track the evolution of different traits – for instance, Confuciusornis’ toothless modern bird beak – over time.

Read the full article in the Smithsonian Magazine.

Our obsession with Aung San Suu Kyi blinds us

to the deeper causes of the Rohingya tragedy

Faisal Devji, Prospect, 16 March 2018

Even those of us who refuse to excuse her silence about—or toleration of—the violence perpetrated by Burmese civilians and soldiers in Rakhine are compelled to consider whether our focus on Suu Kyi blinds us to the most basic understanding of the situation. Why, for example, do their enemies refer to the Rohingya primarily as Bengalis rather than, as the international media repeatedly does, as Muslims?

The name Bengali signals more than an attempt to classify the Rohingya as foreigners or ‘illegal’ immigrants. Displacing terms like ‘Muslim’ and even ‘Bangladeshi’ for a religiously neutral one, it emerges from a longer history of Burmese racism and xenophobia going back to colonial times.

This former Buddhist kingdom had been conquered and incorporated into British India in the middle of the 19th century, and its long-established Hindu and Muslim populations joined by Indian newcomers.

Burma’s large population of Hindu and Muslim traders, soldiers, administrators and labourers, many from the neighbouring Indian state of Bengal, became the objects of nationalist hatred, and were attacked in riots through the 1930s until they were finally expelled to India and what was then East Pakistan – now Bangladesh – in 1962. Even today in Burma, Indians, Bangladeshis and other South Asians are referred to by the popular slur ‘kala,’ an Indian-derived word that means ‘black’ but also ‘foreigner,’ without regard to religion or nationality.

Targeting the Rohingya, in other words, is part of a complex history, in which a single regional and religious identity has taken centre stage in recent years. But it is precisely the complexity of this history that makes it erratic, because its violence is capable of shifting from one group to another. At the moment, for instance, non-Rohingya Muslims in Burma are not subject to the same kind of persecution as their co-religionists in Rakhine. For the latter combine two perceived threats – of ‘foreign’ immigrants, who may also include Hindus, and ‘secessionist’ minorities, including Christians and other groups.

Just as solely focusing on Suu Kyi blinds us to the history of Burmese nationalism, so, too, does focusing on the religious identity of the Rohingya. Such an emphasis on their religion, paradoxically, pleases their Muslim supporters internationally because it resolves the Burmese conflict into a neat global stereotype – as nothing more than an example of Islamophobia.

Read the full article in Prospect.

End of the American dream?

The dark history of ‘America first’

Sarah Churchwell, Guardian, 21 April 2018

The slogan appears at least as early as 1884, when a California paper ran ‘America First and Always’ as the headline of an article about fighting trade wars with the British. The New York Times shared in 1891 ‘the idea that the Republican Party has always believed in’, namely: ‘America first; the rest of the world afterward’. The Republican party agreed, adopting the phrase as a campaign slogan by 1894.

A few years later, ‘See America First’ had become the ubiquitous slogan of the newly burgeoning American tourist industry, one that adapted easily as a political promise. This was recognised by an Ohio newspaper owner named Warren G Harding, who successfully campaigned for senator in 1914 under the banner ‘Prosper America First’. The expression did not become a national catchphrase, however, until April 1915, when President Woodrow Wilson gave a speech defending US neutrality during the first world war: ‘Our whole duty for the present, at any rate, is summed up in the motto: “America First”.’

American opinion was deeply divided over the war; while many decried what was widely perceived as a baldly nationalist venture by Germany, there was plenty of anti-British sentiment, too, especially among Irish-Americans. American neutrality was by no means always motivated by pure isolationism; it mingled pacifism, anti-imperialism, anti-colonialism, nationalism and exceptionalism as well. Wilson was delivering the ‘America first’ speech with his eye on a second presidential term: ‘America first’ should not be understood ‘in a selfish spirit’, he insisted. ‘The basis of neutrality is sympathy for mankind.’

The phrase was rapidly taken up in the name of isolationism, however, and by 1916 ‘America first’ had become so popular that both presidential candidates used it as a campaign slogan. When the US joined the war in 1917, ‘America first’ was transposed into a jingoistic motto; after the war, it slipped back into isolationism. In the summer of 1920, Senator Henry Cabot Lodge delivered a keynote speech at the Republican National Convention, denouncing the League of Nations in the name of ‘America first’. Harding secured the Republican nomination and promptly sailed to victory that November using the slogan, which his administration would invoke ceaselessly before it collapsed amid the ruins of the US’s greatest political bribery scandal to date.

By 1920, ‘America first’ had joined forces with another popular expression of the time, ‘100% American’, and both soon functioned as clear codes for nativism and white nationalism. It is impossible to grasp the full meaning of ‘100% American’ without recognising the legal and political force of eugenicist ideas about percentages in the United States. The so-called ‘one-drop rule’ – which said that one drop of ‘Negro blood’ made a person legally black – was the foundation of slavery and miscegenation laws in many states, used to determine whether an individual should be enslaved or free. The logic of the one-drop rule extended from the notorious three-fifths compromise in the constitution, which counted slaves as three-fifths of a person. Declaring someone 100% American was no mere metaphor in a country that measured people in percentages and fractions, in order to deny some of them full humanity.

Read the full article in the Guardian.

In 2018, we can all learn from

the Open University’s radical roots

Charlotte Lydia Riley, Prospect, 3 April 2018

The OU was revolutionary not only because it tried to change the assumption that adult education was somehow lesser, or that university education was only for the elite, but because it took some of the most cutting-edge educational technology and made it available to ordinary citizens. It wasn’t limited to vocational education (although this absolutely had a place within the OU; in fact, it is still the biggest provider of law degrees in the country). People could also study chemistry, art history, psychology.

By 4 August 1970, the closing date for prospective students to apply, the new university had received some 42,000 applications for 25,000 places. In January 1971 the first students started their courses. Over 30 per cent of them had less than two A levels or equivalent—the minimum qualification for entry to other British universities. 22 of the students were prisoners, starting a long relationship between the prison service and the OU. Quickly, academics like Stuart Hall, Asa Briggs and Doreen Massey would help to establish the OU as an important centre for research, as well as teaching.

When she laid the stone for the first OU library in Milton Keynes in 1973, Lee proclaimed that they had created ‘a great independent university which does not insult any man or any woman whatever their background by offering them the second best: nothing but the best is good enough.’

Today, the OU is the biggest academic institution in the UK, and one of the largest universities in the world; since it was begun, more than 2 million students have studied on its courses. Horrocks’ comments that his lecturers were not doing ‘real’ teaching were seen as such a betrayal not only because the institution had spent so long patiently fighting against precisely this allegation, but because they revive the hierarchical, dismissive, divisive attitude that the university he leads was created to erase.

The OU matters, not just because of what it does (and it does a lot), but because of what it represents. It was created by Labour in the 1960s because they believed that a quality university education was a right of democratic citizenship. The Open University was, and at its best still is, a way of challenging traditional assumptions about education: about who should be educated, and why, and how. Cutting it sends a message that these ideas are no longer important, at a time when they could not be more so. As university staff fight not only for their pensions, but for the values at the heart of higher education and research, we must not allow the OU and what it represents to be forgotten.

Read the full article in Prospect.

The death drive revisited: On Olivier Roy’s ‘Jihad and Death’

Suzanne Schneider, LA Review of Books, 2 April 2018

Perhaps most refreshingly, Jihad and Death resists the urge to present a ‘vertical’ approach to the subject — which would narrate a genealogy of jihad with predictable textual pit stops at Qur’an 9:5, Ibn Taymiyya, Sayyid Qutb, et al. — and instead chooses to situate jihad ‘alongside other forms of violence and radicalism that are very similar to it,’ ranging from mass shootings and generational revolt to doomsday cults. As he argues, it ‘is too often forgotten that suicide terrorism and phenomena such as al-Qaeda and ISIS are new in the history of the Muslim world, and cannot be explained simply by the rise of fundamentalism.’ In lieu of yet another attempt to unravel what the Qur’an ‘really’ says, Roy offers the reader a sociological analysis that zeroes in on the centrality (and novelty) of death within the new jihad. ‘What fascinates,’ he argues, ‘is pure revolt, not the construction of a utopia. Violence is not a means. It is an end in itself.’

Roy is most original when discussing these links between jihadism and other forms of youth culture and revolt, linking the self-performance element evident among many militants to video game heroes and a broader aestheticization of violence. And in a welcome addition to literature on Islamic violence, he draws explicit parallels between ‘our’ violence and ‘theirs,’ noting that the ‘boundaries between a suicidal psychopath and a militant for the caliphate’ have grown increasingly hazy. Particularly in the wake of mass shootings in the United States whose perpetrators have claimed allegiance to ISIS — such as those in San Bernardino or at the Pulse nightclub — the distinction between ‘jihadis’ and run-of-the-mill homegrown terrorists has become harder to sustain.

The conclusions Roy advances in Jihad and Death are based on a database of approximately 140 individuals ‘involved in terrorism in mainland France and/or having left France to take part in a ‘global’ jihad between 1994 and 2016.’ While there is no singular terrorist biography, there are recurrent characteristics: second-generation immigrants or converts with backgrounds featuring petty crime and prison stays, and often seemingly well integrated into secular culture. Crucially, among the group he analyzed, radicalization almost never occurs in the framework of a religious organization. This finding leads to one of the more compelling aspects of Roy’s text, namely his attention to sites of radicalization that sit outside of the usual circuits of mosque and social media. In fact, he argues that ‘combat-sports clubs are more important than mosques in jihadi socialization,’ drawing on examples like that of a group of Portuguese converts who joined ISIS and whose bond was solidified in a Thai boxing club.

Roy’s claims have been corroborated recently by those working to combat radicalization in European contexts, such as Usman Raja, who runs a mixed martial arts (MMA) gym geared toward reintegrating former militants. ‘The biggest thing these extremists get from it is community,’ he stated in a recent New York Times profile, which notes that Raja’s approach has been statistically more successful than typical programs focused around religious reeducation. Convincing militants that the Qur’an ‘really’ says something other than what they have heard in ISIS recruiting videos is largely irrelevant if, as Roy claims, they are not all that interested in Qur’anic exegesis.

Read the full article in the Los Angeles Review of Books.

How many genes do cells need?

Maybe almost all of them

Veronique Greenwood, Quanta Magazine, 19 April 2018

About 20 years ago, Charles Boone and Brenda Andrews decided to do something slightly nuts. The yeast biologists, both professors at the University of Toronto, set out to systematically destroy or impair the genes in yeast, two by two, to get a sense of how the genes functionally connected to one another. Only about 1,000 of the 6,000 genes in the yeast genome, or roughly 17 percent, are considered essential for life: If a single one of them is missing, the organism dies. But it seemed that many other genes whose individual absence was not enough to spell the end might, if destroyed in tandem, sicken or kill the yeast. Those genes were likely to do the same kind of job in the cell, the biologists reasoned, or to be involved in the same process; losing both meant the yeast could no longer compensate.

Boone and Andrews realized they could use this idea to figure out what various genes were doing. They and their collaborators went about it deliberately, by first generating more than 20 million strains of yeast that were each missing two genes — almost all of the unique combinations of knockouts among those 6,000 genes. The researchers then scored how healthy each of the double mutant strains was and investigated how the missing genes could be related. The results let the researchers sketch a map of the shadowy web of interactions that underlie life. Two years ago, they reported the details of the map and revealed that it had already allowed researchers to discover previously unknown roles for genes.

Along the way, however, they realized that a surprising number of genes in the experiment didn’t have any obvious interactions with others. ‘Maybe, in some cases, deleting two genes isn’t enough,’ Andrews said, reflecting on their thoughts at the time. Elena Kuzmin, a graduate student in the lab who is now a postdoc at McGill University, decided to go one step further by knocking out a third gene.

Read the full article in Quanta Magazine.

The island where France’s colonial legacy lives on

Maddy Crowell, Atlantic, 21 April 2018

But for some, the very idea of a slavery memorial in Guadeloupe is an odd gesture. Nearly three-quarters of the 405,000 people living on the island descend from West African slaves, but many have little connection to their ancestry. When slavery ended, former slaves were declared French citizens—yet no official record of their ancestors’ arrival to the island exists. It was as if history had been wiped clean, plunging Guadeloupean society into a ‘cultural amnesia,’ as Jacques Martial, a French actor who is currently the chairman of Memorial ACTe, put it. ‘Everyone wanted to forget the past after 1848, and nobody could. Guadeloupeans were saying, “Enough is enough. We cannot go forward and forget our ancestors.”’

Yet Memorial ACTe, which today receives up to 300,000 visitors a year—nearly all of them foreign—has been a source of controversy since its inauguration on May 10, 2015. On that day, François Hollande, then the president of France, toured the memorial and declared that ‘France is able to look at its history because France is a great country that is not afraid of anything—especially not of itself.’ But outside the memorial, the mood was anything but reflective. Protestors had gathered, chanting: ‘Guadeloupe is ours, not theirs!’ Most of them regarded the presence of a French president, especially one inaugurating a slavery memorial, as an extension of France’s colonial legacy. Others demanded not a memorial, but reparations: Most of the cost of the memorial had been paid out of local tax revenue, according to the European Commission—a steep price in a place where the average salary is less than 1,200 euros a month. For many Guadeloupeans, the memorial offered France an out, a way of exonerating itself from the bloody legacy of a 200-year slave trade without grappling with the past, as Eli Domota, the leader of the labor union Liyannaj Kong Pwofitasyon (LKP), or Alliance Against Profiteering, told me.

Sidestepping the past also appeared to be the preference of Emmanuel Macron, the current president of France. Last November on a trip to Burkina Faso, another former French colony, he gave a speech in which he argued that France’s imperial history should not shape his government’s current relationship with the country. ‘Africa is engraved in French history, culture and identity. There were faults and crimes, there were happy moments, but our responsibility is to not be trapped in the past,’ he said. On a trip in December to Algeria, another former colony, Macron visited President Abdelaziz Bouteflika and urged the country’s youth ‘not to dwell on past crimes.’ In March, he said that French should be the official language of Africa, because it is the ‘language of freedom.’ His first and only visit to Guadeloupe came after Hurricane Irma, when he pledged that France would pay 50 million euros of aid and provide Guadeloupeans with free flights to France. But locals criticized his visit, saying that white tourists were given priority access to emergency supplies. Macron has not visited the Caribbean since.

Among Guadeloupeans, then, there remains a fundamental tension over how to navigate their ‘French’ status—especially on an island whose local economy caters almost entirely to French tourists. Whether Memorial ACTe has helped resolve that tension is an open question. But the opposition to it has revealed two contrasting visions for Guadeloupe’s future: continued unity with France, or complete autonomy from it.

Read the full article in the Atlantic.

Did Foucault reinvent his history of sexuality

through the prism of neoliberalism?

Mitchell Dean & Daniel Zamora, LA Review of Books, 18 April 2018

Although Foucault was not, of course, a partisan of neoliberalism in our contemporary sense, and partially perceived its dangers, it nevertheless seemed to offer new margins of freedom for minority practices, a stimulating starting point so as ‘to be governed less’ or at least to contest how to be governed, by whom, and for what purpose. Citing drug use, sexuality, and the refusal to work under the proposed system of negative tax, Foucault saw in neoliberalism a reflection of his own ambition to rethink the task of criticism outside a Marxist and socialist framework. In this sense, far from sketching separate research programs, the redefinition of the history of sexuality through the prism of self-techniques and the study of neoliberalism as a type of ‘governmentality’ indicate the philosopher’s desire to displace the politics of macro-economic structures onto the problematic of individual self-formation. That’s precisely why the historian Julian Bourg saw in those evolutions a turn to ethics in the French left, a turn that ‘revolutionized what was the very notion of revolution itself.’ However, in the long run, the slow substitution of ‘care of the self’ for the old ‘class struggle’ and the reduction of politics to the restrictive terms of the ethics of subjective identity, has, in many respects, fundamental affinities with contemporary neoliberalism. In this sense, though Foucault bequeathed us powerful tools to rethink our relationship with ourselves and the specificities of neoliberalism, these tools will prove very weak to challenge its logic. ‘Do not forget to invent our own life,’ concluded Foucault at the dawn of the entry of neoliberalism into mainstream political discourse. But does not this idea resonate surprisingly with Gary Becker’s call to become ‘entrepreneurs of ourselves’?…

We believe there is a significance to Foucault’s trajectory that goes beyond French intellectual history and politics. He inaugurated a whole series of concerns that came to characterize critical and radical thought over the ensuing 30-odd years, and which remain salient today. They included the questions of what sociologists in the 1990s would variously term ‘self-identity,’ ‘the reflexive project of the self,’ ‘inventing our selves,’ and ‘ethico-politics,’ which would place new forms of political action and self-invention on the agenda, often sidelining politics conducted through parties, trade unions, parliaments, and governments. It seems to us that the left — particularly what came to be described as the center left exemplified by the Third Way — came to focus on the arts of self-invention on the one hand and the neoliberal hollowing-out of the political on the other, while dismissing the salience of the ‘class struggle.’ From Trump to Brexit, to the demise of French socialism, the recent disaster of the Italian elections, and the crisis at the Nordic heart of the social democratic model, the folly of this strategic intersection and its evacuation of the problem of economic exploitation and inequality has become all too plain to see.

Read the full article in the Los Angeles Review of Books.

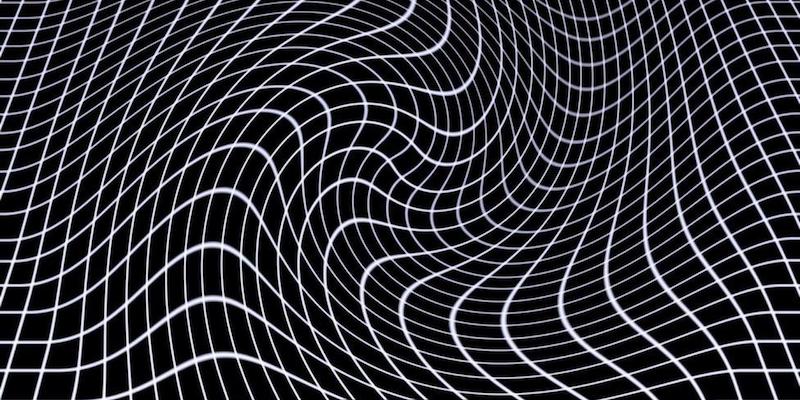

How gravitational waves could solve

some of the universe’s deepest mysteries

Davide Castelvecchi, Nature, 11 April 2018

With a handful of discoveries already under their belts, gravitational-wave scientists have a long list of what they expect more data to bring, including insight into the origins of the Universe’s black holes; the extreme conditions inside neutron stars; a chronicle of how the Universe structured itself into galaxies; and the most-stringent tests yet of Albert Einstein’s general theory of relativity. Gravitational waves might even provide a window into what happened in the first few moments after the Big Bang.

Researchers will soon start working down this list, with the help of the US-based Laser Interferometer Gravitational-Wave Observatory (LIGO), the Virgo observatory near Pisa, Italy, and a similar detector in Japan that could begin making observations next year. They will get an extra boost from space-based interferometers, and from terrestrial ones that are still on the drawing board — as well as from other methods that could soon start producing their own first detections of gravitational waves (see ‘The gravitational-wave spectrum’)…

For a field of research that is not yet three years old, gravitational-wave astronomy has delivered discoveries at a staggering rate, outpacing even the rosiest expectations. In addition to the discovery in August of the neutron-star merger, LIGO has recorded five pairs of black holes coalescing into larger ones since 2015 (see ‘Making waves’). The discoveries are the most direct proof yet that black holes truly exist and have the properties predicted by general relativity. They have also revealed, for the first time, pairs of black holes orbiting each other.

Researchers now hope to find out how such pairings came to be. The individual black holes in each pair should form when massive stars run out of fuel in their cores and collapse, unleashing a supernova explosion and leaving behind a black hole with a mass ranging from a few to a few dozen Suns…

Further observations could also provide insight into some of the fundamental questions about black-hole formation and stellar evolution. Collecting many measurements of masses should reveal gaps — ranges in which few or no black holes exist, says Vicky Kalogera, a LIGO astrophysicist at Northwestern University in Evanston, Illinois. In particular, ‘there should be a paucity of black holes at the low-mass end’, she says, because relatively small supernovae tend to leave behind neutron stars, not black holes, as remnants. And at the high end — around 50 times the mass of the Sun — researchers expect to see another cut-off. In very large stars, pressures at the core are thought eventually to produce antimatter, causing an explosion so violent that the star simply disintegrates without leaving any remnants at all. These events, called pair-instability supernovae, have been theorized, but so far there has been scant observational evidence to back them up.

Eventually, the black-hole detections will delineate a map of the Universe in the way galaxy surveys currently do, says Rainer Weiss, a physicist at the Massachusetts Institute of Technology in Cambridge who was the principal designer of LIGO. Once the numbers pile up, ‘we can actually begin to see the whole Universe in black holes’, he says. ‘Every piece of astrophysics will get something out of that.’

Read the full article in Nature.

‘No company is so important

its existence justifies setting up a police state’

Noah Kulwin & Richard Stallman, New York Magazine, 18 April 2018

I’m interested in how you think that the major digital platforms in particular, and Silicon Valley more broadly, sort of … went off the rails. I’m thinking of the toxic nature of many of these communities and platforms online — issues with data privacy, the ability to be abused for electioneering or other purposes, and so on.

You’re talking about very — about specific manifestations, and in some cases in ways that presuppose a weak solution.

What is data privacy? The term implies that if a company collects data about you, it should somehow protect that data. But I don’t think that’s the issue. I think the problem is that it collects data about you period. We shouldn’t let them do that.

I won’t let them collect data about me. I refuse to use the ones that would know who I am. There are unfortunately some areas where I can’t avoid that. I can’t avoid even for a domestic flight giving the information of who I am. That’s wrong. You shouldn’t have to identify yourself if you’re not crossing a border and having your passport checked.

With prescriptions, pharmacies sell the information about who gets what sort of prescription. There are companies that find this out about people. But they don’t get much of a chance to show me ads because I don’t use any sites in a way that lets them know who I am and show ads accordingly.

So I think the problem is fundamental. Companies are collecting data about people. We shouldn’t let them do that. The data that is collected will be abused. That’s not an absolute certainty, but it’s a practical, extreme likelihood, which is enough to make collection a problem.

A database about people can be misused in four ways. First, the organization that collects the data can misuse the data. Second, rogue employees can misuse the data. Third, unrelated parties can steal the data and misuse it. That happens frequently, too. And fourth, the state can collect the data and do really horrible things with it, like put people in prison camps. Which is what happened famously in World War II in the United States. And the data can also enable, as it did in World War II, Nazis to find Jews to kill.

In China, for example, any data can be misused horribly. But in the U.S. also, you’re looking at a CIA torturer being nominated to head the CIA, and we can’t assume that she will be rejected. So when you put this together with the state spying that Snowden told us about, and with the Patriot Act that allows the FBI to take almost any database of personal data without even talking to a court, what you see is that for companies to have data about you is dangerous.

And I’m not interested in discussing the privacy policies that these companies have. First of all, privacy policies are written so that they appear to promise you some sort of respect for privacy, while in fact having such loopholes that the company can do anything at all. But second, the privacy policy of the company doesn’t do anything to stop the FBI from taking all that data every week. Anytime anybody starts collecting some data, if the FBI thinks it’s interesting, it will grab that data.

Read the full article in New York Magazine.

More equal than others

Amia Srinivasan, New York Review of Books, 19 April 2018

For many of the founding fathers, the principle of basic equality was consistent with some people being the property of others; in 1776 the abolitionist Thomas Day remarked that ‘if there be an object truly ridiculous in nature, it is an American patriot, signing resolutions of independency with the one hand, and with the other brandishing a whip over his affrighted slaves.’ A commitment to basic equality was also apparently consistent with ‘free’ women being legally excluded from civic life. Today, the US’s commitment to basic equality is apparently consistent with not only enormous socioeconomic inequality, but also enormous inequality of opportunity, much of it still determined by race and gender.

The seeming compatibility of basic equality with gross material and social inequality has led more than one critic (Marx most obviously) to wonder if talk of being ‘created equal’ is a hollow spiritual promise designed to placate those suffering from earthly misery. That basic equality is not such a hollow promise—that it means something substantial, and that it is crucial to our political morality—is the central thesis of Jeremy Waldron’s One Another’s Equals.

In the postwar period, political philosophers have been much concerned with equality, though their concern has generally focused on what Waldron calls ‘surface-level issues’ of equality: whether, as egalitarians, we should be aiming for equality of well-being, equality of material resources, or equality of opportunity. (By calling such questions ‘surface-level’ Waldron doesn’t mean they are superficial; he calls them ‘some of the most intractable problems of political philosophy.’ He means they take for granted that some sort of egalitarianism is right.)

Meanwhile, less has been said in the postwar period about what might justify a commitment to basic equality: why we should be some sort of egalitarian at all. Writing in 1962, Bernard Williams suggested that our common capacities to suffer and to love were the grounds of universal human equality. A year later, John Rawls proposed that what he called a ‘sense of justice,’ a desire and ability to act on the demands of justice, was both necessary and sufficient to ground human equality. In his book, Waldron attempts to elaborate and improve on such proposals in a way that is both more systematic and, he says, more ‘wide-ranging’ than contemporary philosophical discussions of equality.

A helpful way of thinking about basic equality, Waldron says, is that it denies that there are fundamental differences of kind between humans. While there are humans in different conditions—children and adults, the married and unmarried, citizens and aliens—there are not humans of different sorts, demanding fundamentally different forms of moral attention. Of course much turns on what we mean by ‘fundamentally’ here. Clearly, the particular vulnerability of children means that they demand certain kinds of moral treatment that adults do not. More controversially, we might think that distinguishing between citizens and aliens involves treating some humans as if they were more deserving of our moral consideration than others. (How much of a consolation is it to tell a Syrian refugee that, while the border is closed to her, we are all created equal?)

Read the full article in New York Review of Books.

Finger fossil puts people in Arabia at least 86,000 years ago

Bruce Bower, Science News, 9 April 2018

A single human finger bone from at least 86,000 years ago points to Arabia as a key destination for Stone Age excursions out of Africa that allowed people to rapidly spread across Asia.

Excavations at Al Wusta, a site in Saudi Arabia’s Nefud desert, produced this diminutive discovery. It’s the oldest known Homo sapiens fossil outside of Africa and the narrow strip of the Middle East that joins Africa with Asia, based on dating of the bone itself, says a team led by archaeologists Huw Groucutt and Michael Petraglia. This new find strengthens the idea that early human dispersals out of Africa began well before the traditional estimated departure time of 60,000 years ago and extended deep into Arabia, the scientists report April 9 in Nature Ecology & Evolution.

‘Although long considered to be far from the main stage of human evolution, Arabia was a stepping stone from Africa into Asia,’ says Petraglia, of the Max Planck Institute for the Science of Human History in Jena, Germany…

The 2016 Al Wusta find is probably the middle bone from an adult’s middle finger, suspects Groucutt, of the University of Oxford. It’s unclear whether the bone came from a man or a woman, or from a right or left hand.

It’s definitely human, though. To establish the fossil’s identity, the researchers compared a 3-D image of the ancient finger bone with corresponding bones of present-day people, apes and monkeys, as well as Neandertals and other ancient hominids.

The newly discovered fossil fits into a rough timeline of Stone Age human departures from Africa. H. sapiens reached what’s now Israel as early as 194,000 years ago and East Asia by at least 80,000 years ago. Humans arrived in Indonesia and Australia shortly before 60,000 years ago.

Read the full article in Science News.

The philosopher in dark times

George Prochnik, New York Times, 12 April 2018

In New York, Arendt’s intellectual acuity and conversational punch swiftly translated into social cachet. After meeting her at a dinner party in the mid-1940s, the literary critic Alfred Kazin was smitten: ‘Darkly handsome, bountifully interested in everything, this 40-year-old German refugee with a strong accent and such intelligence — thinking positively cascades out of her in waves,’ he wrote in his diary. Though she would only fully embrace the principle of amor mundi, love of the world, after contending philosophically with the cataclysm of World War II, the insatiable curiosity was there early on. ‘I believe it is very likely that men, if they ever should lose their ability to wonder and thus cease to ask unanswerable questions, also will lose the faculty of asking the answerable questions upon which every civilization is founded,’ she declared in one address. Arendt’s sheer delight in intellectual speculation counterpoints her intense ethical commitment to thinking as a form of political engagement.

The relationship was sometimes uneasy and often controversial, most famously in the case of her account of Adolf Eichmann’s trial in Jerusalem, in which she coined the term ‘the banality of evil.’ Watching Eichmann testify in his glass booth, Arendt became convinced that he was, above all, an inarticulate buffoon whose wicked deeds resulted from his participation in a bureaucratic structure that dissipated the sense of personal responsibility, and deadened the capacity for cognition. Gershom Scholem, the pioneering scholar of kabbalah, was one of many public intellectuals who felt that Arendt had lost track of the human reality of the Holocaust amid the scintillating twists of her argument. She had failed to reckon with the raw pleasure that playing God over others could afford, and so had overemphasized the role of systemically enforced thoughtlessness in preparing individuals to execute enormous crimes. Recent historical scholarship suggests that Arendt did, indeed, underestimate Eichmann’s ideological passion for National Socialism: Much of his clownish bumbling in Jerusalem may have been a conscious, self-exculpating performance. But her core insight into how even mediocrities can be institutionally benumbed and conscripted into heinous projects remains fertile.

Some of the work anthologized in this volume, edited by Jerome Kohn, comprises Arendt’s responses to current events, like her analysis of the televised 1960 national conventions, in which Kennedy and Nixon were the principal rivals, offering a rather surprising defense of the onscreen experience as a revealing format for viewing those ‘imponderables of character and personality which make us decide, not whether we agree or disagree with somebody, but whether we can trust him.’ Other essays provide deep conceptual etymologies of historical events, key figures and schools of thought. These include her profoundly enlightening study of how Karl Marx fits into the long Western political tradition and her detailed analysis of the challenge that the 1956 Hungarian revolution posed to the Russian military and propagandistic juggernaut. The most dynamic pieces here are Arendt’s interviews, in which the sweep and depth of her ruminations are layered with the caustic wit and engagé appeal of her voice. For all Arendt’s opposition to totalitarianism — and her willingness to implicate Marx in the development of certain totalitarian movements — Arendt remained unabashedly enamored of Marx’s proposition that ‘the philosophers have only interpreted the world. … The point, however, is to change it.’ She relished his determination to wrest higher thought from the supine realm of the Greek symposium and thrust it into the ring of political activism, challenging, as she wrote, ‘the philosophers’ resignation to do no more than find a place for themselves in the world, instead of changing the world and making it ‘philosophical.’’ For Arendt, thinking that helped advance the cause of human freedom entailed a form of relentlessly critical examination that imperiled ‘all creeds, convictions and opinions.’ There could be no dangerous thoughts simply because thinking itself constituted so dangerous an enterprise.

Read the full article in the New York Times.

What can we learn when a clinical trial is stopped?

David Dobbs, Mosaic, 16 April 2018

But what about the treatment? In the months and years after the November 2013 halt, as data accrued from patients who continued with the treatment, it became clear that more and more of them were moving towards and past the 40 per cent improvement threshold; some were even in remission.

Most of the researchers had access to reports about the data as it accrued. But the first presentation of results – a presentation sharply constrained by journal guidelines, St Jude’s proprietary hold on the raw data, and authorial arguments about relevance – was made public only in October 2017.

The paper, finally published in Lancet Psychiatry, presented patient data through 24 months of activation. When tracked for two years instead of the six months used for the futility analysis, the percentage of active-treatment patients whose depression scores dropped by at least 40 per cent more than doubled, to 50 per cent of all those in the original active group. The remission rate also rose, from 10 per cent at six months to 31 per cent at 24 months. These rates come very close to Mayberg’s open-label trials.

Also encouraging was the response of patients in the control group when their implants were activated after six months, for they continued to roughly match that of the group whose implants had been active from the start. The control group’s full-remission rate once active, however, was lower. This may be because the control group’s smaller size can magnify the statistical impact of small differences in the depression scores of those patients. We don’t know, for instance, whether a high number of control-patient scores fell just short of remission (or, conversely, barely reached it) – just one of many things we can’t know because neither St Jude nor Abbott has published the individual-patient data.

In any case, this long-term Broaden data echoes Mayberg’s findings, in several open-label studies done before and during the time of the Broaden trial, that the treatment gains effectiveness over time. The two-year results for these intensely sick people – half reaching the 40 per cent improvement threshold, almost a third in remission – stand sharply at odds with the six-month scores.

What we have here is a failed clinical trial – of a treatment that seems to work.

Read the full article in Mosaic.

‘There is no such thing as past or future’: physicist Carlo Rovelli on changing how we think about time

Charlotte Higgins, Guardian, 14 April 2018

Rovelli’s view is that there is no contradiction between a vision of the universe that makes human life seem small and irrelevant, and our everyday sorrows and joys. Or indeed between ‘cold science’ and our inner, spiritual lives. ‘We are part of nature, and so joy and sorrow are aspects of nature itself – nature is much richer than just sets of atoms,’ he tells me. There’s a moment in Seven Lessons when he compares physics and poetry: both try to describe the unseen. It might be added that physics, when departing from its native language of mathematical equations, relies strongly on metaphor and analogy. Rovelli has a gift for memorable comparisons. He tells us, for example, when explaining that the smooth ‘flow’ of time is an illusion, that ‘The events of the world do not form an orderly queue like the English, they crowd around chaotically like the Italians.’ The concept of time, he says, ‘has lost layers one after another, piece by piece’. We are left with ‘an empty windswept landscape almost devoid of all trace of temporality … a world stripped to its essence, glittering with an arid and troubling beauty’.

More than anything else I’ve ever read, Rovelli reminds me of Lucretius, the first-century BCE Roman author of the epic-length poem, On the Nature of Things. Perhaps not so odd, since Rovelli is a fan. Lucretius correctly hypothesised the existence of atoms, a theory that would remain unproven until Einstein demonstrated it in 1905, and even as late as the 1890s was being written off as absurd.

What Rovelli shares with Lucretius is not only a brilliance of language, but also a sense of humankind’s place in nature – at once a part of the fabric of the universe, and in a particular position to marvel at its great beauty. It’s a rationalist view: one that holds that by better understanding the universe, by discarding false beliefs and superstition, one might be able to enjoy a kind of serenity. Though Rovelli the man also acknowledges that the stuff of humanity is love, and fear, and desire, and passion: all made meaningful by our brief lives; our tiny span of allotted time.

Read the full article in the Guardian.

Cecil Taylor and the art of noise

Alex Ross, New Yorker, 10 April 2018