The latest (somewhat random) collection of recent essays and stories from around the web that have caught my eye and are worth plucking out to be re-read.

‘It’s nothing like a broken leg’:

why I’m done with the mental health conversation

Hannah Jane Parkinson, Guardian, 30 June 2018

I will admit that I am not well. That writing this, right now, I am not well. This will colour the writing.

But it is part of why I want to write, because another part of the problem is that we write about it when we are out the other side, better. And I understand: it’s ugly up close; you can see right into the burst vessels of the thing. (Also, on a practical level, it is difficult to write when one is unwell.) But then what we end up with has the substance of secondary sources. When we do see it in its rawness – Sinéad O’Connor releasing a Facebook video in utter despair – who among us does not wince?

The primary danger used to be glamorising. It was cool to be a bit mad. It meant you were a genius or a creative. It wasn’t just that certain mental illnesses were acceptable, but certain mental illnesses were acceptable in certain types of people: if you had a special skill or talent or architect-set cheekbones. All of this remains true. Sure, Robert Lowell, great poet. Madness excused. Amy Winehouse, voice of a goddamn goddess. We’ll allow. Kathy, 54, works at Morrisons. Not so much. White woman who has recourse to a national newspaper (called Hannah). Perhaps. Black man who comes from a cultural background where mental illness isn’t recognised and whose symptoms might be put down to the racist trope of aggression in people of colour. Nah, mate.

But now there is also a new danger. It is ‘normalising’. This is meant to be a positive – as in, ‘What is normal, anyway?!’ Which is a fair question, but I don’t think it’s the woman who crept into my inpatient room, stole the newspapers I had, found me in the lounge and ripped them up slowly in front of my eyes. I don’t think it’s me, sitting in a tiny, airless hospital room, carving my name into the wall with a ballpoint pen, with three guards for company, one of whom later tries to add me on Facebook.

We should normalise the importance of good mental health and wellbeing, of course. Normalise how important it is to look after oneself – eat well, socialise, exercise – and how beneficial it can and should be to talk and ask for help. But don’t conflate poor mental health with mental illness, even if one can lead to the other. One can have a mental illness and good mental health, and vice versa.

Don’t pathologise normal processes such as grief, or the profound sadness of a relationship breakdown, or the stress of moving house. Conversely, don’t tell me it is normal when I go from being the type of person who will offer children piggyback rides up the steepness of north London to glaring at a crying baby on a bus. Or that it is normal to blow thousands of pounds on sporadically moving house without terminating a current lease, or to send friends bizarre, pugilistic texts in the night.

Read the full article in the Guardian.

The rise of bullshit jobs

David Graeber & Suzi Weissman, Jacobin, 30 June 2018

In the book, you start out by distinguishing the bullshit jobs from shit jobs. Maybe we should start doing that right now, so we can talk about what the bullshit jobs are?

DG Yeah, people often make this mistake. When you talk about bullshit jobs, they just think jobs that are bad, jobs that are demeaning, jobs that have terrible conditions, no benefits, and so forth. But actually, the irony is that those jobs actually aren’t bullshit. You know, if you have a bad job, chances are that it’s actually doing some good in the world. In fact, the more your work benefits other people, the less they’re likely to pay you, and the more likely it is to be a shit job in that sense. So, you can almost see it as an opposition.

On the one hand, you have the jobs that are shit jobs but are actually useful. If you’re cleaning toilets or something like that, toilets do need to be cleaned, so at least you have the dignity of knowing you’re doing something which is benefiting other people — even if you don’t get much else. And on the other hand, you have jobs where you’re treated with dignity and respect, you get good payment, you get good benefits, but you secretly labor under the knowledge that your job, your work, is entirely useless.

You divide your chapters into the different kinds of bullshit jobs. There’s flunkies, goons, duct-tapers, box-tickers, task-makers, and what I think of as bean-counters. Maybe we can go through what these categories are.

DG Sure. This came from my own work, of asking people to send me testimonies. I assembled several hundred testimonies from people who had bullshit jobs. I asked people, ‘What’s your most pointless job you ever had? Tell me all about it; how do you think it happened, what’s the dynamics, did your boss know?’ I got that kind of information. I did little interviews with people afterwards, follow-up stuff. And so, in a way, we came up with category-systems together. People would suggest ideas to me, and gradually it came together to five categories.

As you say, we have, first, the flunkies. That’s kind of self-evident. A flunky exists only to make someone else look good. Or feel good about themselves, in some cases. We all know what kind of jobs they are, but an obvious example would be, say, a receptionist at a place that doesn’t actually need a receptionist. Some places obviously do need receptionists, who are busy all the time. Some places the phone rings maybe once a day. But you still have to have someone — sometimes two people — sitting there, looking important. So, I don’t have to call somebody on the phone, I’ll have someone who will just say, ‘There is a very important broker who wants to speak to you.’ That’s a flunky.

A goon is a little subtler. But I kind of had to make this category because people kept telling me they felt that their jobs were bullshit — if they were a telemarketer, if they were a corporate lawyer, if they were in PR, marketing, things like that. I had to come to terms with why it was they felt that way.

The pattern seemed to be that these are jobs that are actually useful in many cases for the companies they work for, but they felt the entire industry shouldn’t exist. They’re basically people there to annoy you, to push you around in some way. And insofar as it is necessary, it’s only necessary because other people have them. You don’t need a corporate lawyer if your competitor doesn’t have a corporate lawyer. You don’t need a telemarketer at all, but insofar as you can make up an excuse to say you need them, it’s because the other guys got one. Alright, so that’s easy enough.

Duct-tapers are people who are there to solve problems that shouldn’t exist in the first place. At my old university, we only seemed to have one carpenter, and it was really hard to get them. There was a point where the shelf collapsed in my office at the university where I was working in England. The carpenter was supposed to come, and there was a huge hole in the wall, you could look at the damage. And he never seemed to show up, he always had something else to do. We finally figured out that there was this one guy sitting there all day, apologizing for the fact that the carpenter never came.

He’s very good at the job, he’s a very likable fellow who always seemed a little sad and melancholy, and it was very hard to get angry at him, which is of course what his job was. Be a flak-catcher, effectively. But at one point I thought, if they fired that guy and hired another carpenter, they wouldn’t need him. So, that’s a classic example of a duct-taper.

Read the full article in Jacobin.

In Denmark, harsh new laws for immigrant ‘ghettos’

Ellen Barry & Martin Selsoe Sorensen,

New York Times, 1 July 2018

Yildiz Akdogan, a Social Democrat whose parliamentary constituency includes Tingbjerg, which is classified as a ghetto, said Danes had become so desensitized to harsh rhetoric about immigrants that they no longer register the negative connotation of the word ‘ghetto’ and its echoes of Nazi Germany’s separation of Jews.

‘We call them ‘ghetto children, ghetto parents,’ it’s so crazy,’ Ms. Akdogan said. ‘It is becoming a mainstream word, which is so dangerous. People who know a little about history, our European not-so-nice period, we know what the word ‘ghetto’ is associated with.’

She pulled out her phone to display a Facebook post from a right-wing politician, railing furiously at a Danish supermarket for selling a cake reading ‘Eid Mubarak,’ for the Muslim holiday of Eid. ‘Right now, facts don’t matter so much, it’s only feelings,’ she said. ‘This is the dangerous part of it.’

For their part, many residents of Danish ‘ghettos’ say they would move if they could afford to live elsewhere. On a recent afternoon, Ms. Naassan was sitting with her four sisters in Mjolnerparken, a four-story, red brick housing complex that is, by the numbers, one of Denmark’s worst ghettos: forty-three percent of its residents are unemployed, 82 percent come from ‘non-Western backgrounds,’ 53 percent have scant education and 51 percent have relatively low earnings.

The Naassan sisters wondered aloud why they were subject to these new measures. The children of Lebanese refugees, they speak Danish without an accent and converse with their children in Danish; their children, they complain, speak so little Arabic that they can barely communicate with their grandparents. Years ago, growing up in Jutland, in Denmark’s west, they rarely encountered any anti-Muslim feeling, said Sara, 32.

‘Maybe this is what they always thought, and now it’s out in the open,’ she said. ‘Danish politics is just about Muslims now. They want us to get more assimilated or get out. I don’t know when they will be satisfied with us.’

Rokhaia, her due date fast approaching, flared with anger at the mandatory preschool program approved by the government last month: Already, she said, her daughter was being taught so much about Christmas in kindergarten that she came home begging for presents from Santa Claus.

Read the full article in the New York Times.

Thomas Bayes and the crisis in science

David Papineau, TLS, 28 June 2018

Bayes’s reasoning works best when we can assign clear initial probabilities to the hypotheses we are interested in, as when our knowledge of the minting machine gives us initial probabilities for fair and biased coins. But such well-defined ‘prior probabilities’ are not always available. Suppose we want to know whether or not heart attacks are more common among wine than beer drinkers, or whether or not immigration is associated with a decline in wages, or indeed whether or not the universe is governed by a benign deity. If we had initial probabilities for these hypotheses, then we could apply Bayes’s methodology as the evidence came in and update our confidence accordingly. Still, where are our initial numbers to come from? Some preliminary attitudes to these hypotheses are no doubt more sensible than others, but any assignment of definite prior probabilities would seem arbitrary.

It was this ‘problem of the priors’ that historically turned orthodox statisticians against Bayes. They couldn’t stomach the idea that scientific reasoning should hinge on personal hunches. So instead they cooked up the idea of ‘significance tests’. Don’t worry about prior probabilities, they said. Just reject your hypothesis if you observe results that would be very unlikely if it were true.

This methodology was codified at the beginning of the twentieth century by the rival schools of Fisherians (after Sir Ronald Fisher) and Neyman-Pearsonians (Jerzy Neyman and Egon Pearson). Various bells and whistles divided the two groups, but on the basic issue they presented a united front. Forget about subjective prior probabilities. Focus instead on the objective probability of the observed data given your hypothesized cause. Pick some level of improbability you won’t tolerate (the normally recommended level was 5 per cent). Reject your hypothesis if it implies the observed data are less likely than that.

In truth, this is nonsense on stilts. One of the great scandals of modern intellectual life is the way generations of statistics students have been indoctrinated into the farrago of significance testing. Take coins again. In reality you won’t meet a heads-biased coin in a month of Sundays. But if you keep tossing coins five times, and apply the method of significance tests ‘at the 5 per cent level’, you’ll reject the hypothesis of fairness in favour of heads-biasedness whenever you see five heads, which will be about once every thirty-second coin, simply because fairness implies that five heads is less likely than 5 per cent.

This isn’t just an abstract danger. An inevitable result of statistical orthodoxy has been to fill the science journals with bogus results. In reality genuine predictors of heart disease, or of wage levels, or anything else, are very thin on the ground, just like biased coins. But scientists are indefatigable assessors of unlikely possibilities. So they have been rewarded with a steady drip of ‘significant’ findings, as every so often a lucky researcher gets five heads in a row, and ends up publishing an article reporting some non-existent discovery.
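The coin arithmetic in the passage above can be checked directly. What follows is only a rough sketch, not anything taken from Papineau's article: the function names are invented for illustration, and the prior (one coin in a thousand is biased) and the bias (a biased coin lands heads 90 per cent of the time) are assumed numbers chosen purely to make the Bayesian comparison concrete.

```python
from fractions import Fraction


def p_all_heads(n_tosses, p_heads=Fraction(1, 2)):
    """Probability that every one of n_tosses independent tosses lands heads."""
    return p_heads ** n_tosses


def rejects_fairness(n_tosses, level=Fraction(5, 100)):
    """Significance-test rule from the passage: reject 'fair' when the
    all-heads outcome is less likely under fairness than the chosen level."""
    return p_all_heads(n_tosses) < level


def posterior_biased(n_tosses, prior_biased, p_heads_if_biased):
    """Bayes's rule: probability the coin is biased after seeing all heads.

    prior_biased and p_heads_if_biased are illustrative assumptions,
    not figures from the article.
    """
    likelihood_biased = p_all_heads(n_tosses, p_heads_if_biased)
    likelihood_fair = p_all_heads(n_tosses)
    numerator = prior_biased * likelihood_biased
    return numerator / (numerator + (1 - prior_biased) * likelihood_fair)


if __name__ == "__main__":
    # A fair coin gives five heads once in every 32 runs of five tosses.
    print(p_all_heads(5))  # 1/32
    # 1/32 is below 5 per cent, so the test rejects fairness on five heads.
    print(rejects_fairness(5))
    # With the assumed prior and bias, the chance the coin really is biased.
    print(float(posterior_biased(5, Fraction(1, 1000), Fraction(9, 10))))
```

Under those assumed numbers the posterior probability of bias stays small even after five heads in a row, which is the point of the passage: a result that is 'significant' at the 5 per cent level can still leave the rejected hypothesis by far the more probable one.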

Read the full article in the TLS.

The long shadow of 9/11

Robert Malley & Jon Finer,

Foreign Affairs, July/August 2018

But it’s not just American politics that suffers from an overemphasis on counterterrorism; the country’s policies do, too. An administration can do more than one thing at once, but it can’t prioritize everything at the same time. The time spent by senior officials and the resources invested by the government in finding, chasing, and killing terrorists invariably come at the expense of other tasks: for example, addressing the challenges of a rising China, a nuclear North Korea, and a resurgent Russia.

The United States’ counterterrorism posture also affects how Washington deals with other governments—and how other governments deal with it. When Washington works directly with other governments in fighting terrorists or seeks their approval for launching drone strikes, it inevitably has to adjust aspects of its policies. Washington’s willingness and ability to criticize or pressure the governments of Egypt, Pakistan, Saudi Arabia, and Turkey, among others, is hindered by the fact that the United States depends on them to take action against terrorist groups or to allow U.S. forces to use their territory to do so. More broadly, leaders in such countries have learned that in order to extract concessions from American policymakers, it helps to raise the prospect of opening up (or shutting down) U.S. military bases or granting (or withdrawing) the right to use their airspace. And they have learned that in order to nudge the United States to get involved in their own battles with local insurgents, it helps to cater to Washington’s concerns by painting such groups (rightly or wrongly) as internationally minded jihadists.

The United States also risks guilt by association when its counterterrorism partners ignore the laws of armed conflict or lack the capacity for precision targeting. And other governments have become quick to cite Washington’s fight against its enemies to justify their own more brutal tactics and more blatant violations of international law. It is seldom easy for U.S. officials to press other governments to moderate their policies, restrain their militaries, or consider the unintended consequences of repression. But it is infinitely harder when those other states can justify their actions by pointing to Washington’s own practices—even when the comparison is inaccurate or unfair.

These policy distortions are reinforced and exacerbated by a lopsided interagency policymaking process that emerged after the 9/11 attacks. In most areas, the process of making national security policy tends to be highly regimented. It involves the president’s National Security Council staff; deputy cabinet secretaries; and, for the most contentious, sensitive, or consequential decisions, the cabinet itself, chaired by either the national security adviser or the president. But since the Bush administration, counterterrorism has been run through a largely separate process, led by the president’s homeland security adviser (who is technically a deputy to the national security adviser) and involving a disparate group of officials and agencies. The result in many cases is two parallel processes – one for terrorism, another for everything else – which can result in different, even conflicting, recommendations before an ultimate decision is made.

Read the full article in Foreign Affairs.

An aversion to dolls and dresses

is no proof you’re a man

Hadley Freeman, Guardian, 5 July 2018

I thought about that period of my life this week when I read an interview in the Daily Mirror with a transgender teenager who was born female. At the age of six, he told his mother he wanted to climb trees with boys. ‘Don’t do that, it’s not what girls do,’ his mother replied. According to the report, the six-year-old replied, ‘Well, I’ll have a sex-change then.’

‘I never fitted in with the girls at school. I didn’t like makeup, or dresses, and I never wanted to shave my legs or armpits, like everyone else. I really wanted to do all the things boys did, and wear masculine clothes. I think even that young, deep down, I knew I wanted to be a man,’ he told the Mirror.

Whether somebody really decided to change sex because of climbing trees and makeup or, as is far more likely, this was oversimplified reporting on the Mirror’s part, nowhere in the article is there any suggestion that an aversion to feminine clothing doesn’t prove you’re a man. This interview was strikingly reminiscent of an interview on BBC Radio 4’s iPM programme, aired in 2016, in which a mother said she realised her three-year-old daughter wasn’t really a girl because she played with Wolverine toys instead of baby dolls. The interviewer did not challenge her. CBBC screened a documentary in the same year, I Am Leo, about a trans boy, in which transgenderism was explained with the help of pink brains for girls and blue brains for boys.

What kind of message is this sending to girls: that if they don’t like dolls, or pink, they are literally in the wrong body? It’s hard to see how it helps anyone – least of all transgender people – for the media to reduce transgenderism to feelings about makeup, and the female experience to femininity.

I once thought that definitions of gender would keep expanding until the idea of gender itself was rendered irrelevant; but, to my astonishment, they have shrunk and hardened, and this has had an effect on how men and women see each other and themselves. In Nanette, her excellent new standup show on Netflix, the comedian Hannah Gadsby talks about her weariness with being told by members of the public that she must be a trans man in denial, because she is a lesbian who wears trousers. Because that’s where some of the current gender debates have led us: to women being told that they’re not really women if they don’t enjoy wearing pretty dresses.

Far from gender being ‘on a spectrum’, as the current lingo has it, too many well-meaning articles about it are more binary than ever: girls are like this, boys are like that. This is what feminists like me object to because gender is restrictive and femininity in particular was conceived to be limiting. News that 40 British schools have banned skirts in favour of trousers, in a hamfisted attempt at gender neutrality, is merely a different side to the same coin, because the message being sent to children is, ‘Skirts are too dangerously feminine to be worn by boys.’

Read the full article in the Guardian.

Out of sight, out of our minds

Ed Burmila, The Baffler, 20 June 2018

The tiny Pacific island nation of Nauru, all 8.1 square miles of it, used to be a pile of shit; that is, a pile of very valuable, phosphate-rich seabird guano. That explains why a tiny speck in the middle of nowhere was fought over and colonized by the Germans, then Australia and New Zealand (joint hall monitors of a League of Nations mandate), then the Japanese, and then the British.

When it achieved independence in 1968 it was better positioned for the future than most European colonies in the Pacific because the phosphate reserves were not yet depleted. More commonly the UK would grant independence immediately after the last phosphates were mined (as in Kiribati in 1979), and some cynics have suggested that such timing was not entirely coincidental. But Nauru appeared to do everything right. It set up a national Trust for its guano earnings and from 1968 to 1980 its roughly ten thousand citizens were the wealthiest people per capita on Earth.

Alas, the Trust fell to a series of zany investment schemes, including Air Nauru (repo men took its sole Boeing 737), luxury properties in Sydney and Melbourne (used mostly for Nauruan elites to treat themselves to luxury vacations), and, I shit you not, financing the 1993 West End flop Leonardo the Musical. By 2003 Nauru’s once-vaunted phosphate Trust had dwindled from well over $1 billion to $100 million. If that sounds like a lot of money, it isn’t for a nation with virtually no other substantial revenue stream. The guano was gone. Tourism was impossible (too remote, too underdeveloped). Manufacturing was nonexistent. Aside from a little fishing, there was no way to bring in money.

And that is how in 2001 the Nauru Regional Processing Centre came into being, an economic life preserver tossed at a desperate nation by Australia. The Aussies, as it happened, were in need of a place to stash thousands of would-be immigrants and asylum seekers it very much did not want on Australian soil. Legally, if migrants could be intercepted at sea and prevented from setting foot in Australia proper, the government could maintain legal cover for denying them a slew of rights and privileges.

In exchange for detaining this most inconvenient population indefinitely, Nauru received a steady and valuable influx of Australian dollars. The island was once again rich in resources, only this time the minerals were the poor, the abused, the war-ravaged, and the persecuted of Indonesia, Bangladesh, Afghanistan, Cambodia, and other troubled spots throughout Asia.

Read the full article in the Baffler.

Marketisation is destroying academic standards

through rampant grade inflation

Lee Jones, Medium, 21 June 2018

League tables are a crucial mechanism through which market pressures operate. One widely used metric is ‘value added’, a toe-curling term denoting the gap between the qualifications students enter university with, and their final degree classifications. ‘Value added’ is heavily weighted in many league tables, being used as a crude proxy for ‘teaching quality’.

Genuinely improving student attainment is hard, because it is shaped by many factors beyond lecturers’ control, like students’ background, home life, income, mental health, personal commitment, and so on. These factors are never accounted for in league tables. Conversely, it is very easy to improve a department’s ‘value added’ score: simply award higher grades, so the gap between entry qualifications and degree classifications widens.

The National Student Survey (NSS) has a similar impact. NSS scores on feedback and ‘overall satisfaction’ are incorporated into many league tables. Since NSS scores are correlated with degree results, one easy way to game them is to inflate grades. Many universities also cajole or bribe students to give good feedback, despite guidance to the contrary.

Since league tables heavily influence students’ choice of university, the temptation to fiddle one’s ranking is enormous. Moreover, when all of one’s ‘competitors’ are doing the same, resistance becomes suicidal, risking falling student recruitment and financial collapse.

In theory, a department’s external examiners — academics recruited from other institutions to ensure assessment procedures are rigorous — should arrest this vicious spiral. However, if their own universities are inflating grades, externals are unlikely to be critical, and may even admonish departments for falling behind.

Universities have internalised the existential threat of market competition by building grade inflation into assessment practices. Degree classifications are typically generated by algorithms that unevenly weight different years and modules. In a 2015 survey, 39 percent of universities admitted to having changed their algorithms since 2010 so that they did not ‘disadvantage students in comparison with students in similar institutions’.

Read the full article on Medium.

Big Brother’s blind spot

Joanne McNeil, The Baffler, No 40, July 2018

Surveillance is ‘Orwellian when accurate, Kafkaesque when inaccurate,’ Privacy International’s Frederike Kaltheuner told me. These systems are probabilistic, and ‘by definition, get things wrong sometimes,’ Kaltheuner elaborated. ‘There is no 100 percent. Definitely not when it comes to subjective things.’ As a target of surveillance and data collection, whether you are a Winston Smith or Josef K is a matter of spectrum and a dual-condition: depending on the tool, you’re either tilting one way or both, not in the least because even data recorded with precision can get gummed up in automated clusters and categories. In other words, even when the tech works, the data gathered can be opaque and prone to misinterpretation.

Companies generally don’t flaunt their imperfection – especially those with Orwellian services under contract – but nearly every internet user has a story about being inaccurately tagged or categorized in an absurd and irrelevant way. Kaltheuner told me she once received an advertisement from the UK government ‘encouraging me not to join ISIS,’ after she watched hijab videos on YouTube. The ad was bigoted, and its execution was bumbling; still, to focus on the wide net cast is to sidestep the pressing issue: the UK government has no business judging a user’s YouTube history. Ethical debates about artificial intelligence tend to focus on the ‘micro level,’ Kaltheuner said. When ‘sometimes the broader question is, do we want to use this in the first place?’

This is precisely the question taken up by software developer Nabil Hassein in ‘Against Black Inclusion in Facial Recognition,’ an essay he wrote last year for the blog Decolonized Tech. Making a case both strategic and political, Hassein argues that technology under police control never benefits black communities and voluntary participation in these systems will backfire. Facial recognition commonly fails to detect black faces, in an example of what Hassein calls ‘technological bias.’ Rather than working to resolve this bias, Hassein writes, we should ‘demand instead that police be forbidden to use such unreliable surveillance technologies.’

Hassein’s essay is in part a response to Joy Buolamwini’s influential work as founder of the Algorithmic Justice League. Buolamwini, who is also a researcher at MIT Media Lab, is concerned with the glaring racial bias expressed in computer vision training data. The open source facial recognition corpus largely comprises white faces, so the computation in practice interprets aspects of whiteness as a ‘face.’ In a TED Talk about her project, Buolamwini, a black woman, demonstrates the consequences of this bias in real time. It is alarming to watch as the digital triangles of facial recognition software begin to scan and register her countenance on the screen only after she puts on a white mask. For his part, Hassein empathized with Buolamwini in his response, adding that ‘modern technology has rendered literal Frantz Fanon’s metaphor of ‘Black Skin, White Masks.’’ Still, he disagrees with the broader political objective. ‘I have no reason to support the development or deployment of technology which makes it easier for the state to recognize and surveil members of my community. Just the opposite: by refusing to don white masks, we may be able to gain some temporary advantages by partially obscuring ourselves from the eyes of the white supremacist state.’

Read the full article in the Baffler.

The complexities of Laura Ingalls Wilder

Terri Apter, TLS, 2 July 2018

Wilder’s descriptions of Native Americans are imbued with wonder and awe: these men were ‘thin and brown and bare . . . They sat straight up in their naked ponies and did not look right or left. But their black eyes glittered . . . The Indians’ faces were like the red-brown wood that Pa carved to make a bracket for Ma’. When one enters the house and greets Pa, Laura and Mary cannot take their eyes off the man sitting ‘so still that the beautiful eagle-feathers in his scalplock didn’t stir’. When he leaves, Pa notes his superior intelligence: ‘that was French he spoke. I wish I had picked up some of that lingo’. While Ma declares that ‘Indians’ should keep to themselves, Pa tells her there is nothing to worry about so long as ‘we treat them well’. The following day, when one man, passing on horseback, aims his rifle at the family dog, Jack, Pa says, ‘Well, it’s his path. An Indian trail, long before we came’.

Pa knew. He can articulate his knowledge of the Native Americans’ prior right and use it to understand their behaviour. But when he explains matters to Laura, he adopts the bland, unreflecting voice of privilege: ‘When white settlers come into a country, the Indians have to move on’. Laura is confused by the brutality of this displacement and protests, ‘But, Pa, I thought this was Indian Territory. Won’t it make the Indians mad to have to –’ and her father silences her: ‘No more questions, Laura’, he insists. ‘Go to sleep.’

Here Wilder depicts an age-old dynamic where a child speaks truth to an adult who insists that speaking such truth is not allowed. This is the all-too-common process of silencing a child’s grasp of natural justice, thereby disturbing the route from observation and empathy to understanding. It is also the process of instilling self-doubt and self-distrust in a child as she is told her views lack authority – a process repeated over and over in unequal societies, a process as old as the oldest myths, as the psychologist Carol Gilligan notes in The Birth of Pleasure (2002). Wilder portrays the subtle underpinnings of bias – a very different thing from endorsing bias.

Support for the degradation of Wilder’s reputation is also reported to have come from the pairing of two sentences explaining the father’s wish to go west ‘where there were no people. Only Indians lived there’. No reading of these sentences can exonerate their chilling implication, and Wilder herself apologized profusely, amending the text in 1953 to ‘where there were no settlers. Only Indians lived there’. Read in context, however, the initial bias revealed is not the stark assumption that ‘Indians are not people’. The ‘people’ Pa wants to escape are the people who frighten away the animals: ‘Pa . . . liked a country where the wild animals lived without being afraid’. Nor does the father like hearing ‘the thud of an axe which was not [his] axe, or the echo of a shot that did not come from his gun’. Wilder emphasizes the silence of Native Americans: ‘He stood in the doorway, looking at them, and they had not heard a sound’.

Read the full article in the TLS.

‘We will destroy everything’

Amnesty International, June 2018

Early in the morning of 25 August 2017, a Rohingya armed group known as the Arakan Rohingya Salvation Army (ARSA) launched coordinated attacks on security force posts in northern Rakhine State, Myanmar. In the days, weeks, and months that followed, the Myanmar security forces, led by the Myanmar Army, attacked the entire Rohingya population in villages across northern Rakhine State.

In the 10 months after 25 August, the Myanmar security forces drove more than 702,000 women, men, and children (more than 80 per cent of the Rohingya who lived in northern Rakhine State at the crisis’s outset) into neighbouring Bangladesh. The ethnic cleansing of the Rohingya population was achieved by a relentless and systematic campaign in which the Myanmar security forces unlawfully killed thousands of Rohingya, including young children; raped and committed other sexual violence against hundreds of Rohingya women and girls; tortured Rohingya men and boys in detention sites; pushed Rohingya communities toward starvation by burning markets and blocking access to farmland; and burned hundreds of Rohingya villages in a targeted and deliberate manner.

These crimes amount to crimes against humanity under international law, as they were perpetrated as part of a widespread and systematic attack against the Rohingya population. Amnesty International has evidence of nine of the 11 crimes against humanity listed in the Rome Statute of the International Criminal Court being committed since 25 August 2017, including murder, torture, deportation or forcible transfer, rape and other sexual violence, persecution, enforced disappearance, and other inhumane acts, such as forced starvation. Amnesty International also has evidence that responsibility for these crimes extends to the highest levels of the military, including Senior General Min Aung Hlaing, the Commander-in-Chief of the Defence Services.

Read the full report by Amnesty International.

Cages are cruel. The desert is, too.

Francisco Cantú, New York Times, 30 June 2018

It is important to understand that the crisis of separation manufactured by the Trump administration is only the most visibly abhorrent manifestation of a decades-long project to create a ‘state of exception’ along our southern border.

This concept was used by the Italian philosopher Giorgio Agamben in the aftermath of Sept. 11 to describe the states of emergency declared by governments to suspend or diminish rights and protections. In April, when the president deployed National Guard troops to the border (an action also taken by his two predecessors), he declared that ‘the situation at the border has now reached a point of crisis.’ In fact, despite recent upticks, border crossings remained at historic lows and the border was more secure than ever — though we might ask, secure for whom?

For most Americans, what happens on the border remains out of sight and out of mind. But in the immigration enforcement community, the militarization of the border has given rise to a culture imbued with the language and tactics of war.

Border agents refer to migrants as ‘criminals,’ ‘aliens,’ ‘illegals,’ ‘bodies’ or ‘toncs’ (possibly an acronym for ‘temporarily out of native country’ or ‘territory of origin not known’ — or a reference to the sound of a Maglite hitting a migrant’s skull). They are equipped with drones, helicopters, infrared cameras, radar, ground sensors and explosion-resistant vehicles. But their most deadly tool is geographic — the desert itself.

‘Prevention Through Deterrence’ came to define border enforcement in the 1990s, when the Border Patrol cracked down on migrant crossings in cities like El Paso. Walls were built, budgets ballooned and scores of new agents were hired to patrol border towns. Everywhere else, it was assumed, the hostile desert would do the dirty work of deterring crossers, away from the public eye.

Read the full article in the New York Times.

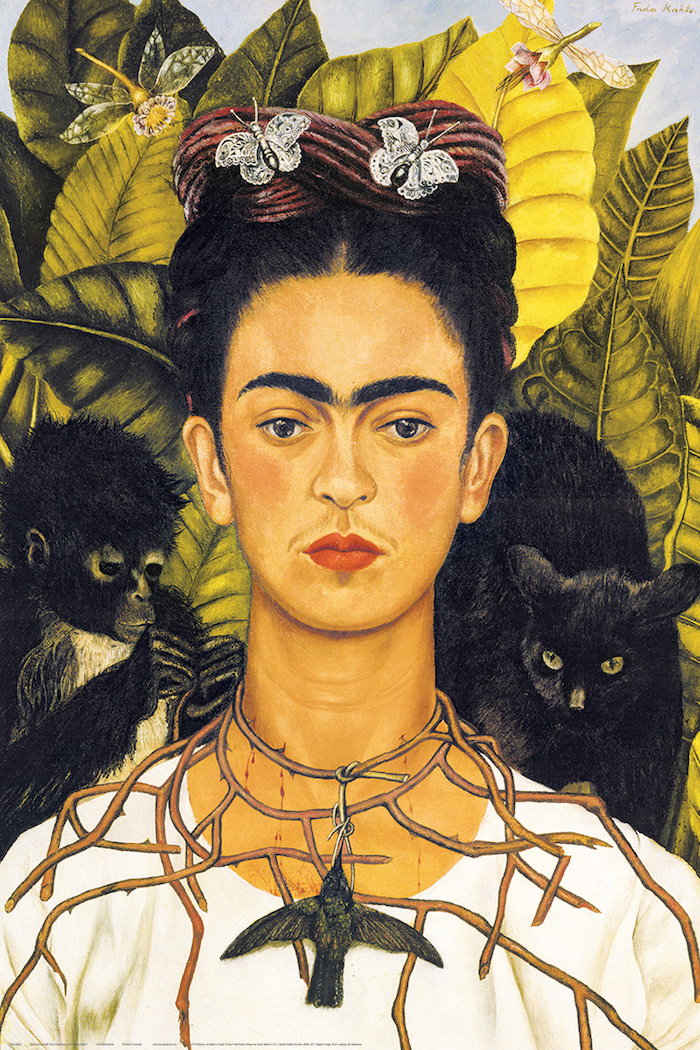

The many (marketable) faces of Frida Kahlo

Miranda France, Prospect, 13 May 2018

What makes Kahlo so marketable? Her art, characterised by gorgeous colours and enigmatic themes of injury, love, betrayal and thwarted motherhood, looks wonderful on the canvas—and transfers nicely to t-shirts. A quick internet search finds her gracing everything from trainers to sanitary towels. The famous face, gazing out of some 200 self-portraits, is both beautiful and unconventional, with thick eyebrows that join in the middle and the hint of a moustache. It’s part of the portraits’ appeal that her face always looks the same, as though worn like a mask. Kahlo described herself as la gran ocultadora—the great concealer—and there is something totemic about her portraits that speaks to an age when self-representation is both pervasive and untrustworthy. Kahlo can be what we want her to be: a feminist, wounded lover, cultural ambassador, survivor of disability, sexual adventurer. I can have my Frida; you can have yours. With the right software, that combination of beauty, defiance and colour can be scaled up or down as needed: cute Frida for emojis and children’s picture books; edgy Frida for a book on queer icons. It helps that the artist also produced a few soupy aphorisms. ‘You deserve a lover who takes away the lies and brings you hope, coffee and poetry,’ is currently playing well on Twitter.

These days Kahlo can be found in all the places we used to see Che Guevara, another Latin American communist who has shifted a fair bit of merchandise in his time. But whereas Che t-shirts and badges tend to end up on people who are at least partly sympathetic to his cause, Kahlo the communist is not popular with merchandisers, even though she was involved with the Party, one way or another, all her life. She joined the Mexican Communist Party at the age of 20. In one of her last paintings, Marxism Will Give Health to the Sick (1954), a saintly Marx passes down Das Kapital and enables Frida to throw away her crutches. Kahlo also had an affair with Leon Trotsky, three years before his assassination in Mexico, that so galvanised the old revolutionary, he wrote a nine-page letter begging her not to leave him. Her response was ‘estoy cansada del viejo’ (I’m tired of the old man).

While the orthodoxy of Kahlo’s communism is questionable, her socialist instincts and her commitment to marginalised groups were sincere and life-long. In the rush to commodification, though, ideology is easily cast aside; faces of famous communists are massified in defence of ideologies they did not espouse. So selfie queen Kim Kardashian can, without apparent irony, appear as Frida on Snapchat. Theresa May can wear a Frida bracelet at the Conservative Party conference with barely a murmur of surprise. Kahlo’s image has become so ubiquitous, so resilient to interpretation, that I can admire her monobrow en route to the threading salon, without feeling like a hypocrite or someone who’s missed the point.

Read the full article in Prospect.

Is it great to be a worker in the US?

Not compared with the rest of the developed world.

Andrew Van Dam, Washington Post, 4 July 2018

The US labor market is hot. Unemployment is at 3.8 percent, a level it’s hit only once since the 1960s, and many industries report deep labor shortages. Old theories of what’s wrong with the labor market — such as a lack of people with necessary skills — are dying fast. Earnings are beginning to pick up, and the Federal Reserve envisions a steady regimen of rate hikes.

So why does a large subset of workers continue to feel left behind? We can find some clues in a new 296-page report from the Organization for Economic Cooperation and Development (OECD), a club of advanced and advancing nations that has long been a top source for international economic data and research. Most of the figures are from 2016 or before, but they reflect underlying features of the economies analyzed that continue today.

In particular, the report shows the United States’s unemployed and at-risk workers are getting very little support from the government, and their employed peers are set back by a particularly weak collective-bargaining system.

Those factors have contributed to the United States having a higher level of income inequality and a larger share of low-income residents than almost any other advanced nation. Only Spain and Greece, whose economies have been ravaged by the euro-zone crisis, have more households earning less than half the nation’s median income — an indicator that unusually large numbers of people either are poor or close to being poor.

Joblessness may be low in the United States and employers may be hungry for new hires, but it’s also strikingly easy to lose a job here. An average of 1 in 5 employees lose or leave their jobs each year, and 23.3 percent of workers ages 15 to 64 had been in their job for a year or less in 2016 — higher than all but a handful of countries in the study.

If people are moving to better jobs, labor-market churn can be a healthy sign. But decade-old OECD research found that an unusually large amount of job turnover in the United States is due to firing and layoffs, and Labor Department figures show the rate of layoffs and firings hasn’t changed significantly since the research was conducted.

Read the full article in the Washington Post.

Why read Aristotle today?

Edith Hall, Aeon, 29 May 2018

But what did Aristotle mean by ‘happiness’ or eudaimonia? He did not believe it could be achieved by the accumulation of good things in life – including material goods, wealth, status or public recognition – but was an internal, private state of mind. Yet neither did he believe it was a continuous sequence of blissful moods, because this could be enjoyed by someone who spent all day sunbathing or feasting. For Aristotle, eudaimonia required the fulfilment of human potentialities that permanent sunbathing or feasting could not achieve. Nor did he believe that happiness is defined by the total proportion of our time spent experiencing pleasure, as did Socrates’ student Aristippus of Cyrene.

Aristippus evolved an ethical system named ‘hedonism’ (the ancient Greek for pleasure is hedone), arguing that we should aim to maximise physical and sensory enjoyment. The 18th-century utilitarian Jeremy Bentham revived hedonism in proposing that the correct basis for moral decisions and legislation was whatever would achieve the greatest happiness for the greatest number. In his manifesto An Introduction to the Principles of Morals and Legislation (1789), Bentham actually laid out an algorithm for quantitative hedonism, to measure the total pleasure quotient produced by any given action. The algorithm is often called the ‘hedonic calculus’. Bentham spelled out the variables: how intense is the pleasure? How long will it last? Is it an inevitable or only possible result of the action I am considering? How soon will it happen? Will it be productive and give rise to further pleasure? Will it guarantee no painful consequences? How many people will experience it?

Bentham’s disciple, John Stuart Mill, pointed out that such ‘quantitative hedonism’ did not distinguish human happiness from the happiness of pigs, which could be provided with incessant physical pleasures. So Mill introduced the idea that there were different levels and types of pleasure. Bodily pleasures that we share with animals, such as the pleasure we gain from eating or sex, are ‘lower’ pleasures. Mental pleasures, such as those we derive from the arts, intellectual debate or good behaviour, are ‘higher’ and more valuable. This version of hedonist philosophical theory is usually called prudential hedonism or qualitative hedonism.

There are few philosophers advocating hedonist theories today, but in the public understanding, when ‘happiness’ is not defined as the possession of a set of ‘external’ or ‘objective’ good things such as money and career success, it describes a subjective hedonistic experience – a transient state of elation. The problem with both such views, for Aristotle, is that they neglect the importance of fulfilling one’s potential. He cites approvingly the primordial Greek maxim that nobody can be called happy until he is dead: nobody wants to end up believing on his deathbed that he didn’t fulfil his potential. In her book The Top Five Regrets of the Dying (2011), the palliative nurse Bronnie Ware describes exactly the hazards that Aristotle advises us to avoid. Dying people say: ‘I wish I’d had the courage to live a life true to myself, not the life others expected of me.’ John F Kennedy summed up Aristotelian happiness thus: ‘the exercise of vital powers along lines of excellence in a life affording them scope’.

Read the full article in Aeon.

Towards the disappearance of politics?

Valeria E Bruno, Social Europe, 5 July 2018

Exactly sixty years ago, in 1958, Hannah Arendt published The Human Condition. In her philosophical masterpiece, Arendt indicated that the ‘Vita Activa’, a life actively encompassing public political debate and political action, is the only place of real freedom for citizens and sole remedy for totalitarian regimes and powerful economic oligarchies. Today, erosion of the public sphere is once more a harsh reality, as is particularly evident in the current weakness of ‘Western Democracies’.

The substantial risk of leaving democratic institutions only formally alive, but significantly lacking any content, is encouraging a deep reflexion on the reasons behind this erosion of the space for public debate and active political action. However, some frequent arguments are employed by different people (belonging to political parties, policy-makers, academia, analysts, media) to justify the impossibility of developing a comprehensive political debate within contemporary democracies. At least four main orders of arguments can be identified that are used to justify the shrinking of the Vita Activa: technical complexity, global issues, trivialization of politics and ideological taboo…

Open, pluralistic debate within democratic societies is unfortunately the first victim of the current political environment, as seen recently in the US and Italy. The limitation of the public debate by reason of technical complexity and increasingly global issues, or trivial generalization and ideological taboos, is dangerously forcing democracies’ citizens into political passivity, narrowing de facto the window of alternatives to an extreme degree, and resulting in an increased polarization of the offer of political proposals to meet citizens’ demands for public solutions.

This is the exact opposite of the Vita Activa so masterfully portrayed by Hannah Arendt: societies where public life, once politics is dismissed, merely exists in terms of labor-power and consumption.

Read the full article in Social Europe.

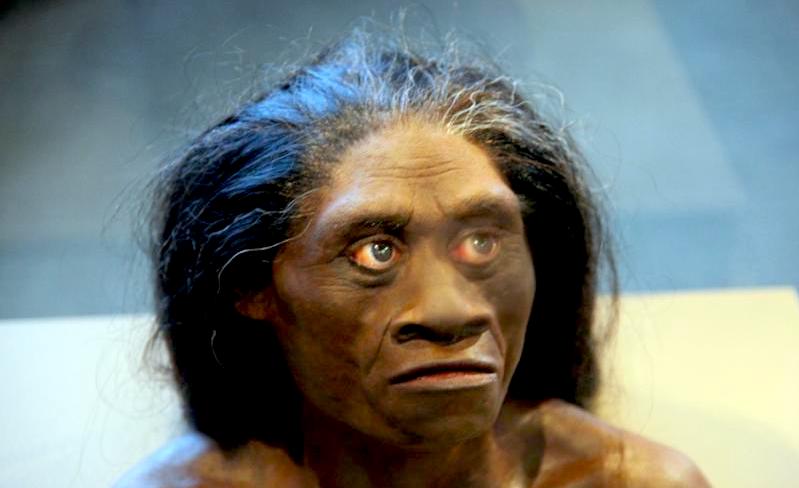

This is where scientists may find the next hobbits

John Hawks, Medium, 28 June 2018

Great archaeological detective stories start with unexpected discoveries in unusual places. In May, an international team of scientists led by Thomas Ingicco revealed new archaeological findings from Kalinga, in the northernmost part of Luzon, Philippines. Until now, scientists have mostly assumed that the Philippines were first inhabited by modern humans, only after 100,000 years ago. But the artifacts unearthed by Ingicco and coworkers were much older, more than 700,000 years old.

They didn’t find any hominin fossil skeletons, but the stone tools and the butchered remains of a rhinoceros show that somebody lived on this island long before modern people evolved in Africa.

Luzon was never connected to the Asian mainland, even when sea level was at its lowest during the Ice Ages. To get there, ancient hominins had to float. Who were they, and how did they get there?

Luzon isn’t the first deepwater island to produce such ancient evidence. In 2003, Indonesian and Australian archaeologists uncovered skeletal remains and ancient tools on the island of Flores. The bones were so strange, so primitive, that scientists named a new species, Homo floresiensis. Most people know them by their nickname, the ‘hobbits.’

The best-known fossil specimens from Flores come from Liang Bua cave, where they are between around 100,000 and 60,000 years old. Those bones include LB1, a skeleton which had a tiny brain, a small body, elongated feet and toes, and apelike wrist bones.

Scientists called her ‘Flo’, and she was like nothing they had ever seen. The discovery gave rise to debates that are still raging, 15 years later. Who were the ancestors of the hobbits, and how did they reach Flores? We still don’t know for sure, and the mystery has only deepened since 2004.

Read the full article on Medium.

Was a renowned literary theorist also a spy?

Richard Wolin, Chronicle of Higher Education, 20 June 2018

On this side of the Atlantic, Kristeva’s professions of innocence have been echoed by her biographer, Alice Jardine, a literature professor at Harvard University. Jardine told The New York Times that, ‘Nobody who knows anything about her or her work believes this.’

If that is the case, it is very likely because Kristeva’s supporters, instead of heeding the facts and circumstances of the Bulgarian intelligence dossier, remain mesmerized by her aura as a luminary of the French Theory vogue, which, during the 1980s, had a far-reaching impact on literary studies in North America. Thus in a 1996 article, the French political historian François Hourmant described the peculiar ‘recognition cum veneration’ that greeted Tel Quel’s work in the United States. François Dosse’s two-volume History of Structuralism (University of Minnesota Press, 1998) also attests to the widespread mood of uncritical adulation that surrounded Kristeva’s work at the time. His chapter recounting Kristeva’s arrival in France (an episode that, among acolytes of French Theory, would become the stuff of legend) is entitled: ‘1966: Annum Mirabile: Julia Comes to Paris!’

However, at this point, a panoply of experts has described the Bulgarian intelligence dossier as reliable and convincing. In the words of Ekaterina Boncheva — a former dissident who is now a member of the commission in charge of vetting State Security service files in accordance with lustration legislation passed in the 1990s to prevent former Communists and informants from regaining a foothold in public life — ‘In my 10 years of work on behalf of the Commission, there has never been a case of counterfeit files. … If Kristeva has a problem with the materials that have come to light, she should take it up with the DS, not with us.’

Ironically, it was Kristeva herself who unwittingly set in motion the chain of events that resulted in the embarrassing disclosure when she sought to join the editorial board of a Sofia-based literary organ, Literaturen Vestnik (‘Literary Journal’). According to the requirements of the post-Communist era lustration law, intelligence files of all persons who aspire to positions of cultural or political leadership and were born before 1976 require vetting by the seven-member Declassification Commission.

Read the full article in the Chronicle of Higher Education.

Trolley problems: You’re doing it all wrong

Justin Weinberg, Daily Nous, 2 July 2018

Please note that the value of trolley problems and the like does not depend on:

- Whether the trolley problem is realistic, that is, whether trolley systems are engineered to avoid these kinds of problems, whether one is very unlikely to encounter any trolleys in one’s life, whether one is unlikely to be confronted with a situation in which they must quickly choose between options in which different numbers of people die

- Whether what people in simulations or experiments actually choose to do in trolley-like situations matches with what they say they would do in such situations

- Which parts of people’s brains show increased activity when they choose one option or another

- The idea that what one thinks one should do in such problems tells us straightforwardly what one should do in various real life situations

But, you might ask, if trolley problems are not realistic, or predictive, or a means by which science can solve moral problems, or action-guiding, then what value do they have? Their value is in raising questions and problems for our moral thinking, such as:

- whether the numbers of people being harmed or benefited matter to what we should do, and if so, why?

- whether there is a distinction worth making between acting and not acting, and if so, whether that distinction makes a difference to the value of resultant benefits and harms, and if so, why?

- whether there is a distinction worth making between what an agent intends and what an agent merely foresees, and if so, whether that distinction makes a difference to the value of resultant benefits and harms, and if so, why?

Each of these produces more questions: what is a harm or benefit? What is it to harm or benefit? How do good and bad add up across persons? What is an action? What is an intention? What is the relationship between metaphysics and ethics? And so on.

Read the full article on the Daily Nous.

Journal trials accepting all articles

it sends for peer review

Rachael Pells, THES, 29 June 2018

An open-access bioscience journal is to commit to publishing all articles that it accepts for peer review, in a radical trial.

eLife said that it would test the approach in an experiment involving 300 papers, with the goal of giving authors greater control of the publication process.

Non-profit eLife currently invites about 30 per cent of submitted articles for full peer review and ultimately accepts about half of these at the end of this process.

Under the new process, however, each initial submission will be assessed by one or more senior editors and, once they have decided to invite it to go through peer review, eLife will be committed to publishing the paper, alongside a decision letter, the review reports, and an author response, as well as a statement from an editor on the process.

The final decision on whether to publish will lie with the author, who might choose to withdraw the paper if serious flaws are revealed during peer review.
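The arithmetic behind these figures can be sketched quickly. The snippet below takes the article's approximate numbers at face value (about 30 per cent invited, about half of those accepted); the `withdrawal_rate` parameter and the function name are hypothetical, added only to illustrate how the trial changes the calculation.

```python
from fractions import Fraction

# Approximate figures quoted in the article.
invited_share = Fraction(30, 100)       # submissions sent for full peer review
accepted_after_review = Fraction(1, 2)  # "about half" accepted after review

# Current process: a paper must be invited and then accepted.
current_overall = invited_share * accepted_after_review


def trial_overall(invited, withdrawal_rate):
    """Share of submissions published under the trial.

    withdrawal_rate is a hypothetical parameter (not a figure from the
    article): the fraction of invited papers that authors choose to
    withdraw after seeing the reviews.
    """
    return invited * (1 - withdrawal_rate)


print(f"current process: {float(current_overall):.0%} of submissions published")
print(f"trial, no withdrawals: {float(trial_overall(invited_share, Fraction(0))):.0%}")
```

If editors respond by inviting fewer papers, `invited_share` falls and the published share falls with it, which is the concern about rejection rates raised in the piece.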

The experiment is seen as a way of removing the gatekeeping role played by peer reviewers who are, in effect, rival authors.

But it has raised concerns that eLife’s rejection rate will rise even higher, if the initial decision stage becomes more selective, and that the articles identified by peer reviewers as having serious flaws could still go on to be published.

eLife, which was set up with funding from the Howard Hughes Medical Institute, the Max Planck Society and the Wellcome Trust, says that the new approach, if adopted more widely, could stop journal names being seen as proxies for the possible quality of articles. Instead, periodicals could be ‘a venue for the critical and transparent evaluation of work that is judged to be making important claims for a field’, says an article by editor-in-chief Randy Schekman and executive director Mark Patterson.

Read the full article in the THES.

The physics of glass opens a window into biology

Lisa Manning & Jordana Cepelewicz, Quanta Magazine, 11 June 2018

So how do you formulate questions about embryogenesis, about organ formation during development, through the lens of this physics problem?

What’s striking is that during development — especially during the early stages, when different layers begin to form in the embryo — cells have to flow over one another for relatively long distances. But then, during later stages of development and as an adult, an animal has to behave more like a solid to support walking and motion.

That means groups of cells have to execute a genetic program fairly regularly to go from being fluidlike, where the cells are all jumbled up and move past one another easily, to a system where they’re locked in place. Meanwhile, you can see the opposite, where fluidization of the tissue might happen, in wound healing, where cells have to move to close an injury, or in cancer, where cells have to move away from a tumor to metastasize. The instructions for all this are in the DNA, at the single-cell level. So what do single cells do to change the global mechanical properties of a whole bunch of cells at the tissue level?

The models for glass transitions have typically been based on molecules or particles, meaning that interactions depend on how far apart, say, one atom is from another. But we’re interested in confluent embryonic tissues, where ‘confluent’ means that there are no gaps or overlaps between the cells. And that means we’re not changing any of the variables we typically associate with a fluid-solid transition, like temperature or how densely the particles are packed. How do you get a fluid-solid transition in a system that doesn’t have any of these properties?

We took an existing model, called a vertex model, which imagines a tight packing of cells in two dimensions as a tiling of polygons, where each vertex moves in response to forces such as surface tension. We used this model to examine properties such as the energy barriers between physical states, or how difficult it was for a cell to move. Those properties in the tissue system showed the hallmarks of what you’d see in a typical glass transition in ordinary materials.

What insights into development have you gained from studying that transition?

We’d like to understand how organs form in development, because if they form improperly, that leads to congenital disease. One hypothesis we have is that some organs actively move through a tissue as they form. In a paper that we just posted on arxiv.org, we discovered that the drag forces — the mechanical fluidlike forces exerted on an organ as it moves — can be sufficient to change the shapes of the cells in ways that help the organ be functional. The fact that the organ is moving through a material that’s either more fluidlike or more solidlike can actually help the organ form properly and do its job. In this paper, we looked at the organ in zebra fish that organizes their left-right asymmetry and helps put, say, their heart on the correct side of the body. We’re really excited about that result, because it suggests that these material properties of the embryo can play a fine-tuned role in helping it develop properly.

Read the full article in Quanta.

In praise of (occasional) bad manners

Freya Johnston, Prospect, 18 June 2018

Thomas finds it ‘paradoxical’ that ‘the English were deeply involved in the slave trade at a time when their enthusiasm for personal liberty had never been greater.’ But mightn’t the English enthusiasm for freedom have been so marked precisely because of their involvement in the slave trade? The denial of liberty to a group of people whose value as traded commodities permitted the rise of English wealth, and therefore refinement and polished manners, would have fostered a sharp awareness of the value of freedom—whether or not those enthusiasts for liberty openly acknowledged its mirror image in the slave trade. Beyond observing that ‘Internal civility, it seemed, was wholly compatible with external barbarism,’ Thomas does not try to answer Samuel Johnson’s question about the Americans who wanted independence (which he quotes): ‘How is it that we hear the loudest yelps for liberty among the drivers of negroes?’ But this kind of rude enquiry must be absolutely central to any history of civilised life, whether we are thinking about the imposition of western values on other nations or about the more general claim that there can be, as Friedrich Nietzsche pointed out, no feast without cruelty.

Refinement is inevitably a paradox, since to the same extent that human beings can be shown to have advanced culturally and socially we can also be shown to have declined in virtue and vigour—not least because what has allowed us to advance is, among other things, the exploitation of other people. The first book of William Cowper’s great rambling poem The Task (1785) traces the British ascent to civility from the ‘hardy chief’ who took his brief repose on a ‘rugged rock’ to the modern poet, lolling about indoors on a nice comfy sofa. Cowper, a keen abolitionist, enumerates ‘the blessings of civilised life,’ to be sure, concluding that it is a desirable state. But his final view is of ‘the fatal effects of dissipation and effeminacy,’ a loss of innocence and native strength that will always accompany greater riches and sophistication.

The first appearance in print of Norbert Elias’s The Civilising Process (1939), the broadest and most influential study to date of European manners, coincided with the beginning of the Second World War, and with good reason. Elias was attempting to explain the necessary interplay between violence and civilisation, rather than the ceding of one to the other. His book gained little attention for the next three decades, however, until the first volume—on the history of manners—was translated into English. In that same year, 1969, Kenneth Clark’s lavish documentary series Civilisation first aired on BBC2 (its nine-episode sequel, Civilisations, was shown earlier this year). Elias argued that post-medieval attitudes to sex, cruelty, bodily functions, table manners and forms of speech had been gradually redefined by higher and higher thresholds of shame and repugnance, and by the increasing exercise of self-restraint in individual behaviour.

Elias’s work was criticised, as was Kenneth Clark’s, by some who thought it assumed too remorseless a model of European progress from barbarism to refinement. However, as Elias himself pointed out, he never equated western sophistication with superiority to other cultures, while the subtitle of Clark’s series, ‘A Personal View’, was intended to disclaim comprehensiveness.

Read the full article in Prospect.

Perpetual motion

Rafia Zakaria, TLS, 20 June 2018

‘What, then, shall that language be? One-half of the committee maintain that it should be the English. The other half strongly recommend the Arabic and Sanscrit. The whole question seems to me to be – which language is the best worth knowing?’ So asked Lord Macaulay of the British Parliament on February 2, 1835. He went on, of course, to answer his own question; there was no way that the natives of the subcontinent over which they now ruled could be ‘educated by means of their mother-tongue’, in which ‘there are no books on any subject that deserve to be compared to our own’. And even if there had been, it did not matter, for English ‘was pre-eminent even among languages of the West’. English, it was decided, would be the language that would be taught to the natives. By 1837, English replaced Persian as the language of courtrooms and official business in Muslim India and took with it the cultural ascendancy of the Persian speakers.

This sordid story of tainted beginnings is aptly recounted in Muneeza Shamsie’s Hybrid Tapestries: The development of Pakistani literature in English, which traces the history of an often vexed but always intriguing literary lineage from the nineteenth century until today. It is a tricky tale to tell, not least because the moment of origin is also the moment of imposition and conquest. The development of Pakistani literature is directly linked to those deposed Muslims and their cherished Persian, which adds further flavours of resentment and betrayal to the mixture. The Indian Muslims who had dominated cultural production until then felt the demotion, and hence the inauthenticity and subjugation of adopting a foreign language, more acutely; Hindus less so, perhaps because they were merely exchanging one set of conquerors for another. The bifurcation, with each group turning to a different vernacular language to anchor their evolving identity, would have more than just linguistic consequences: it would result in two separate nation states.

It is because of this history, perhaps, that Shamsie begins her story of Pakistani literature in English around 1835 rather than in 1947, when Pakistan was actually born. Shamsie’s book treads carefully through the troubled terrain to make the case that what begins badly need not be terrible in perpetuity, and can even produce happy outcomes. In 1901 came Rudyard Kipling’s Kim, whose ‘search for identity between two cultures’ would recur in South Asian literature a century later in Salman Rushdie’s Midnight’s Children, Hanif Kureishi’s Buddha of Suburbia and Kamila Shamsie’s Burnt Shadows. Other early forays were unique and prophetic; in 1905 Rokeya Sakhawat Hossain, who had taught herself English, wrote a radical feminist science fiction story entitled Sultana’s Dream. In it, Hossain conjures a world called ‘Ladyland’ where gender roles are reversed and men must stay in seclusion, but energy can be harnessed and travel through air is common.

Read the full article in the TLS.

The phrase ‘necessary and sufficient’

blamed for flawed neuroscience

Nature, 12 June 2018

In his 1946 classic essay ‘Politics and the English language’, George Orwell argued that ‘if thought corrupts language, language can also corrupt thought’. Can the same be said for science — that the misuse and misapplication of language could corrupt research? Two neuroscientists believe that it can. In an intriguing paper published in the Journal of Neurogenetics, the duo claims that muddled phrasing in biology leads to muddled thought and, worse, flawed conclusions.

The phrase in the crosshairs is ‘necessary and sufficient’. It’s a popular one: figures suggest the wording pops up in some 3,500 scientific papers each year across genetics, cell biology and neuroscience alone. It’s not a new fad: Nature’s archives show consistent use since the nineteenth century.

Used properly, the phrase indicates a specific relationship between two events. For example, the statement, ‘I’ll pay for lunch if, and only if, you pay for breakfast,’ can be written as, ‘You paying for breakfast is necessary and sufficient for me paying for lunch.’

But, argue Motojiro Yoshihara and Motoyuki Yoshihara, use of the phrase in research reports is problematic, and should be curtailed.

The logic of the term is at the heart of the dispute. It’s too often used as shorthand to mean ‘linked to’ or ‘important for’, the authors say. And this sloppy use, they argue, can lead scientists in the wrong direction, especially in genetics.

Read the full article in Nature.

How we got to be so self-absorbed: The long story

Anthony Gottlieb, New York Times, 21 June 2018

In 1986, John Vasconcellos, a somewhat tortured California state assemblyman who had attended programs at Esalen, persuaded Gov. George Deukmejian to fund a ‘task force to promote self-esteem and personal and social responsibility.’ Professors from the University of California were to study the links between self-esteem and healthy personal development. And California — nay, the world — could then design programs to nip homelessness, drug abuse and crime in the bud, by teaching people to value themselves and achieve their potential. At first, the task force was ridiculed. It was lampooned in Garry Trudeau’s ‘Doonesbury’ cartoons: The character of Barbara Ann ‘Boopsie’ Boopstein served on the task force, when not busy channeling one of her previous incarnations. Johnny Carson, The Wall Street Journal and many others joined in the fun.

All this bad publicity turned out to be useful, though. Everyone got to hear about the task force, so when the first findings of the California professors were announced in January 1989, it was big news. Newswires carried the story that impeccable academic research had found the correlations that the task force wanted: Low self-esteem was linked to social problems. Word got around that the data was in, and that those flaky Californians had been proved right.

The task force’s final report in 1990 was endorsed by Oprah Winfrey and Bill Clinton, among many others. In 1992, a Gallup Poll found that 89 percent of Americans regarded self-esteem as ‘very important’ for success in life, and schools in America and Britain were soon busy trying to instill it.

But actually the flaky Californians had not been proved right. The academic research found some correlations, but no solid evidence of causes: Alcohol abuse, for example, might cause low self-esteem rather than the other way around. Because Vasconcellos was chairman of the State Assembly’s Ways and Means Committee, he was in a position to make life difficult for the University of California. It seems that the professors responsible for the research did not want to make trouble by pointing out that their work was being misrepresented by the task force’s publicists. Perhaps those involved in the deception had too much self-esteem to be ashamed of what they had done.

There was always a dark side to the Human Potential Movement. If a positive attitude and a sense of self-worth are what matters for success, then failure is always your own fault. Storr argues that this uncompassionate edge of self-esteemery dovetails with the economic ideas of Ayn Rand and the competitive individualism of her followers in neoliberal politics. Rand’s acolyte and onetime lover, Nathaniel Branden, worked closely with the task force, and was the author of the best-selling ‘The Psychology of Self-Esteem.’ As Storr colorfully puts it, the self-esteem craze was ‘a rapturous copulation of the ideas of Ayn Rand, Esalen and the neoliberals.’

Read the full article in the New York Times.

The images are, from top down, René Magritte, ‘The False Mirror’; Frida Kahlo, ‘Self-Portrait with Thorn Necklace and Hummingbird’; Reconstruction of Homo floresiensis, photo by John Gurche, National Museum of Natural History.

Can I add something to “plucked off the web”?

From Radio 4 on Friday

“Former gang member Simeon Moore (aka Zimbo) sets out to explore, challenge and understand the complex relationship between certain urban art forms and knife violence.”

https://www.bbc.co.uk/programmes/b0b42t4p

It should raise some questions within any discussions of diversity, immigration and racism in Britain today.

A Few Thoughts on the Plight of the Working Class and the Effects of Mass Immigration: What Happened and Why it Continues to Happen.

Kenan – you and I have an ongoing interest in the plight of working men and women at this point in history, partly because it’s an age of disruption and growing inequality, triggering an angry collective backlash called populism that is destabilizing our countries and poisoning our political culture, and because we fear these developments have the potential to evolve into something much more destructive and bloody if corrective measures aren’t adopted soon. But from a personal point of view the subject of workers in distress matters a great deal to me – I’ve been a manual labourer of one sort or another all my adult life, working for a decade in the mining exploration industry in Australia, before returning to Ireland, my country of birth, in 2001 to work as a brickie’s labourer in the construction industry, where I was also a trade union shop-steward. I was at the coalface, so to speak, when the land rights debate was taking place in Australia during the 1990s, and I was in the thick of it again when over three hundred thousand foreign nationals, mainly from Eastern Europe, flocked to Ireland during the noughties to work their way through the boom. These experiences gave me a good insight into the issues of colonialism, race, and identity politics, along with the social ravages of hyper-capitalism, globalization and the displacement of a native workforce by a large and rapid influx of immigrants. It’s the latter issue I want to talk about here today, after reading the extract above by Andrew Van Dam, Washington Post, 4 July 2018: “Is it great to be a worker in the US? Not compared with the rest of the developed world.” Personally, having read many of your comments on the issue of immigration, and having agreed with much of what you say, I still believe you are mistaken in some respects about the matter. Without taking issue with anything you’ve written in particular, I’ll try to explain my concerns in the following few paragraphs.

To understand this issue properly, we have to broach five subjects: 1. The Facts. 2. Government Policy. 3. Real Time Consequences. 4. Perception. 5. Political Consequences.

1. The Facts:

Does a rapid and large influx of working immigrants drive down wages? Answer: Yes. In some instances, the wages and standards of living of those members of the native workforce who work in the sectors most affected by immigration really do decline over time. The issue should not be about whether this occurs or not; it should be about the extent of the effect and the actual identity of those disadvantaged by it. I won’t focus on the native workforce here, as I’ve touched on that matter in a previous post. But I will touch on the experience of the immigrant workforce, since it is often overlooked by commentators and statisticians.

While I was working on the Irish building sites during the boom, new immigrants, many of them illegal, arrived at the gates of Ireland’s building sites every day looking for trades and labouring work. In response to this unregulated surplus of manpower, the construction companies set up their own bogus employment agencies over the border in Northern Ireland, and employed the foreign workforce via those agencies on their sites at rates of pay that were substantially lower than those of the native workforce. By law, there should have been no distinction, which is why I intervened to put matters right. I couldn’t bear to see vulnerable lads exploited so openly and cruelly by Irish employers. Most of the lads were organized into gangs, ‘housed’ on site in shipping containers, locked in overnight, and separated from the native workforce – either by preventing them from eating in the same canteens, using the same ablution blocks, or sharing the same dry-rooms. The employers also changed their assignments and lunch hours to further prevent ‘mixing’. It was a form of working class apartheid. If the two workforces couldn’t mix, men like myself couldn’t befriend the new arrivals and find out how they were being exploited. These simple measures were surprisingly effective, as many native shop-stewards in the construction industry were ‘company men’, and preferred to ignore the plight of foreign nationals rather than rock the boat and risk undermining their own job security. These practices were not confined to Dublin in the noughties, but were typical of what was going on all over the British Isles. And they remain typical today.

The key point to keep in mind here is that as each year passed, and wave after wave of foreign nationals arrived on Irish shores, the hourly rate of the immigrant worker plummeted. In 2005, I intervened in one case in which several Polish labourers were being paid a little over a euro an hour – and that was by no means unusual. Throughout the midlands most foreign nationals were paid between four and five euros an hour. All the reports I received from other shop stewards and building industry workers, from Ireland and the UK, suggested these practices were universal. This is why I and others were motivated to act.

The employers responded to my interference in two ways – by pressurizing me to quit my job, and by ‘subcontracting’ the immigrant workforce out to another company that could only secure a contract with the builder by availing of the builder’s cheap workforce. This was how the main contractor immunized himself from any accusation that he was directly involved in exploitation, and it was also how law-abiding contractors were continually screwed out of work – they simply couldn’t underbid or compete with the construction company’s subcontracting cohorts. Either they ‘adapted’ to the reality of the situation on site, or they went under. Most adapted. They had no other choice.

2. Government Policy:

Why did most law-abiding employers have to ‘adapt’?

Because the government deliberately employed too few inspectors to police the industry properly. And because in Ireland’s case the existence of something called ‘social partnership’, whereby government agencies, employers and trade unions conspired to establish the working conditions of the native workforce, made any other response all but impossible. Here’s how it worked: construction industry heads were close to trade union heads. They had a deal – all native workers and foreign nationals, without exception, had to have their union dues paid promptly. If that was done, trade union delegates would turn a blind eye to the exploitation of foreign nationals. This arrangement produced a huge financial windfall for both parties. Thanks to the steep rise in revenue from union dues, trade union heads were receiving astronomical annual salaries (€220,000 a year for the head of my own union, with an extraordinary array of additional perks and direct access to the heads of government departments, as well as to government ministers themselves). Naturally enough, the construction companies were profiting hugely too, from a cheap and ‘flexible’ workforce.

If a government inspector ever appeared on site, the following chain of events took place: by law, he or she had to call to the site manager’s office first, where they’d be kept waiting for the 15 minutes it took the secretary to warn the site’s on-duty supervisors of the inspector’s visit. They would then enlist the help of their trade union lackeys, and together they would rapidly set about ordering men down from dangerous platforms, to cease dangerous activities, or to leave the site altogether until they received the all-clear from their ganger. The inspection would then take place, and suggestions would be made to fine-tune an apparently safe and ‘compliant’ working environment. When I began to stop this sort of ruse from happening, to permit the inspector to see what was actually going on, all hell broke loose. Not only was the inspector shitting bricks (she really didn’t want to be placed in the middle of a potentially huge and costly scandal), but both my employer and my union reps came down on me like a ton of bricks. Even the foreign nationals, who on €5 an hour were making more than five times what they’d make at home, wanted me to back off. But by August 2005 I had already appeared on national radio to talk about what was going on, and to state that it was my belief that Ireland was headed for an economic meltdown. In that event, I said, the scramble for a dwindling number of jobs in the construction industry might descend into an anti-immigrant free-for-all, something that had to be avoided at all costs. For this, I was labelled by some as an anti-immigrant scaremonger. You really couldn’t make it up.