The latest (somewhat random) collection of essays and stories from around the web that have caught my eye and are worth plucking out to be re-read.

‘The biggest monster’ is spreading. And it’s not the coronavirus.

Apoorva Mandavilli, New York Times, 3 August 2020

Until this year, TB and its deadly allies, H.I.V. and malaria, were on the run. The toll from each disease over the previous decade was at its nadir in 2018, the last year for which data are available.

Yet now, as the coronavirus pandemic spreads around the world, consuming global health resources, these perennially neglected adversaries are making a comeback.

“Covid-19 risks derailing all our efforts and taking us back to where we were 20 years ago,” said Dr. Pedro L. Alonso, the director of the World Health Organization’s global malaria program.

It’s not just that the coronavirus has diverted scientific attention from TB, H.I.V. and malaria. The lockdowns, particularly across parts of Africa, Asia and Latin America, have raised insurmountable barriers to patients who must travel to obtain diagnoses or drugs, according to interviews with more than two dozen public health officials, doctors and patients worldwide.

Fear of the coronavirus and the shuttering of clinics have kept away many patients struggling with H.I.V., TB and malaria, while restrictions on air and sea travel have severely limited delivery of medications to the hardest-hit regions.

About 80 percent of tuberculosis, H.I.V. and malaria programs worldwide have reported disruptions in services, and one in four people living with H.I.V. have reported problems with gaining access to medications, according to U.N. AIDS. Interruptions or delays in treatment may lead to drug resistance, already a formidable problem in many countries.

In India, home to about 27 percent of the world’s TB cases, diagnoses have dropped by nearly 75 percent since the pandemic began. In Russia, H.I.V. clinics have been repurposed for coronavirus testing.

Malaria season has begun in West Africa, which has 90 percent of malaria deaths in the world, but the normal strategies for prevention — distribution of insecticide-treated bed nets and spraying with pesticides — have been curtailed because of lockdowns.

According to one estimate, a three-month lockdown across different parts of the world and a gradual return to normal over 10 months could result in an additional 6.3 million cases of tuberculosis and 1.4 million deaths from it.

A six-month disruption of antiretroviral therapy may lead to more than 500,000 additional deaths from illnesses related to H.I.V., according to the W.H.O. Another model by the W.H.O. predicted that in the worst-case scenario, deaths from malaria could double to 770,000 per year.

Read the full article in the New York Times.

Mobilising against southern Africa’s ruling-party rot

Editorial, New Frame, 23 August 2020

The parties that now hold state power across southern Africa once carried the hopes of millions of people. When they were popular movements or military organisations, people of great principle and courage committed themselves to them and were willing to go to prison – and to war – in their name. They carried collective and redemptive visions.

In 1906, Pixley ka Isaka Seme, a central figure in the founding of the ANC, gave a prize-winning oration at Columbia University in New York in which he anticipated shining cities, humming with science and commerce, and that Africa “walking with that morning gleam” will “shine as thy sister lands with equal beam”.

By the late 1980s, millions of people were mobilised in the name of the ANC. But today, throughout southern Africa, most of the former liberation movements have become repressive and predatory excrescences on society. In Angola, South Africa, Zimbabwe and Mozambique, the ruling parties are a direct hindrance to any prospect of the development of societies that are viable for the majority, let alone the prospect of an emancipatory politics.

Politicians and their families accumulate vast wealth while the majority faces deepening impoverishment and what Martin Luther King called “life as a long and desolate corridor with no exit sign”. Elites inhabit a fully privatised life in terms of residence, security, education and health, while the institutions accessed by the majority are crumbling. Autonomous organisation is met with serious repression: arrest, torture, murder. There have been massacres in Angola, South Africa and Zimbabwe.

Elites in South Africa, just like the racist Right in Europe and the United States, actively incite xenophobia to scapegoat African and Asian migrants for the steady decline of society under their management. There are regular pogroms.

None of this is new. In 1961, Frantz Fanon, dying and writing with the last of his energies in a flat in Tunis, warned against the collapse into xenophobia and excoriated the ruling parties and the national bourgeoisies in the newly independent states. The party, he wrote, ought to be a “living” movement enabling the “free exchange of ideas which have been elaborated according to the real needs of the mass of the people”. Instead it had become the “modern form of the dictatorship of the bourgeoisie, unmasked, unpainted, unscrupulous and cynical”. Fanon spoke with withering contempt of a “little greedy caste, avid and voracious, with the mind of a huckster”.

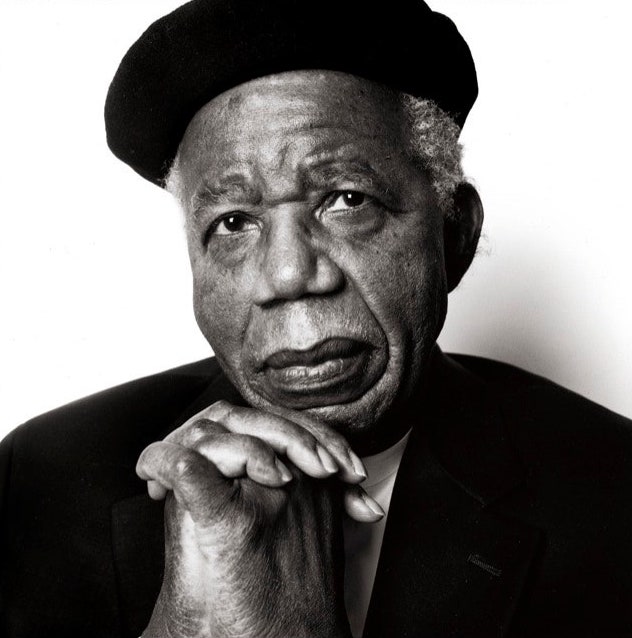

The novels that emerged out of the early experience of postcolonial disappointment are often just as uncompromising in their critique. Ayi Kwei Armah’s The Beautyful Ones Are Not Yet Born, published in 1968, is saturated with a sense of rot and decay, of stench and filth, which is both material and moral. This is counterposed to frequent references to the gleam of wealth and the clean life it seems to enable. But the novel’s chief protagonist, a railway clerk, observes: “Some of that cleanness has more rottenness in it than the slime at the bottom of a garbage dump.”

Read the full article in New Frame.

The death of Hannah Fizer

Adam Rothman & Barbara J Fields, Dissent, 24 July 2020

Hannah Fizer was driving to work at a convenience store in Sedalia, Missouri, late on a Saturday night in June when a police officer pulled her over for running a red light. According to police reports, Fizer was “non-compliant” and threatened to shoot the officer, so the officer shot and killed her. In fact, Fizer did not have a gun and, now that she is dead, cannot tell her side of the story. Her friends and family want answers, and they want justice. “She was a beautiful person,” a coworker recalled.

Amid widespread protests against police killings of black people, it seems a familiar story: an unarmed person smart-mouths a police officer and dies for it. But Hannah Fizer was white. That should not surprise anyone. According to a database of police shootings in the United States since 2015, half of those shot dead by police—and four of every ten who were unarmed—have been white. People in poor neighborhoods are a lot more likely to be killed by police than people in rich neighborhoods. Living for the most part in poor or working-class neighborhoods as well as subject to a racist double-standard, black people suffer disproportionately from police violence. But white skin does not provide immunity.

Nor does white skin provide immunity against police clad in riot gear and armed with military-grade weapons violating freedom of speech, assembly, and worship. Just ask Martin Gugino, the seventy-five-year-old man who spent a month in the hospital with a fractured skull after he was knocked down by police in Buffalo, and received death threats as a reward. Or ask white clergy and others beset by tear gas and military helicopters to clear space for a photo-op for President Trump during the “Battle of Lafayette Square” in early June. Militarized attacks on unarmed, peaceful protesters have taught thousands of previously uninvolved Americans that they, too, have a stake in curbing the excessive use of force by the police…

those seeking genuine democracy must fight like hell to convince white Americans that what is good for black people is also good for them. Reining in murderous police, investing in schools rather than prisons, providing universal healthcare (including drug treatment and rehabilitation for addicts in the rural heartland), raising taxes on the rich, and ending foolish wars are policies that would benefit a solid majority of the American people. Such an agenda could be the basis for a successful political coalition rooted in the real conditions of American life, which were disastrous before the pandemic and are now catastrophic.

Attacking “white privilege” will never build such a coalition. In the first place, those who hope for democracy should never accept the term “privilege” to mean “not subject to a racist double standard.” That is not a privilege. It is a right that belongs to every human being. Moreover, white working people—Hannah Fizer, for example—are not privileged. In fact, they are struggling and suffering in the maw of a callous trickle-up society whose obscene levels of inequality the pandemic is likely to increase. The recent decline in life expectancy among white Americans, which the economists Anne Case and Angus Deaton attribute to “deaths of despair,” is a case in point. The rhetoric of white privilege mocks the problem, while alienating people who might be persuaded.

Read the full article in Dissent.

The mythology of Karen

Helen Lewis, Atlantic, 19 August 2020

What does it mean to call a woman a “Karen”? The origins of any meme are hard to pin down, and this one has spread with the same intensity as the coronavirus, and often in parallel with it. Karens are “the policewomen of all human behavior.” Karens don’t believe in vaccines. Karens have short hair. Karens are selfish. Confusingly, Karens are both the kind of petty enforcers who patrol other people’s failures at social distancing, and the kind of entitled women who refuse to wear a mask because it’s a “muzzle.”

Oh, and Karens are most definitely white. Let that ease your conscience if you were beginning to wonder whether the meme was, perhaps, a little bit sexist in identifying various universal negative behaviors and attributing them exclusively to women. “Because Karen is white, she faces few meaningful repercussions,” wrote Robin Abcarian in the Los Angeles Times. “Embarrassing videos posted on social media is usually as bad as it gets for Karen.”

Sorry, but no. You can’t control a word, or an idea, once it’s been released into the wild. Epithets linked to women have a habit of becoming sexist insults; we don’t tend to describe men as bossy, ditzy, or nasty. They’re not called mean girls or prima donnas or drama queens, even when they totally are. And so Karen has followed the trajectory of dozens of words before it, becoming a cloak for casual sexism as well as a method of criticizing the perceived faux vulnerability of white women.

To understand why the Karen debate has been so fierce and emotive, you need to understand the two separate (and opposing) traditions on which it draws: anti-racism and sexism. You also need to understand the challenge that white women as a group pose to modern activist culture. When so many online debates involve mentally awarding “privilege points” to each side of an encounter or argument to adjudicate who holds the most power, the confusing status of white women jams the signal. Are they the oppressors or the oppressed? Worse than that, what if they are using their apparent disadvantage – being a woman – as a weapon?

One phrase above all has come to encapsulate the essence of a Karen: She is the kind of woman who asks to speak to the manager. In doing so, Karen is causing trouble for others. It is taken as read that her complaint is bogus, or at least disproportionate to the vigor with which she pursues it. The target of Karen’s entitled anger is typically presumed to be a racial minority or a working-class person, and so she is executing a covert maneuver: using her white femininity to present herself as a victim, when she is really the aggressor.

Read the full article in the Atlantic.

Two decades of pandemic war games failed to account for Donald Trump

Amy Maxmen & Jeff Tollefson, Nature, 4 August 2020

While the CDC scrambled to fix the faulty tests, labs lobbied for FDA authorization to use tests that they had been developing. Some finally obtained the green light on 29 February, but without coordination at the federal level, testing remained disorganized and limited. And despite calls from the WHO to implement contact tracing, many city health departments ditched the effort, and the US government did not offer a national plan. Beth Cameron, a biosecurity expert at the Nuclear Threat Initiative in Washington DC, which focuses on national-security issues, says that coordination could have been aided by a White House office responsible for pandemic preparedness. Cameron had led such a group during Barack Obama’s presidency, but Trump dismantled it in 2018.

In March, the CDC stopped giving press briefings and saw its role diminished as the Trump administration reassured the public that the coronavirus wasn’t as bad as public-health experts were saying. An editorial in The Washington Post in July by four former CDC directors, including Frieden, described how the Trump administration had silenced the agency, revised its guidelines and undermined its authority in trying to handle the pandemic. Trump has also questioned the judgement of Anthony Fauci, director of the National Institute of Allergy and Infectious Diseases and a leading scientist on the White House Coronavirus Task Force.

Confusion emerged in most pandemic simulations, but none explored the consequences of a White House sidelining its own public-health agency. Perhaps they should have, suggests a scientist who has worked in the US public-health system for decades and asked to remain anonymous because they did not have permission to speak to the press. “You need gas in the engine and the brakes to work, but if the driver doesn’t want to use the car, you’re not going anywhere,” the scientist says.

By contrast, New Zealand, Taiwan and South Korea showed that it was possible to contain the virus, says Scott Dowell, an infectious-disease specialist at the Gates Foundation who spent 21 years at the CDC and has participated in several simulations. The places that have done well with COVID-19 had “early, decisive action by their government leaders” he says. Cameron agrees: “It’s not that the US doesn’t have the right tools — it’s that we aren’t choosing to use them.”

Read the full article in Nature.

Racial categories are reactionary

Ralph Leonard, Unherd, 14 August 2020

The real problem with uppercasing racial categories is that — like many of the symbolic actions that have followed this ‘racial awakening’, from toppling statues to woke rebranding by corporations — it creates the illusion that wide-ranging change is ‘finally’ happening. But real progress will only occur when the material conditions of black Americans have improved, and when laws and institutional practices that empower police to brutalise citizens have been overturned. In other words, the prize is not symbolic concession, but radical social transformation. The former is easy and superficial; the latter is hard and substantive.

The growing influence of identity politics and racial essentialism in the media, academia and other mainstream institutions is all in the name of equality and diversity. Nevertheless, it is a way of thinking that permanently categorises human beings, putting them into rigid racial, ethnic and cultural boxes. Race isn’t regarded as a social construct that can be explained and analysed historically, but as an omnipresent state of being which we must ‘come to terms with’. Ironically this undermines the lived experience of belonging to a diverse society with all of its messy, complicated and very human dynamics.

Under the identitarian worldview, America is portrayed as a mosaic of different volks (or ‘races’) each existing in their own unique universe with particular histories, values and volkgeist, which must be ‘respected’ and not transgressed against. It leaves little room for overarching common identities like nationality that can go beyond racial and ethnic affiliations. Is it any surprise that numerous controversies over so-called ‘cultural appropriation’ have become mainstream political issues in the past few years? Once you enter into the cycle of essentialising human beings through race and identity, crackpot racialist ideas inevitably become legitimised.

One of the most banal and vulgar ways to think about humanity is to classify and categorise by ‘race’, and especially by skin pigmentation. Racial thinking, no matter how ‘progressively’ arrived at, can only be reactionary. It is irrational, anti-scientific and anti-humanist. It is a fetter on the social development of human beings and their flourishing. Racialism and racism are twin brothers. Solidifying racial categories in mainstream discourse is a grave mistake. Real progress should mean challenging racial thinking at its root and ultimately transcending it.

Read the full article in Unherd.

Taking hard line, Greece turns back migrants by abandoning them at sea

Patrick Kingsley & Karam Shoumali, New York Times, 14 August 2020

The Greek government has secretly expelled more than 1,000 refugees from Europe’s borders in recent months, sailing many of them to the edge of Greek territorial waters and then abandoning them in inflatable and sometimes overburdened life rafts.

Since March, at least 1,072 asylum seekers have been dropped at sea by Greek officials in at least 31 separate expulsions, according to an analysis of evidence by The New York Times from three independent watchdogs, two academic researchers and the Turkish Coast Guard. The Times interviewed survivors from five of those episodes and reviewed photographic or video evidence from all 31.

“It was very inhumane,” said Najma al-Khatib, a 50-year-old Syrian teacher, who says masked Greek officials took her and 22 others, including two babies, under cover of darkness from a detention center on the island of Rhodes on July 26 and abandoned them in a rudderless, motorless life raft before they were rescued by the Turkish Coast Guard.

“I left Syria for fear of bombing — but when this happened, I wished I’d died under a bomb,” she told The Times.

Illegal under international law, the expulsions are the most direct and sustained attempt by a European country to block maritime migration using its own forces since the height of the migration crisis in 2015, when Greece was the main thoroughfare for migrants and refugees seeking to enter Europe.

The Greek government denied any illegality.

“Greek authorities do not engage in clandestine activities,” said a government spokesman, Stelios Petsas. “Greece has a proven track record when it comes to observing international law, conventions and protocols. This includes the treatment of refugees and migrants.”

Since 2015, European countries like Greece and Italy have mainly relied on proxies, like the Turkish and Libyan governments, to head off maritime migration. What is different now is that the Greek government is increasingly taking matters into its own hands, watchdog groups and researchers say.

For example, migrants have been forced onto sometimes leaky life rafts and left to drift at the border between Turkish and Greek waters, while others have been left to drift in their own boats after Greek officials disabled their engines.

“These pushbacks are totally illegal in all their aspects, in international law and in European law,” said Prof. François Crépeau, an expert on international law and a former United Nations special rapporteur on the human rights of migrants.

“It is a human rights and humanitarian disaster,” Professor Crépeau added.

Read the full article in the New York Times.

On ‘black antisemitism’ and antiracist solidarity

Michael Richmond, New Socialist, 30 July 2020

As a Jew, I have faced antisemitism. My ancestors faced more. The emblematic, representative figure of an antisemite, both historically as well as the majority that I’ve encountered, have not tended to look like Wiley. And yet a boycott and a thousand headlines have been launched for this. Not for when the Daily Telegraph put antisemitic conspiracy theory on its front page. Not when Conservative politicians like Suella Braverman and Jacob Rees-Mogg have barked and whistled about “Cultural Marxism” and George Soros. And certainly not for the countless racists, many of them with verified blue ticks, up to and including the President of the United States, who have had free rein on the platform for years to launch bigoted invectives and incite violence against Black people, trans people, Muslims, immigrants, Jews, Mexican people and more.

Recent stories, and much longer-run patterns of discourse, have seen many people, including some Jews, attempting to “ethnicise” antisemitism. To construct it as a peculiarly “Black problem” or “Muslim Problem.” This is not a new phenomenon but it does seem to be gaining ground in the current moment – not coincidentally amidst the largest global Black liberation struggle in several generations. The thinker Adolph Reed has aptly noted in his essay “What Colour is Anti-Semitism” that,

“Anti-semitism is a form of racism, and it is indefensible and dangerous wherever it occurs. What doesn’t exist is Blackantisemitism, the equivalent of a German compound word, a particular – and particularly virulent – strain of anti-Semitism. Black anti-Semites are no better or worse than white or other anti-Semites, and they are neither more nor less representative of the ‘black community’ or ‘black America’.”

Part of the specification of a particularised “Black antisemitism” seems to be connected to the seeming relish with which many white people, including some white Jews, see instances such as Wiley’s outburst as an opportunity to call Black people “racist.” There is a tendency to impose collective responsibility on Black or Muslim “communities” for instances of antisemitism (and for many other things) in ways that Neo-Nazis like David Duke or Nick Griffin (both of whom, incidentally, are not banned from Twitter) are never held to be representative of white, English or American culture. Except, of course, that is what they are. The discourses and violence that make up the long history of antisemitism are far more organic to the cultures of white, Christian Europe and its settler colonies than anywhere else. Attempts to ethnicise antisemitism today are regularly mobilised, often by non-Jews, as a means to derail and discredit justice and liberation movements and their demands. This comes with its own grim irony, given that justice and liberation movements aim at the undoing of precisely these cultures of white, Christian Europe that were formative for and continue to reproduce antisemitism.

Read the full article in New Socialist.

Beyond the end of history

Daniel Steinmetz-Jenkins, Chronicle of Higher Education, 14 August 2020

Little wonder, then, that the press has been awash in historical analogies trying to make sense of things. The new watch guards of fascism, like the Holocaust historian Timothy Snyder, the journalist Anne Applebaum, and the Yale philosopher Jason Stanley, make recourse to Europe’s fascist past of the 1930s to explain the contemporary political right. Their critics have been quick to claim that their reading of history is rather politicized, and that their appeal to historical analogies obfuscates, rather than clarifies, the complexity of current events.

The coronavirus crisis is another case in point. Some scholars have offered historical analogies to the war economies of World Wars I and II, while others have insisted that looking to past wars for inspiration could mislead us. In the words of one historian, “Wars lead us to look for enemies and scapegoats, war solutions are directed from the top rather than resourced from local communities.” Others still, most prominently the economic historian Adam Tooze, have stressed the historical uniqueness of the pandemic.

With the eruption of the George Floyd protests, pundits and scholars such as Niall Ferguson, David Frum, and Max Boot have suggested similarities with the May 1968 protests, even as other scholars have pointed out the shortcomings of this historical comparison.

Scholars and nonscholars alike are struggling to make sense of what is happening today. The public is turning to the past — through popular podcasts, newspapers, television, trade books and documentaries — to understand the blooming buzzing confusion of the present. Historians are being called upon by their students and eager general audiences trying to come to grips with a world again made strange.

But they face an obstacle. The Anglo-American history profession’s cardinal sin has been so-called “presentism,” the illicit projection of present values onto the past. In the words of the Cambridge University historian Alexandra Walsham, “presentism … remains one of the yardsticks against which we continue to define what we do as historians.”

It is difficult to grasp the force of the prohibition on “presentism” without understanding the political backdrop against which it developed: the Cold War and the liberal internationalism endorsed by most Anglo-American historians. The profession’s current anxiety over presentism is a legacy of the Cold War university, which sought to resist the radicalism of a new generation of historians emerging in the 1950s and ’60s, as well as push back against the postmodern turn of the 1970s. This inherited resistance inhibits a more thoughtful engagement with our current crises. We have been left strangely ill-equipped to confront history’s return.

Read the full article in the Chronicle of Higher Education.

How OfQual failed the algorithm test

Timandra Harkness, Unherd, 18 August 2020

That’s the embarrassing truth about algorithms. They are prejudice engines. Whenever an algorithm turns data from the past into a model, and projects that model into the future to be used for prediction, it is working on a number of assumptions. One of the more basic assumptions is that the future will look like the past and the present, in significant ways.

You may think you can beat the odds stacked against you by your low-attaining school, and your lack of extra-curricular extras, and your having to do homework perched on your bed in a shared bedroom, but the algorithm thinks otherwise. Isn’t it strange that we are repelled by prejudice in other contexts, but accept it when it’s automated?

Until now. Now school students shouting “Fuck The Algorithm” have forced a Government U-turn. Some of them seem to think the whole business was an elaborate ploy to punish the poor, instead of a clumsy attempt at automated fairness on a population scale. But some of them must be wondering what other algorithms are ignoring their human agency and excluding them from options in life because of what others did before them: Car insurance? Job adverts? Dating apps? Mortgage offers?

Some disgruntled Further Maths students will no doubt go on to write better algorithms, but that won’t solve the problem. As the RSS wrote to the Office for Statistics Regulation, “‘Fairness’ is not a statistical concept. Different and reasonable people will have different judgments about what is ‘fair’, both in general and about this particular issue.”

You don’t need Maths, or Further Maths, or even a 2:2 in Maths and Statistics, to question what assumptions are being designed into mathematical models that will affect your chances in life. Anyone can argue for their idea of what fairness means. Algorithms, and what we let them decide, are too important to be left to statisticians.

Read the full article in Unherd.

Isabel Wilkerson’s world historical theory of race and caste

Sunil Khilnani, New Yorker, 7 August 2020

Writing with calm and penetrating authority, Wilkerson discusses three caste hierarchies in world history—those of India, America, and Nazi Germany—and excavates the shared principles “burrowed deep within the culture and subconsciousness” of each. She identifies several “pillars” of caste, including inherited rank, taboos related to notions of purity and pollution, the enforcement of hierarchies through terror and violence, and divine sanction of superiority. (The American equivalent to the Laws of Manu is, of course, the Old Testament.) In Wilkerson’s first book, “The Warmth of Other Suns,” which documented the Great Migration of American Blacks in the twentieth century, she wrote about past lives with finer precision and texture than most professional historians have done. So she must have considered the risks involved in compressing into a single frame India’s roughly three-thousand-year-old caste structure, America’s four-hundred-year-old racial hierarchies, and the Third Reich’s twelve-year enforcement of Aryanism. Even on her home terrain, where she focusses on what she calls the “poles of the American caste system,” Blacks and whites, her analysis sometimes seems more ahistorical than transhistorical, as temporal specificities collapse into an eternal present. But this effect is consonant with the view of history she presents in her book—one involving more grim continuity than hopeful departures, more regression to the mean than moments of progress…

Mustering old and new historical scholarship, sometimes to shattering effect, “Caste” brings out how systematically, through the centuries, Black lives were destroyed “under the terror of people who had absolute power over their bodies and their very breath.” In considering the present, though, she often focusses on questions of dignity. Many scenes involve whites failing to recognize the status of successful Blacks—like the white man, having recently moved into a wealthy suburb, who mistakes his elegant Black neighbor for the woman who picks up his laundry. As for how caste dynamics affect those Black Americans who really do pick up the laundry—or shell the shrimp, or clean the motel rooms—Wilkerson has little to say. At one point, she implies that poor people of color are in some ways more fortunate than wealthier ones, because they have fewer stress-related health problems. She surmises that this has to do with low-income people of color getting less white pushback. But the claim isn’t supported by most recent research, and she doesn’t mention the significant diagnostic gap created by unequal access to health care. Considerations of material resources, in her analysis, can disappear in the shadow of status.

Applying a single abstraction to multiple realities inevitably creates friction—sometimes productive, sometimes not. In the book’s comparison of the Third Reich to India and America, for example, a rather jarring distinction is set aside: the final objective of Nazi ideology was to eliminate Jewish people, not just to subordinate them. While American whites and Indian upper castes exploited Blacks and Dalits to do their menial labor, the Nazis came to see no functional role for Jews. In Nazi propaganda, Jews weren’t backward, bestial, natural-born toilers; they were cunning arch-manipulators of historical events. (When Goebbels and other Nazis reviled “extreme Jewish intellectualism” and claimed that Jews had helped orchestrate Germany’s defeat in the Great War, they were insisting on Jewish iniquity, not occupational incapacity.) The violence exercised against Dalits in India and Black people in America provides an ill-fitting template for eliminationist anti-Semitism.

Read the full article in the New Yorker.

Racist research must be named, but often allowed

Elizabeth Harman, Daily Princetonian, 27 July 2020

Let’s start with an obvious truth: if research is racist, then it is immoral. Proponents of the committee might make this claim: any research that is immoral constitutes research misconduct, and thus warrants punishment by the University. As a moral philosopher and an academic, I think it is important to see that this claim is false. Research misconduct is one kind of immorality. But there are many kinds of immorality — and there are many ways that research can be immoral, although it should still be protected by academic freedom. Consider the ethics of abortion (one of the topics of my own research). In my view, most opposition to abortion can be correctly described as “sexist.” If I’m right, does that mean that essays that argue that abortion is morally wrong should be subject to investigation and punishment? It does not. Similarly, research might be immoral in other ways — indeed, it might be racist — without constituting the kind of research misconduct that warrants investigation or punishment. For example, philosophical arguments against affirmative action might be correctly described as “racist” without it being appropriate to investigate or punish those who make those arguments. For another example, arguments against the legalization of same-sex marriage might be correctly called “homophobic”; they might correctly be said to constitute “bigotry.” But as appalled as I am by such arguments, I would never support any investigation or punishment of a colleague for making these arguments.

Why should academic freedom protect immoral research? I will offer two reasons.

We need academics to investigate the serious, pressing moral questions of the human condition and of our particular moment. For many of these questions, we have not figured out the right answers. We need people to argue on different sides of important questions so that we can make progress. Even when some of us have figured out the right answers, we need those who disagree to put forward their best arguments so that we can try to communicate and make progress together. For these two reasons — to pursue the right answers for ourselves, and to communicate with those caught in the grip of wrong answers — we need some people to articulate and argue for false moral views. But research that argues for false moral views may ultimately be correctly seen as itself immoral.

Read the full article in the Daily Princetonian.

African literature is a country

Lily Saint & Bhakti Shringarpure, Africa is a Country, 8 August 2020

African literary studies today is a site of deep paradox. On one hand, the last two decades have seen astonishing growth for African literature in the global North and South, evidenced by lucrative publishing deals; new prizes and grants; literature festivals; the establishment of many new presses and imprints; and an increase in blogs and platforms that disseminate and discuss these developments. On the other hand, African literature continues to exist on the margins of the academic mainstream and is also underrepresented within larger reading publics.

For example, in a recent attempt to “create a fully digitized corpus of 20th-century fiction” Achebe’s Things Fall Apart was the only African novel repeatedly mentioned. African literatures are usually classified and taught within a continental framework—as in the category of “African literature”—a geographical term that implicitly disregards the myriad regional, national, cultural, and economic differences in a continent comprised of fifty-four countries. Indeed the colonial invention of a composite and singular “Africa” remains as entrenched in academic institutions as it is in the global imaginary. That’s how we came to survey instructors of African literature at the university level to find out which works and writers are most regularly taught in their courses.

We sent out emails to over 250 academics, a majority of whom were listed as members of the African Literature Association in addition to several others drawn from our own professional networks. One-hundred and five individuals replied, mainly residents in the US or Europe, and a number of Africa-based professors also contributed to the study…

Though it was expected that Nigerian and South African texts would dominate the field, we did not anticipate how extreme this would be. Most countries on the continent are represented on curricula by fewer than five individual texts, whereas 62 Nigerian literary works and a whopping 106 South African are regularly taught.

When it comes to languages, English and French are the source languages of the majority of texts, though a smattering of African languages taught in translation are represented by Acoli, Afrikaans, Arabic, Tigrinya, Xhosa, and Yoruba literary works. A fair number of works written in Spanish and Portuguese are also regularly taught.

Read the full article in Africa is a Country.

The problem with the ‘Judeo-Christian tradition’

James Loeffler, Atlantic, 1 August 2020

The “Judeo-Christian tradition” was one of 20th-century America’s greatest political inventions. An ecumenical marketing meme for combatting godless communism, the catchphrase long did the work of animating American conservatives in the Cold War battle. For a brief time, canny liberals also embraced the phrase as a rhetorical pathway of inclusion into postwar American democracy for Jews, Catholics, and Black Americans. In a world divided by totalitarianism abroad and racial segregation at home, the notion of a shared American religious heritage promised racial healing and national unity.

Yet the “Judeo-Christian tradition” excluded not only Muslims, Native Americans, and other non-Western religious communities, but also atheists and secularists of all persuasions. American Jews themselves were reluctant adopters. After centuries of Christian anti-Semitic persecution and philo-Semitic fantasies of Jewish conversion, many eyed the award of an honorary hyphen with suspicion. Even some anti-communist politicians themselves recognized the concept as ill-suited to America’s postwar quest for global primacy in a decolonizing world.

The mythical “Judeo-Christian tradition,” then, proved an unstable foundation on which to build a common American identity. Today, as American democracy once again grasps for root metaphors with which to confront our country’s diversity and its place in the world, the term’s recuperation should rightfully alarm us: It has always divided Americans far more than it has united them.

Although the Jewish and Christian traditions stretch back side by side to antiquity, the phrase Judeo-Christian is a remarkably recent creation. In Imagining Judeo-Christian America: Religion, Secularism, and the Redefinition of Democracy, the historian K. Healan Gaston marshals an impressive array of sources to provide us with an account of the modern genesis of Judeo-Christian and its growing status as a “linguistic battlefield” on which conservatives and liberals proffered competing notions of America and its place in the world from the 1930s to the present.

Before the 20th century, the notion of a “Judeo-Christian” tradition was virtually unthinkable, because Christianity viewed itself as the successor to an inferior, superseded Jewish faith, along with other inferior creeds… Religious freedom meant freedom for Christians. Jews might be accommodated, though not necessarily with full equality, on a temporary basis until their eventual conversion.

Read the full article in the Atlantic.

British spy’s account sheds light on role in 1953 Iranian coup

Julian Borger, Guardian, 17 August 2020

A first-hand account of Britain’s role in the 1953 coup that overthrew the elected prime minister of Iran and restored the shah to power has been published for the first time.

The account by the MI6 officer who ran the operation describes how it took British intelligence years to persuade the US to take part in the coup. Meanwhile, MI6 recruited agents and bribed members of Iran’s parliament with banknotes transported in biscuit tins.

Together, MI6 and the CIA even recruited Shah Reza Pahlavi’s sister in an effort to persuade the reluctant monarch to back the coup to overthrow Mohammad Mossadegh.

“The plan would have involved seizure of key points in the city by what units we thought were loyal to the shah … seizure of the radio station etc … the classical plan,” recalled Norman Darbyshire, the head of MI6’s Persia station in Cyprus at the time of the coup.

Britain’s role in a pivotal moment in Iran’s history left an enduring mark on Iranian perceptions of Britain, but details of the role of its spies have remained obscure.

Darbyshire gave his version of events in an off-record interview with the makers of Granada TV’s 1985 film End of Empire: Iran. He refused to appear on camera, so the interview was not used directly in the programme.

The transcript was forgotten until it was rediscovered in the course of research for a new documentary, Coup 53, due to be released on Wednesday, the 67th anniversary of the coup. The role of Darbyshire, who died in 1993, will be dramatised by Ralph Fiennes.

Taghi Amirani, the director of Coup 53, said: “Even though it has been an open secret for decades, the UK government has not officially admitted its fundamental role in the coup. Finding the Darbyshire transcript is like finding the smoking gun. It is a historic discovery.”

The typewritten transcript was published on Monday morning by the National Security Archive at George Washington University in the US.

Read the full article in the Guardian.

A new intelligentsia is pushing back against wokeness

Batya Ungar-Sargon, Forward, 20 July 2020

William Lloyd Garrison, one of the United States’ most important abolitionists, lived with a bounty on his head for much of his life. His newspaper, The Liberator, advocated for an immediate end to slavery, and he faced down a lynch mob more than once for his writing. One of his avid readers was a formerly enslaved person who went by the name of Frederick Douglass. “His paper took its place with me next to the bible,” Douglass wrote of The Liberator in his memoir.

The two met at an abolitionist meeting in 1841 after Douglass stood up and described to the white crowd what it was like to live as someone else’s property. It was a powerful address, though Douglass, only three years removed from slavery, was so nervous he later couldn’t remember what he had said. Garrison became his mentor, retaining Douglass as a representative of the Anti-Slavery Society, publishing Douglass’s work, encouraging his book and sending him around the country to speak about the evils of slavery.

But the two had a bitter falling out in 1847 over the United States Constitution. Garrison believed that the document was pro-slavery, “the formal expression of a corrupt bargain made at the founding of the country and that it was designed to protect slavery as a permanent feature of American life,” writes Christopher B. Daly in “Covering America: A Narrative History of a Nation’s Journalism.”

Douglass initially agreed. But by the time he published “My Bondage and My Freedom,” he had reconsidered, and believed that “the constitution of the United States not only contained no guarantees in favor of slavery, but, on the contrary, it is, in its letter and spirit, an anti-slavery instrument, demanding the abolition of slavery as a condition of its own existence, as the supreme law of the land.”

By then Douglass had his own newspaper, The North Star, and he began to advocate for political tactics to end slavery, something Garrison could not abide. In 1851, Garrison withdrew the American Anti-Slavery Society’s endorsement from Douglass’s paper. And then he went further: He denounced Douglass from the pages of The Liberator and did not speak to him for 20 years.

A white abolitionist tried to cancel Frederick Douglass, a formerly enslaved person, for disagreeing about whether America could ever free itself from racism.

Today we are having a new national debate about whether the United States is redeemable, about the nature of its founding figures and documents – even the date of its founding – and what to do with those who dissent. But one side is winning. Since George Floyd’s horrifying murder, an anti-racist discourse that insists on the primacy of race is swiftly becoming the norm in newsrooms and corporate boardrooms across America. But as in Douglass’s day, the sides are not clearly divided along racial lines. A small group of Black intellectuals are leading a counter-culture against the newly hegemonic wokeness.

Read the full article in Forward.

Part of a larger battle: A conversation with Thomas Chatterton Williams

Otis Houston & Thomas Chatterton Williams, LA Review of Books, 16 July 2020

White liberals have been quick to recognize our complicity in George Floyd’s death, but were we bothered to be complicit in his life? That is, facing the abstract notion of racial guilt is easier than addressing Floyd’s poverty would have been. I also see this thinking reflected in the now-common corporate statements, which seem to express contrition rather than actual concern for the lives of black people. Many of them seem to be speaking mainly to a white audience, and the implicit message seems to be, “We all have to examine our privilege in the name of racial justice, but nobody’s asking you to give up Netflix.”

The whole class-based revolution that Sanders was trying to inspire was tough and challenging, so the Democratic establishment closed ranks and killed his candidacy.

Now, all of the Democratic leadership can kneel in kente cloth, and even Mitt Romney has marched with Black Lives Matter. It’s certainly not wrong for Romney and the others to start to say that they care about black men being killed by the police. But the point I tried to make in my Guardian piece a couple of weeks ago is that George Floyd was not just a black man who was killed by the police; he was a poor black man who was killed by the police. The incident that had initiated his contact with law enforcement was that he had lost his job during the pandemic and was passing a counterfeit bank note. This was not just a function of his race; it was a function of his economic condition.

We do now have a movement that I am very hopeful about, which is a movement to challenge and rein in and hopefully reform an extraordinarily abusive policing culture. But what is also starting to happen — and it began very early on — is that we’re getting a corporate-sanctioned effort to diversify certain elite spaces. So, you’ll get the Poetry Foundation that will replace its board, or the National Book Critics Circle, or certain university spaces, or, as you said, Netflix will make some gestures. And we’ll probably get some more black-created content, and we’re certainly getting more diverse op-ed pages. But what does that have to do with a man who is so poor that he’s passing a fake bank note? And he’s being killed just as 500 mostly poor white people get killed every year by police, just as many Latinos and Native Americans are killed. And Native Americans are, in fact, the group with the highest proportion of fatal encounters with the police, and we rarely talk about that.

What happens to black people is very much a part of our nation’s history, but we have a broader problem with police violence. And police basically kill poor people — they break people of all colors. So, what the Douthat column was saying, and what I think really gets to the heart of the matter, is that Sanders’s proposals were much more challenging to the status quo than adding some seats at the table for “BIPOC faces.”

Read the full article in the LA Review of Books.

The inheritance of loss progression

Anuradha Bhasin Jamwal, Caravan, 5 August 2020

On 5 August last year, the people of the entire state, irrespective of the competitive politics and aspirations of the different identities, were united in their surprise as their world turned upside down. But they were disunited in the ways that they received and absorbed the news of the upheaval—in horror or with joy. Jammu, where I am based, seemed a small privileged oasis with phones and broadband internet functioning. Friends and acquaintances, people I share the same air with, had chosen to celebrate the denial of their democratic rights, a demoted political status and a move that would rob them of their own privileges and rights. Reality would dawn on them later, but even then, their experiences of the accompanying restrictions remained varied, like hierarchies in a prison, in this case mapped by lengths of coiled barbed wires and weight of military jack-boots.

Others, in India, too celebrated the move. Jammu and Kashmir’s full integration with India was music to their ears. Some were happier still that Kashmiris had been “shown their place.”

Ten days later, on India’s Independence Day, the Indian prime minister, Narendra Modi, made Kashmir’s purged special status the centrepiece of his address to the nation. He spoke about how the effective removal of a special status would protect democracy, combat terrorism and Indianise Kashmiris. It was a victor’s mockery, directed at the brutalised and vanquished Kashmiris. Democratic triumphs are expected to come with peoples’ participation, but not in Kashmir.

In essence, a conglomerate territory that came together as a quirk of history was forcefully integrated into the landmass of India, politically and administratively, while keeping its people locked, invisible and silenced by an unprecedented militarised lockdown. So, in the year since, what has this meant for them?

The helplessness of Kashmiris evinced itself in the breakdown of daily lives. Security forces raided homes and picked up men and boys—some as young as nine years old—and tortured them brutally. They were beaten up with rifles, hung upside down, given electric shocks, their bodies covered with purple bruises before they were let off, if they were. In villages in South Kashmir, the shrieks of torture victims were amplified on loudspeakers to create terror among the listeners. A few days after 5 August, a friend who flew from Srinagar to Delhi called me on the phone on their arrival, and cried inconsolably. All I could hear was my name and then, “Everything’s finished,” being said between sobs. Everything is finished. The words rang in my ears. If it was not the end, it was surely the beginning of it.

Read the full article in Caravan.

UCL has a racist legacy, but can it move on?

Andrew Anthony, Observer, 2 August 2020

Even by the standards of his own time, Galton was undoubtedly an egregious racist. Here is a not untypical example of his perspective, taken from a 1904 essay on eugenics: “But while most barbarous races disappear, some, like the negro, do not. It may therefore be expected that types of our race will be found to exist which can be highly civilised without losing fertility.”

As the inquiry report stated: “Through the financial donation of Galton to UCL, racism was allowed to be married to science and within UCL this link between science and racism was embraced.” It also noted that “some students felt distress at sitting through lectures and exams in rooms celebrating eugenics”.

Steve Jones, the former head of the Department of Genetics, Evolution and Environment at UCL, has little truck with such sensitivities. About one student who is alleged to have burst into tears when she discovered she had to go into the Galton Lecture Theatre, he says: “Well, my rather brutal response to that is you shouldn’t be coming to UCL then.”

Against the backdrop of the Black Lives Matter movement, and in an era in which vigilance to micro-aggressions is deemed an essential aspect of academic pastoral care, Jones risks sounding dangerously out of date. He blames the “weak” provost, Michael Arthur, for capitulating to a “woke” campaign.

“UCL used to be known as ‘the godless university’ because it was set up for people who didn’t have faith and for Jews [only members of the Church of England were eligible to go to Oxford and Cambridge],” he says. “Now it is spineless.”

His friend and former student, the author Adam Rutherford, says Jones is “old and angry now” and annoyed by the way denaming has become the answer to problems within academia. But Jones is not indifferent to Galton’s racism. Far from it. For several decades he has given a lecture on eugenics, looking at its history, its science, and most glaringly its racism, examining the legacies of Galton and his fellow UCL eugenicists Karl Pearson and Ronald Fisher. He doesn’t shy away from their obnoxious opinions but sets them within the context of their times and against their remarkable contributions to science.

The fact is, he says, belief in eugenics was widespread among the British intelligentsia in the late 19th century and especially in the early decades of the last one – all the way up to the Nazis: the Holocaust effectively destroyed its reputation.

Read the full article in the Observer.

‘We want to build a life’: Europe’s paperless young people speak out

Charlotte Alfred, Guardian, 3 August 2020

“Why do you sound so British?” the immigration officer asked 15-year-old Ijeoma Moore as she followed orders to pack for herself and her 10-year-old brother. Officers had arrived at their London home that morning in 2010, as they were eating breakfast and getting ready to leave for school. “Because I am British,” the teenager replied.

What else could she be? She had lived in the UK since she was two years old. She loved tea and toast and “stupid telly”. But her mother’s repeated residency applications to the Home Office had all been rejected. Moore was not a British citizen.

Still in school uniform, the children and their visiting father – her mother was not there that day – were put in the Home Office van. Moore felt like she was watching someone else’s life on TV. They were taken to an immigration detention centre, where she narrowly avoided deportation three times. Eventually she and her brother were placed in foster care and her father sent to Nigeria. “I had to grow up really quick and become like a mum to my brother,” Moore says.

A decade on, Moore is still not a UK citizen. She will not officially become British until she turns 33 – 31 years after she arrived in the country and picked up a London accent. And it is not guaranteed if she runs out of money to pay the soaring fees, or the Home Office loses a document from the required stack of evidence, or if the rules change yet again.

In the US, they are known as the Dreamers, undocumented or “alien” minors who as a group have for two decades campaigned for legal status under the Development, Relief and Education for Alien Minors (Dream) Act. Their struggle, associated in the public mind with the American dream, has won broad public and bipartisan political support. It prompted the intervention of Barack Obama, who in 2012 created the Deferred Action for Childhood Arrivals (Daca) programme to allow people brought illegally to the US as children to stay and work. This protection is still under threat from Donald Trump’s administration despite a recent US supreme court ruling in the Dreamers’ favour. As Obama said in 2012: “They are American in their heart, in their minds, in every single way but one: on paper.”

Europe has its own dreamer generation, but unlike the US Dreamers, their stories are largely unknown. It is only when someone like the Glasgow-born singer Bumi Thomas is ordered to leave the UK that the immigration rules stopping people from staying in the countries they were born or grew up in stir public outrage. Millions of young people across Europe have, like Thomas, been born on the wrong side of little-known laws – in her case a 1981 restriction on automatic citizenship at birth. They grew up feeling British or French or Italian or European, but are now trapped in a state of limbo. The threat of deportation hangs over them because, like the US Dreamers, they lack the right piece of paper.

Read the full article in the Guardian.

Their family bought land one generation after slavery. The Reels brothers spent eight years in jail for refusing to leave it.

Lizzie Presser, ProPublica, 15 July 2019

Melvin and Licurtis stood in court and refused to leave the land that they had lived on all their lives, a portion of which had, without their knowledge or consent, been sold to developers years before. The brothers were among dozens of Reels family members who considered the land theirs, but Melvin and Licurtis had a particular stake in it. Melvin, who was 64, with loose black curls combed into a ponytail, ran a club there and lived in an apartment above it. He’d established a career shrimping in the river that bordered the land, and his sense of self was tied to the water. Licurtis, who was 53, had spent years building a house near the river’s edge, just steps from his mother’s.

Their great-grandfather had bought the land a hundred years earlier, when he was a generation removed from slavery. The property — 65 marshy acres that ran along Silver Dollar Road, from the woods to the river’s sandy shore — was racked by storms. Some called it the bottom, or the end of the world. Melvin and Licurtis’ grandfather Mitchell Reels was a deacon; he farmed watermelons, beets and peas, and raised chickens and hogs. Churches held tent revivals on the waterfront, and kids played in the river, a prime spot for catching red-tailed shrimp and crabs bigger than shoes. During the later years of racial-segregation laws, the land was home to the only beach in the county that welcomed black families. “It’s our own little black country club,” Melvin and Licurtis’ sister Mamie liked to say. In 1970, when Mitchell died, he had one final wish. “Whatever you do,” he told his family on the night that he passed away, “don’t let the white man have the land.”

Mitchell didn’t trust the courts, so he didn’t leave a will. Instead, he let the land become heirs’ property, a form of ownership in which descendants inherit an interest, like holding stock in a company. The practice began during Reconstruction, when many African Americans didn’t have access to the legal system, and it continued through the Jim Crow era, when black communities were suspicious of white Southern courts. In the United States today, 76% of African Americans do not have a will, more than twice the percentage of white Americans.

Many assume that not having a will keeps land in the family. In reality, it jeopardizes ownership. David Dietrich, a former co-chair of the American Bar Association’s Property Preservation Task Force, has called heirs’ property “the worst problem you never heard of.” The U.S. Department of Agriculture has recognized it as “the leading cause of Black involuntary land loss.” Heirs’ property is estimated to make up more than a third of Southern black-owned land — 3.5 million acres, worth more than $28 billion. These landowners are vulnerable to laws and loopholes that allow speculators and developers to acquire their property. Black families watch as their land is auctioned on courthouse steps or forced into a sale against their will.

Between 1910 and 1997, African Americans lost about 90% of their farmland. This problem is a major contributor to America’s racial wealth gap; the median wealth among black families is about a tenth that of white families. Now, as reparations have become a subject of national debate, the issue of black land loss is receiving renewed attention. A group of economists and statisticians recently calculated that, since 1910, black families have been stripped of hundreds of billions of dollars because of lost land. Nathan Rosenberg, a lawyer and a researcher in the group, told me, “If you want to understand wealth and inequality in this country, you have to understand black land loss.”

Read the full article in ProPublica.

The revolt of the upper castes

Jean Drèze, India Forum, 6 March 2020

The Hindutva project can also be seen as an attempt to restore the traditional social order associated with the common culture that allegedly binds all Hindus. The caste system, or at least the varna system (the four-fold division of society), is an integral part of this social order. In We or Our Nationhood Defined, for instance, Golwalkar clearly says that the “Hindu framework of society”, as he calls it, is “characterised by varnas and ashrams” (Golwalkar 1939, p. 54). This is elaborated at some length in Bunch of Thoughts (one of the foundational texts of Hindutva), where Golwalkar praises the varna system as the basis of a “harmonious social order”. Like many other apologists of caste, he claims that the varna system is not meant to be hierarchical, but that does not cut much ice.

Golwalkar and other Hindutva ideologues tend to have no problem with caste. They have a problem with what some of them call “casteism”. The word casteism, in the Hindutva lingo, is not a plain reference to caste discrimination (like “racism” is a reference to race discrimination). Rather, it refers to various forms of caste conflict, such as Dalits asserting themselves and demanding quotas. That is casteism, because it divides Hindu society.

The Rashtriya Swayamsevak Sangh (RSS), the torch-bearer of Hindu nationalism today, has been remarkably faithful to these essential ideas. On caste, the standard line remains that caste is part of the “genius of our country”, as the National General Secretary of the Bharatiya Janata Party, Ram Madhav, put it recently in Indian Express (Madhav, 2017), and that the real problem is not caste but casteism.

An even more revealing statement was made by Yogi Adityanath, head of the BJP government in Uttar Pradesh, in an interview with NDTV three years ago. Much like Golwalkar, he explained that caste was a method for “managing society in an orderly manner”. He said: “Castes play the same role in Hindu society that furrows play in farms, and help in keeping it organised and orderly… Castes can be fine, but casteism is not…”.

To look at the issue from another angle, Hindutva ideologues face a basic problem: how does one “unite” a society divided by caste? The answer is to project caste as a unifying rather than a divisive institution. The idea, of course, is unlikely to appeal to the disadvantaged castes, and that is perhaps why it is rarely stated as openly as Yogi Adityanath did in his interview. Generally, Hindutva leaders tend to abstain from talking about the caste system, but there is a tacit acceptance of it in this silence. Few of them, at any rate, are known to have spoken against the caste system.

Read the full article in the India Forum.

The reason wars are over. Reason won

Hanno Sauer, Medium, 13 August 2020

Perhaps the most profound attack on human rationality worth taking seriously — I am ignoring “postmodernist” anti-rationalism here, with its peculiar mix of confusion and anxiety — came from the so-called “heuristics and biases”-approach pioneered by Daniel Kahneman and Amos Tversky.

Here, the main idea is that our mind operates on two tracks: System I is fast and efficient, but inflexible; System II is flexible and precise, but slow and easily exhausted. We can show with cleverly designed studies how much of our thinking is driven by automatic and often subconscious System I processes, and how deeply and frequently our intuitive cognition leads us astray.

This paradigm proved particularly fruitful in economics, where it promised to finally do away with the much ridiculed and wildly unpopular theory of homo economicus, according to which people by and large make decisions on the basis of rationally shaped utility functions. The heuristics and biases-approach, together with its partner in crime behavioral economics, tried to expose this idea as a myth, and to show that people couldn’t care less about the axioms of probability theory or cost/benefit-analysis. Instead, they almost always go with their gut, and make snap decisions on the basis of quick-and-dirty rules of thumb, rather than to weigh the pros and cons of all available options and to go with the optimal one.

But many of the most famous and striking effects generated in this paradigm don’t seem so damning on a closer look. Consider the famous “Linda the bank teller” scenario. People are introduced (on paper) to Linda, and are given all sorts of information about her: that she majored in philosophy, cares about social justice, was active in various environmental causes, and so on. People are then asked whether it is more likely that a) Linda is a bank teller or that b) Linda is a bank teller and active in the feminist movement. Many go for b), thereby apparently committing the “conjunction fallacy”: A&B can never be more likely than A alone.

In the meantime, many other alleged biases have been vindicated as well. Hindsight bias is the phenomenon that after an event occurred, people think that it was more likely to happen than they would have thought ex ante. But this is not a bias. When an event occurs, people thereby acquire new evidence how likely it was, and how good their total evidence regarding its likelihood was beforehand. People take this into account, as they should.

Read the full article on Medium.

Kansas should go f— itself

Matt Taibbi, Substack, 2 August 2020

At the conclusion of The People, No, Frank sums up the book’s obvious subtext, seeming almost to apologize for its implications:

My point here is not to suggest that Trump is a “very stable genius,” as he likes to say, or that he led a genuine populist insurgency; in my opinion, he isn’t and he didn’t. What I mean to show is that the message of anti-populism is the same as ever: the lower orders, it insists, are driven by irrationality, bigotry, authoritarianism, and hate; democracy is a problem because it gives such people a voice. The difference today is that enlightened liberals are the ones mouthing this age-old anti-populist catechism.

The People, No is more an endorsement of 1896-style populism as a political solution to our current dilemma than it is a diatribe against an arrogant political elite. The book reads this way in part because Frank is a cheery personality whose polemical style tends to accentuate the positive. In my hands this material would lead to a darker place faster — it’s infuriating, especially in what it says about the last four years of “consensus” propaganda, in particular the most recent iteration.

The book’s concept also reflects the Sovietish reality of post-Trump media, which is now dotted with so many perilous taboos that it sometimes seems there’s no way to get audiences to see certain truths except indirectly, or via metaphor. The average blue-state media consumer by 2020 has ingested so much propaganda about Trump (and Sanders, for that matter) that he or she will be almost immune to the damning narratives in this book. Protesting, “But Trump is a racist,” they won’t see the real point – that these furious propaganda campaigns that have been repeated almost word for word dating back to the 1890s are aimed at voters, not politicians.

In the eighties and nineties, TV producers and newspaper editors established the ironclad rule of never showing audiences pictures of urban poverty, unless it was being chased by cops. In the 2010s the press began to cartoonize the “white working class” in a distantly similar way.

This began before Trump. As Bernie Sanders told Rolling Stone after the 2016 election, when the small-town American saw himself or herself on TV, it was always “a caricature. Some idiot. Or maybe some criminal, some white working-class guy who has just stabbed three people.” These caricatures drove a lot of voters toward Trump, especially when he began telling enormous crowds that the lying media was full of liars who lied about everything.

Read the full article on Substack.

The economic origins of mass incarceration

John Clegg & Adaner Usmani, Catalyst, Fall 2019

The standard story is that mass incarceration is a system of racialized social control, fashioned by a handful of Republican elites in defense of a racial order that was being challenged by the Civil Rights Movement. “Law and order” candidates catalyzed this white anxiety into a public panic about crime, which furnished cover for policies that sent black Americans to prison via the War on Drugs. It is difficult to overstate how influential this story has become. Michelle Alexander’s The New Jim Crow, which makes the case most persuasively, has been cited at more than twice the rate of the next most-cited work on American punishment.3 In a review of decades of research, the sociologists David Jacobs and Audrey Jackson call this story “the most plausible for the rapid increase in U.S. imprisonment rates.”

Yet this conventional account has some fatal flaws. Numerically, mass incarceration has not been characterized by rising racial disparities in punishment, but rising class disparity. Most prisoners are not in prison for drug crimes, but for violent and property offenses, the incidence of which increased dramatically before incarceration did. And the punitive turn in criminal justice policy was not brought about by a layer of conniving elites, but was instead the result of uncoordinated initiatives by thousands of officials at the local and state levels.

So what should replace the standard story? In our view, there are two related questions to answer. The first concerns the rise in violence. Partisans of the standard account argue that trends in punishment were unrelated to trends in crime, but this claim is mistaken. The rise in violence was real, it was unprecedented, and it profoundly shaped the politics of punishment. Any account of the punitive turn must address the question that naturally follows from this fact: why did violence rise in the 1960s?

The key to understanding the rise in violence lies in the distinctively racialized patterns of American modernization. The post-war baby boom increased the share of young men in the population at the same time that cities were failing to absorb the black peasantry expelled by the collapse of Southern sharecropping. This yielded a world of blocked labor-market opportunities, deteriorating central cities, and concentrated poverty in predominantly African-American neighborhoods. As a result, and especially in urban areas, violence rose to unprecedented heights.

This pattern of economic development generated a racialized social crisis. But this raises a second question: why did the state respond to this crisis with police and prisons rather than with social reform? Violence cannot be a sufficient cause of American punishment because punishment is just one of the ways in which states can respond to social disorder. Some states ignore crime waves. Others seek to attack the root causes of violence. Why did America respond punitively?

Read the full article in Catalyst.

Publish and perish

Agnes Callard, The Point, 29 July 2020

These words exist for you to read them. I wrote them to try to convey some ideas to you. These are not the first words I wrote for you—those were worse. I wrote and rewrote, with a view to clarifying my meaning. I want to make sure that what you take away is exactly what I have in mind, and I want to be concise and engaging, because I am mindful of competing demands on your time and attention.

You might think that everything I am saying is trivial and obvious, because of course all writing is like this. Writing is a form of communication; it exists to be read. But that is, in fact, not how all writing works. In particular, it is not how academic writing works. Academic writing does not exist in order to communicate with a reader. In academia, or at least the part of it that I inhabit, we write, most of the time, not so much for the sake of being read as for the sake of publication.

Let me illustrate by way of a confession regarding my own academic reading habits. Although I love to read, and read a lot, little of my reading comes from recent philosophy journals. The main occasions on which I read new articles in my areas of specialization are when I am asked to referee or otherwise assess them, when I am helping someone prepare them for publication and when I will need to cite them in my own paper.

This tells you something about academic writing, and how deeply it is shaped—mostly not at a conscious level—by the refereeing process. The simple fact is that “success” in academia is a matter of journal-acceptance, which in turn makes for a line in one’s CV. The number of such lines, taken together with the prestige of the relevant journals, is what counts for getting, keeping and being promoted in an academic job.

“Counts” being the operative word. What can be counted is what will get done. In the humanities, no one counts whether anyone reads our papers. Only whether they are published, and where. I have observed these pressures escalate over time: nowadays it is unsurprising when those merely applying to graduate schools have already published a paper or two.

Writing for the sake of publication—instead of for the sake of being read—is academia’s version of “teaching to the test.” The result is papers few actually want to read. First, the writing is hypercomplex. Yes, the thinking is also complex, but the writing in professional journals regularly contains a layer of complexity beyond what is needed to make the point. It is not edited for style and readability. Most significantly of all, academic writing is obsessed with other academic writing—with finding a “gap in the literature” as opposed to answering a straightforwardly interesting or important question.

Read the full article in The Point.

Escaping Flatland – when determinism falls, it takes reductionism with it

Kevin Mitchell, Wiring the Brain, 31 July 2020

For the reductionist, reality is flat. It may seem to comprise things in some kind of hierarchy of levels – atoms, molecules, cells, organs, organisms, populations, societies, economies, nations, worlds – but actually everything that happens at all those levels really derives from the interactions at the bottom. If you could calculate the outcome of all the low-level interactions in any system, you could predict its behaviour perfectly and there would be nothing left to explain. It’s turtles all the way down.

Reductionism is related to determinism, though not in a straightforward way. There are different types of determinism, which are intertwined with reductionism to varying degrees.

The reductive version of determinism claims that everything derives from the lowest level AND those interactions are completely deterministic with no randomness. There are things that seem random, to us, but that is only a statement about our ignorance, not about the events themselves. The randomness in this scenario is epistemological (relating to our knowledge or lack of it), not ontological (a real thing in the world, that we can observe, but that does not depend on us for its existence).

That’s the clockwork universe – the one where Laplace’s Demon (an omniscient being) could unerringly predict the future of the entire universe from a fully detailed snapshot of the state of all the particles in it at any given instant. It’s pretty boring, that kind of universe.

There is also an ostensibly non-reductive flavour of determinism, which simply affirms that every event has some antecedent physical cause(s). Nothing “just happens”. Under this view, however, causes don’t have to be located solely in the interactions of all the particles or limited to the actions of basic physical forces (which also act at the macroscopic scale, determining the orbits of the planets, for example). The causality, or some of it at least, could inhere in the organisation of a system and the constraints that it places on the interactions of its constituents. In this kind of scheme, there is room for a why as well as a how.

That’s the argument, at least, though it’s a little incoherent, in my view. If there is no real randomness in the system (or in the universe as a whole), then I don’t see how you can escape from pure reductionism. In a deterministic system, whatever its current organisation (or “initial conditions” at time t) you solve Newton’s equations or the Schrödinger equation or compute the wave function or whatever physicists do (which is in fact what the system is doing) and that gives the next state of the system. There’s no why involved. It doesn’t matter what any of the states mean or why they are that way – in fact, there can never be a why because the functionality of the system’s behaviour can never have any influence on anything. I would go even further and say you can never get a system that does things under strict determinism. (Things would happen in it or to it or near it, but you wouldn’t identify the system itself as the cause of any of those things).

But what if determinism is false? Let’s see what happens to reductionism when you introduce some randomness, some indeterminacy in the system.

Read the full article in Wiring the Brain.

Wrinkles in time

Haider Shahbaz, Caravan, 30 June 2020

Baig is the author of three novels, a collection of short stories and hundreds of plays for television. He published his first novel, Ghulam Bagh, in 2007. The success of this nine-hundred-page, relentlessly experimental novel secured his reputation as one of the most important Urdu writers of the twenty-first century. His last novel, Hassan Ki Soorat-e-Haal, which I translated as Hassan’s State of Affairs, continues Baig’s efforts to introduce innovative narrative forms to Urdu literature. The novel splinters in different directions, following numerous narrative threads at once, and occasionally introduces sub-plots that have nothing to do with the main story. Baig also disrupts the narratives by inserting “editorial notices,” “optional” chapters that discuss theoretical nuances, and an entire surrealist screenplay. Throughout, he experiments with different styles, including imitating those of academic manuscripts and diary entries, and challenges the social-realist mode entrenched in Urdu literature.