The latest (somewhat random) collection of essays and stories from around the web that have caught my eye and are worth plucking out to be re-read.

The Brazilianization of the world

Alex Hochuli, American Affairs, Summer 2021

The reality is that the twentieth century – with its confident state machines, forged in war, applying themselves to determine social outcomes – is over. So are its other features: organized political conflict between Left and Right, or between social democracy and Christian democracy; competition between universalist and secular forces leading to cultural modernization; the integration of the laboring masses into the nation through formal, reasonably paid employment; and rapid and shared growth.

We now find ourselves at the End of the End of History. Unlike in the 1990s and 2000s, today many are keenly aware that things aren’t well. We are weighed down, as the late cultural theorist Mark Fisher wrote, by “the slow cancellation of the future,” of a future promised but not delivered, of involution in the place of progression.

The West’s involution finds its mirror image in the original country of the future, the nation doomed forever to remain the country of the future, the one that never reaches its destination: Brazil. The Brazilianization of the world is our encounter with a future denied, and in which this frustration has become constitutive of our social reality. While the closing of historical horizons has often been a leftist, indeed Marxist, concern, the sense that things don’t work as they should is now widely shared across the political spectrum.

Welcome to Brazil. Here the only people satisfied with their situation are financial elites and venal politicians. Everyone complains, but everyone shrugs their shoulders. This slow degradation of society is not so much a runaway train, but more of a jittery rollercoaster, occasionally holding out promise of ascent, yet never breaking free from the tracks. We always come back to where we started, shaken and disoriented, haunted by what might have been.

Most often, “Brazil” has been a byword for gaping inequality, with favelas perched on hillsides overlooking millionaire high-rises. In his 1991 novel Generation X, Douglas Coupland referred to Brazilianization as “the widening gulf between the rich and the poor and the accompanying disappearance of the middle classes.” Later that decade, Brazilianization was deployed by German sociologist Ulrich Beck to mean the cycling in and out of formal and informal employment, with work becoming flexible, casual, precarious, and decentered. Elsewhere, the process of becoming Brazilian refers to its urban geography, with the growth of favelas or shantytowns, the gentrification of city centers with poverty pushed to the outskirts. For others, Brazil connotes a new ethnic stalemate between a racially mixed working class and a white elite.

This mix-and-match portrait of Brazilianization is superficially compelling, as growing inequality and precarity fractures cities across Europe and North America. But why Brazil? Brazil is a middle-income country—developed, modern, industrialized. But Brazil is also burdened by mass poverty, backwardness, and a political class that seems to have advanced little since its days as a slaveholding landed elite. It is a cipher for the past, for an earlier stage of development that the Global North passed through—and thought it left behind.

Read the full article in American Affairs.

History as end

Matthew Karp, Harper’s Magazine, July 2021

Whatever birthday it chooses to commemorate, origins-obsessed history faces a debilitating intellectual problem: it cannot explain historical change. A triumphant celebration of 1776 as the basis of American freedom stumbles right out of the gate—it cannot describe how this splendid new republic quickly became the largest slave society in the Western Hemisphere. A history that draws a straight line forward from 1619, meanwhile, cannot explain how that same American slave society was shattered at the peak of its wealth and power—a process of emancipation whose rapidity, violence, and radicalism have been rivaled only by the Haitian Revolution. This approach to the past, as the scholar Steven Hahn has written, risks becoming a “history without history,” deaf to shifts in power both loud and quiet. Thus it offers no way to understand either the fall of Richmond in 1865 or its symbolic echo in 2020, when an antiracist coalition emerged whose cultural and institutional strength reflects undeniable changes in American society. The 1619 Project may help explain the “forces that led to the election of Donald Trump,” as the Times executive editor Dean Baquet described its mission, but it cannot fathom the forces that led to Trump’s defeat—let alone its own Pulitzer Prize.

The political limits of origins-centered history are just as striking. The theorist Wendy Brown once observed that at the end of the twentieth century liberals and Marxists alike had begun to lose faith in the future. Collectively, she wrote, left-leaning intellectuals had come to reject “a historiography bound to a notion of progress,” but had “coined no political substitute for progressive understandings of where we have come from and where we are going.” This predicament, Brown argued, could only be understood as a kind of trauma, an “ungrievable loss.” On the liberal left, it expressed itself in a new “moralizing discourse” that surrendered the promise of universal emancipation, while replacing a fight for the future with an intense focus on the past. The defining feature of this line of thought, she wrote, was an effort to hold “history responsible, even morally culpable, at the same time as it evinces a disbelief in history as a teleological force.”

Today’s historicism is a fulfillment of that discourse, having migrated from the margins of academia to the heart of the liberal establishment. Progress is dead; the future cannot be believed; all we have left is the past, which must therefore be held responsible for the atrocities of the present. “In order to understand the brutality of American capitalism,” one essay in the 1619 Project avers, “you have to start on the plantation.” Not with Goldman Sachs or Shell Oil, the behemoths of the contemporary order, but with the slaveholders of the seventeenth century. Such a critique of capitalism quickly becomes a prisoner of its own heredity. A more creative historical politics would move in the opposite direction, recognizing that the power of American capitalism does not reside in a genetic code written four hundred years ago. What would it mean, when we look at U.S. history, to follow William James in seeking the fruits, not the roots?

Read the full article in Harper’s Magazine.

A grave humanitarian crisis is unfolding in Ethiopia. “I never saw hell before, but now I have.”

Lynsey Addario & Rachel Hartigan, National Geographic, 28 May 2021

Araya Gebretekle had six sons. Four of them were executed while harvesting millet in their fields on the outskirts of the town of Abiy Addi in west Tigray. Araya says Ethiopian soldiers approached five of his sons with their guns raised; as his children begged for their lives in the fields—explaining they were simply farmers—a female soldier ordered them dead. They pleaded for the troops to spare one of the brothers in order to help their elderly father work the fields. The soldiers let the youngest—a 15-year-old—go free. He lived to recount the story to his parents. Now, says Araya, “my wife is staying at home always crying. I haven’t left the house until today, and every night I dream of them.… There were six sons. I asked the oldest one to be there, too, but thank God he refused.”

Kesanet Gebremichael wails as nurses try to change the bandages and clean the wounds on her charred flesh at Ayder Hospital in the regional capital Mekele. The 13-year-old was inside her home in the village of Ahferom, near Aksum, when it was hit by long-range artillery. “My house was destroyed in the fire,” says her mother, Genet Asmelash. “My child was inside.” The girl suffered burns on more than 40 percent of her body.

Senayit was raped by soldiers on two separate occasions—in her home in Edagahamus, and as she tried to flee to Mekele with her 12-year-old son. (The names of the rape victims mentioned in this story are pseudonyms.) The second time, she was pulled from a minibus, drugged, and brought to a military base, where she was tied to a tree and sexually assaulted repeatedly over the course of 10 days. She fell in and out of consciousness from the pain, exhaustion, and trauma. At one point, she awoke to a horrifying sight: Her son, along with a woman and her new baby, were all dead at her feet. “I saw my son with blood from his neck,” she says. “I saw only his neck was bleeding. He was dead.” Senayit crumpled into her tears, her fists clenched against her face, and howled a visceral cry of pain and sadness, unable to stop weeping. “I never buried him,” she screamed, between sobs. “I never buried him.”

A political conflict between Ethiopian Prime Minister Abiy Ahmed and Tigray’s ruling party, the Tigray People’s Liberation Front (TPLF), has exploded into war and a grave humanitarian crisis. As many as two million people in the region have been displaced and thousands have been killed. Yet the full extent of the catastrophe is unknown because the Ethiopian government has shut down communications and limited access to Tigray.

Read the full article in National Geographic.

Stonewall risks all it has fought for in accusing those who disagree with it of hate speech

Sonia Sodha, Observer, 6 June 2021

Two of Stonewall’s founders have accused the charity of losing its way. An independent review by a QC into the unlawful no-platforming of two female academics found that Essex University’s policy on supporting trans staff, reviewed by Stonewall, misrepresented the law “as Stonewall would prefer it to be, rather than as it is”, to the detriment of women. And following the Equality and Human Rights Commission leaving Stonewall’s Diversity Champions programme, the equalities minister, Liz Truss, has reportedly pushed for government departments to follow suit.

Stonewall has characterised this as a series of bad faith attacks by a rightwing media and the political establishment. There are certainly some criticising Stonewall whose track record on equality is dubious, but focusing on the intentions of the messenger does not get you off the hook for criticisms that have substance. It is not just the right: many gender-critical feminists, whose views Kelley appeared to put in the same bucket as antisemitism, believe Stonewall, a once-mighty civil rights organisation that overturned section 28, the law that forbade the “promotion” of homosexuality, now poses a risk to women.

Gender-critical feminists believe that in a patriarchal society women’s bodies and their role in sex and reproduction play a major role in their oppression. Gender identity – the feeling of being a man or a woman regardless of one’s biological sex – can therefore never wholly replace sex as a protected characteristic in equalities law and women have the right to organise on the basis of their sex and to access single-sex spaces.

The 18-year-old me who regarded feminism as little more than the fight for equal pay might have rolled her eyes at this. But two decades of womanhood later, my feminism has matured into the understanding that male violence is a more important tool of oppression in a patriarchal society than board appointments. In case you think I’m exaggerating, almost one in three women will experience domestic abuse in her lifetime, a woman is killed by her partner or ex-partner every four days in the UK and seven in 10 of us have been sexually harassed in public spaces. That’s why women’s rights to single-sex services, such as refuges and women’s prisons, where two-thirds of women are victims of domestic abuse, are so important: to protect against male violence.

There is a clash here with Stonewall’s campaign to abolish legal provisions for single-sex spaces, so that males who identify as women have the same rights to access them as those born female. Disagreement on what it means to be a woman – whether it is solely based on a feeling or whether it is related to sex – is one thing, although gender-critical feminists regard the reduction of womanhood to gender expression as reinforcing regressive gender norms. But it is extraordinary that Kelley believes that she is justified in likening these views to antisemitism and arguing that women’s freedom to express them should be legally curtailed.

Read the full article in the Observer.

The myth of coexistence in Israel

Diana Buttu, New York Times, 25 May 2021

Two weeks ago, I was in my family’s home in Haifa, a city in northern Israel where both Palestinians and Israelis live. I saw groups of young men carrying Israeli flags and tire irons march by, shouting, “The people of Israel live” and “Death to Arabs!”

My father and I watched on live television as a crowd of Jewish men in another mixed town, Lod, asked a man if he was an Arab, then pulled him out of his car and beat him. Some Palestinian citizens of Israel vented their frustration and anger against Jewish Israelis and symbols of the Jewish state that oppresses them by burning down a synagogue in Lod.

Haifa, whose population is 85 percent Jewish and 15 percent Palestinian, has long been presented along with Lod and other mixed cities in Israel as a model of coexistence. Which is why, in the past few weeks, the question has repeatedly been asked: How could these cities suddenly be transformed into sites of mob violence?

The truth is that the Palestinian citizens of Israel and the Jewish majority of the country have never coexisted. We Palestinians living in Israel “sub-exist,” living under a system of discrimination and racism with laws that enshrine our second-class status and with policies that ensure we are never equals.

This is not by accident but by design. The violence against Palestinians in Israel, with the backing of the Israeli state, that we witnessed in the past few weeks was only to be expected.

Palestinian citizens make up about 20 percent of Israel’s population. We are those who survived the “nakba,” the ethnic cleansing of Palestine in 1948, when more than 75 percent of the Palestinian population was expelled from their homes to make way for Jewish immigrants during the founding of Israel.

My father was among the 25 percent of the Palestinian population that remained. He was 9 years old when he was forced out of his home in Mujaydil, a Palestinian village near Nazareth. My father and his family moved to Nazareth. Because they fled to Nazareth, a mere 1.8 miles away, Israeli laws declared him and his family as “present absentees,” which meant that Israel could take away their property.

And so it did: Israel destroyed his house, his school and his entire community to make way for Jewish immigrants. In place of Mujaydil, Israel created a Jewish-only town called Migdal Haemek. He was rendered an unwanted non-Jew in the “Jewish state” of Israel, rather than an equal citizen in his homeland.

From 1948 until 1966, he and other Palestinians in Israel lived under military rule — much like that which exists in the West Bank today — having most of their land taken from them and required to get permits to travel from one place to the next. My father had to wait years before he could make the short journey to see what had become of his home and school.

Read the full article in the New York Times.

Illusions of empire

Amartya Sen, Guardian, 29 June 2021

A similar scepticism is appropriate about the British claim that they had eliminated famine in dependent territories such as India. British governance of India began with the famine of 1769-70, and there were regular famines in India throughout the duration of British rule. The Raj also ended with the terrible famine of 1943. In contrast, there has been no famine in India since independence in 1947.

The irony again is that the institutions that ended famines in independent India – democracy and an independent media – came directly from Britain. The connection between these institutions and famine prevention is simple to understand. Famines are easy to prevent, since the distribution of a comparatively small amount of free food, or the offering of some public employment at comparatively modest wages (which gives the beneficiaries the ability to buy food), allows those threatened by famine the ability to escape extreme hunger. So any government should be able to stop a threatening famine – large or small – and it is very much in the interest of a government in a functioning democracy, facing a free press, to do so. A free press makes the facts of a developing famine known to all, and a democratic vote makes it hard to win elections during – or after – a famine, hence giving a government the additional incentive to tackle the issue without delay.

India did not have this freedom from famine for as long as its people were without their democratic rights, even though it was being ruled by the foremost democracy in the world, with a famously free press in the metropolis – but not in the colonies. These freedom-oriented institutions were for the rulers but not for the imperial subjects.

In the powerful indictment of British rule in India that Tagore presented in 1941, he argued that India had gained a great deal from its association with Britain, for example, from “discussions centred upon Shakespeare’s drama and Byron’s poetry and above all … the large-hearted liberalism of 19th-century English politics”. The tragedy, he said, came from the fact that what “was truly best in their own civilisation, the upholding of dignity of human relationships, has no place in the British administration of this country”. Indeed, the British could not have allowed Indian subjects to avail themselves of these freedoms without threatening the empire itself.

The distinction between the role of Britain and that of British imperialism could not have been clearer. As the union jack was being lowered across India, it was a distinction of which we were profoundly aware.

Read the full article in the Guardian.

The secret deportations: how Britain betrayed the Chinese men who served the country in the war

Dan Hancox, Guardian, 25 May 2021

On 19 October 1945, 13 men gathered in Whitehall for a secret meeting. It was chaired by Courtenay Denis Carew Robinson, a senior Home Office official, and he was joined by representatives of the Foreign Office, the Ministry of War Transport, and the Liverpool police and immigration inspectorate. After the meeting, the Home Office’s aliens department opened a new file, designated HO/213/926. Its contents were not to be discussed in the House of Commons or the Lords, or with the press, or acknowledged to the public. It was titled “Compulsory repatriation of undesirable Chinese seamen”.

As the vast process of post-second world war reconstruction creaked into action, this deportation programme was, for the Home Office and Clement Attlee’s new government, just one tiny component. The country was devastated – hundreds of thousands were dead, millions were homeless, unemployment and inflation were soaring. The cost of the war had been so great that the UK would not finish paying back its debt to the US until 2006. Amid the bombsites left by the Luftwaffe, poverty, desperation and resentment were rife. In Liverpool, the city council was desperate to free up housing for returning servicemen.

During the war, as many as 20,000 Chinese seamen worked in the shipping industry out of Liverpool. They kept the British merchant navy afloat, and thus kept the people of Britain fuelled and fed while the Nazis attempted to choke off the country’s supply lines. The seamen were a vital part of the allied war effort, some of the “heroes of the fourth service” in the words of one book title about the merchant navy. Working below deck in the engine rooms, they died in their thousands on the perilous Atlantic run under heavy attack from German U-boats.

Following the Whitehall meeting, in December 1945 and throughout 1946, the police and immigration inspectorate in Liverpool, working with the shipping companies, began the process of forcibly rounding up these men, putting them on boats and sending them back to China. With the war over and work scarce, many of the men would have been more than ready to go home. But for others, the story was very different.

In the preceding war years, hundreds of Chinese seamen had met and married English women, had children and settled in Liverpool. These men were deported, too. The Chinese seamen’s families were never told what was happening, never given a chance to object and never given a chance to say goodbye. Most of the Chinese seamen’s British wives would go to their graves never knowing the truth, always believing their husbands had abandoned them.

Read the full article in the Guardian.

Medical algorithms have a race problem

Kaveh Waddell, Consumer Reports, 18 September 2020

Eventually, the condition left Eli’s kidneys so damaged that it was time to consider an organ transplant. But kidneys are in short supply: About 23,400 transplants took place last year, and more than 92,000 people are on a national waitlist.

To get a spot on the list, a patient must have severely compromised kidneys, which doctors watch for using a number called the glomerular filtration rate, or GFR. The figure indicates how fast a person’s kidneys can filter blood. Only people with a GFR of 20 or below can get in line for a kidney from a deceased donor, the main source of kidney transplants. (Sixty is considered the threshold of normal kidney function.)

The most common procedures for estimating GFR measure a substance called creatinine with a blood test, then do simple calculations that factor in a patient’s sex and age.

They also consider the patient’s race. The laboratory handling the blood test will take its initial GFR score and multiply it by a race adjustment coefficient for Black patients, or instruct doctors to do the math. For the test Eli underwent, the result is multiplied by 1.212.

So when Grubbs ordered a GFR test for Eli, she got two numbers back. The report said his GFR was estimated at 20 “if not African American,” and 24 “if African American.” There were no other racial categories.

If Eli had been white, his blood test result would have qualified him for a spot on the transplant waitlist. Because he is Black, he did not appear to make the cut. The use of two different numbers, one for Black patients and another for everyone else, dates to a 1999 study on kidney function. Similar race adjustments (also called race corrections) crop up in all sorts of clinical algorithms in medicine.
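The arithmetic behind those two numbers can be sketched in a few lines of Python. This is an illustration only, not a clinical tool: the 1.212 multiplier and the waitlist cutoff of 20 come from the excerpt above, while the function names, the starting value of exactly 20, and the rounding to a whole number are assumptions made for the example.

```python
# Illustrative arithmetic only; names and rounding are assumptions, not a lab's actual procedure.
RACE_COEFFICIENT = 1.212   # multiplier the article quotes for the test Eli underwent
WAITLIST_THRESHOLD = 20    # a GFR at or below this qualifies for the deceased-donor waitlist


def reported_gfr(base_gfr: float, race_adjusted: bool) -> float:
    """Return the estimated GFR, with or without the race multiplier applied."""
    return base_gfr * RACE_COEFFICIENT if race_adjusted else base_gfr


def qualifies_for_waitlist(gfr: float) -> bool:
    """Apply the cutoff described in the article: 20 or below gets in line."""
    return gfr <= WAITLIST_THRESHOLD


base = 20.0  # assumed unadjusted estimate, matching the "if not African American" figure
unadjusted = reported_gfr(base, race_adjusted=False)
adjusted = reported_gfr(base, race_adjusted=True)

print(round(unadjusted), qualifies_for_waitlist(unadjusted))  # 20 True
print(round(adjusted), qualifies_for_waitlist(adjusted))      # 24 False
```

The same blood test result lands on either side of the cutoff depending only on which multiplier is applied, which is the point the excerpt makes about Eli's two reported figures.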

Some of the algorithms help doctors decipher test results like Eli’s. Others combine medical and demographic information to recommend a specific diagnostic test, or produce a risk score that helps determine whether a patient is a good candidate for a particular treatment. Algorithms like these sometimes adjust for age, sex, and other factors that can help account for broad physiological differences among patients. But the race adjustments are more controversial.

Grubbs, who has long been skeptical of race-adjusted formulas, didn’t stop with Eli’s initial GFR test results. “I didn’t believe that just because he was Black he had higher kidney function,” she says.

Read the full article in Consumer Reports.

How students are furthering academe’s corporatization

Amna Khalid, Chronicle of Higher Education, 4 May 2021

This past fall, the Core Strike Collective, a collection of student groups at Bryn Mawr College, submitted a list of 16 demands to the college administration. At the top was a call for mandatory diversity, equity, and inclusion training for students, faculty, and staff. The students, insisting on robust “quantitative and qualitative assessments,” asked for a data dashboard to track 38 proposed equity metrics concerning recruitment, retention, and financing.

Demands for diversity training and other DEI initiatives such as bias response teams have been central to student protests against racial injustice since 2015 and have only proliferated in the wake of George Floyd’s murder. Many student demands have been framed in terms of resisting capitalism, corporate logic, and labor exploitation. The Core Strike Collective called out Bryn Mawr as “a corporation that poses itself as an educational institution.” Indeed, the University of Virginia scholars Rose Cole and Walter Heinecke applaud recent student activism as a “site of resistance to the neoliberalization of higher education” that offers a “blueprint for a new social imaginary in higher education.”

But this assessment gets things backward. By insisting on bureaucratic solutions to execute their vision, replete with bullet-pointed action items and measurable outcomes, student activists are only strengthening the neoliberal “all-administrative university” — a model of higher education that privileges market relationships, treats students as consumers and faculty as service providers, all under the umbrella of an ever-expanding regime of bureaucratization. Fulfilling student DEI demands will weaken academe, including, ironically, undermining more meaningful diversity efforts.

The rampant growth of the administration over the years at the expense of faculty has been well documented. From 1987 to 2012 the number of administrators doubled relative to academic faculty. A 2014 Delta Cost Project report noted that between 1990 and 2012, the number of faculty and staff per administrator declined by roughly 40 percent. This administrative bloat has helped usher in a more corporate mind-set throughout academe, including the increased willingness to exploit low-paid and vulnerable adjuncts for teaching, and the eagerness to slash budgets and eliminate academic departments not considered marketable enough.

College leaders, for their part, have been more than happy to comply with the recent demands for trainings and DEI personnel. Nothing is more convenient from an institutional perspective than hiring more administrators and consultants. It simultaneously assuages angry students and checks the box of doing the work of improving campus inclusivity, without having to contend with the sticking points of university policies and procedures where real change could be achieved: tenure-review processes, limited protections for contingent faculty, and student admission and aid policies that produce inequities.

Read the full article in the Chronicle of Higher Education.

Palestinianism

Adam Shatz, London Review of Books, 6 May 2021

Brennan’s title alludes to Said’s memoir, Out of Place, as well as to his family’s dispossession and his own experiences of being attacked by Israel’s apologists in the US, and later by the Palestinian Authority. Yet the emphasis on place is misleading. What captured Said’s imagination wasn’t place or territory so much as time: the drama of beginnings, the defiance of late style. Nor did he lack for a home: New York fitted him as well as his bespoke suits. He once asked Ignace Tiegerman why he hadn’t left Cairo for Israel. ‘Why should I go there?’ Tiegerman replied. ‘Here I am unique.’ In New York, Said was unique, and whatever loneliness he experienced was offset by his love of a place where ‘you can be anywhere in it and still not be of it.’ In New York he had a stage: a professorship at Columbia, where he was the highest paid member of the humanities faculty; access to nightly talk shows and news stations, where he became the face of Palestine; and, not least, the world of literary parties and salons, where ‘Eduardo’ (as friends teasingly called him) cut an alluring profile.

For all his admiration of men of the left who threw themselves into insurgent struggles – and although his own activism attracted the surveillance of the FBI – Said led the life of a celebrity intellectual. Brennan places him in the tradition of revolutionary intellectuals, but Said doesn’t resemble Gramsci or Fanon so much as Susan Sontag, born two years before him, and, like him, a dissident heir of the New York intellectuals. Both were literary critics who first made a name for themselves as interpreters of French theory for Anglo-American audiences but later broke free of its textual games and jargon in favour of a more readerly style. Despite a shared loathing of American consumerism and provincialism, each was possessed of a peculiarly American energy and drive. They were each American in their rejection of cultural pessimism, and they shared a reverence for traditional Western culture: they may have expressed ‘radical styles of will’, but they also invoked the authority of canonical critics. In their writings on photography, both drew inspiration from John Berger, moved, if not quite persuaded, by his insistence on the medium’s insurrectionary potential. Their best-known books, Orientalism and Illness as Metaphor, both published in 1978, were quarrels with oppressive systems of representation by which they had felt personally victimised. They often wrote in praise of Marxist intellectuals but were never Marxists themselves. Neither took part in civil rights or labour struggles at home, devoting their political energies to foreign causes.

But unlike Sontag, who had a thick skin, Said remembered every slight he’d suffered, every award he’d been denied, every note he missed when he played the piano. According to Brennan, he lived ‘in agony’ most of the time. The life of a closeted Palestinian would have been much easier.

Sontag’s Jewishness made her an American establishment insider. For much of his career, Said was not just the lone Arab, but the Palestinian, liable to be portrayed as an enemy of the Jews, as a dangerous radical who, as he put it, did ‘unspeakable, unmentionable things’ when he wasn’t giving lectures on Conrad and Jane Austen. Eventually even those lectures would come to be seen by his critics as a threat to Western culture, if not an extension of his work for the PLO. Said’s insecurity, as much as his colonial origins, may explain why, unlike Sontag, he attached himself to institutions: Columbia, the Palestine National Council, the Century Club, the West-Eastern Divan Orchestra. Institutional affiliation wasn’t simply a comfort: it offered an escape from feelings of awkwardness, of being ‘not quite right’ – the original title of his memoir.

Read the full article in the London Review of Books.

The EFF will not bring the change South Africans need

Andile Zulu, Africa Is A Country, 2 July 2021

An overview of the EFF in the past and present reveals a party bloated by ideological ambiguity and contradiction. Lacking an ideological anchor, they often misdiagnose the source of the country’s major crisis, offering antiquated solutions while also undermining their ability to achieve their stated objectives.

So how is the party of Julius Malema to be understood?

They can be classified as electoral populists, where populism is a style of politics and set of strategies used to amass power. Because the party is entangled in ideological disorder, issues of race are dangerously misunderstood and exploited for electoral gains or trivial wars of identity. The project of kindling class consciousness and building working class power from the bottom up is cast aside. And once again, citizens are left yearning for radical change through a political party that cannot deliver it.

“In South Africa, we are still to deal with class divisions. At the core of our divisions is racism,” Malema has stated.

Populists on both the right and left often portray the struggle for political power as one erupting between corrupt elites and oppressed masses. For the EFF, the great divide is between a wealthy white minority and the destitute black masses, whose oppression and exploitation both produce and sustain white economic domination.

Racial inequality is the central factor which fuels the EFF’s program. This presents a problem not only for the EFF but for anyone seeking to diagnose the source of our society’s major ills. No one awake to South Africa’s reality can reasonably deny the disastrous impacts of systemic racism. But as an explanation for all social and economic issues, racism only takes us so far when trying to understand the nature of oppression…

The EFF’s obsession with racial divisions is misleading. The reclaiming of white wealth will not lead to black liberation. Moreover, the rhetoric of anti-racism can be co-opted by black people hoping to change the racial composition of inequality but not inequality itself. And such ambitions are often expressed as a desire to rid our economy of white supremacy.

But if relations of class domination and exploitation persist, so will destitution for most black people. Remember that a small black elite has found success in the postapartheid era, but those black faces in high places have no interest in advancing liberation. Their class positions—as corporate managers, career politicians and successful tenderpreneurs—compel them to pursue self-enrichment. And, importantly, to keep intact an economic order which keeps the working class exactly where they are.

Read the full article in Africa Is A Country.

How to be an anti-anti-racist

John Torpey, Noema, 8 June 2021

Illiberal anti-racism is driven by the ideas of intersectionality, which (reasonably enough) holds that a person’s social and political identities expose them to different kinds of discrimination, and critical race theory, which views white supremacy as the driving force in American politics and law, as well as by their outrider on the international front, postcolonialism. The notion of intersectionality was originally built chiefly around the triad of race, class and gender, but over time, cultural issues came to trump class issues, and class has been largely abandoned in academic debates about the intellectual future of the left. As with anti-racism, culture has carried the day.

Insofar as anti-racism frames the barrier to racial equality as “systemic racism,” the stress on reforming people’s personal views — which today are far less racist than was the case before the civil-rights era — is puzzling. “Systemic racism” would suggest a focus on structural disparities such as income, wealth, unemployment rates, health inequities, mortality differences and more, and on solutions to those problems. Instead, the emphasis in anti-racist discourse tends to be on plumbing the depths of the white soul in search of racist beliefs. The puritanical roots of this approach are hard to miss, and its judgments are hard to bear. The stress on personal feelings is suffocating for many people; being called a racist can be severely damaging for one’s reputation or career, so many people — including those sympathetic to a politics of racial justice — simply keep their distance…

It is important to note that by anti-anti-racism I don’t mean the term in the negative sense that Jelani Cobb used it recently when he referred in The New Yorker to the “broader offensive that the Republican Party has been coordinating since Trump’s reelection loss … to facilitate the more overtly racist portions of the party’s agenda.” Cobb deploys the term “anti-anti-racism” to describe the right’s attempt to deny, as he writes, “that racism remains a vital force in American life [and] that it is deeply rooted in the American past.”

In contrast, the anti-anti-racism I am talking about is a response to the overweening character of much anti-racism — a pushback against the inadvertent way in which a promising movement for change is being channeled into dead-end illiberalism. Think of it in the way that a double-negative becomes a kind of positive.

Anti-anti-racism can be (imperfectly) compared to anti-anti-communism. The latter arose in the 1960s and 70s with the “New Left,” which tried to distance itself from a communist-dominated Old Left often seen as too supportive of a retrograde Soviet Union. Rejecting both Soviet communism and the anti-communism of the American establishment, the New Left wanted to get past stale disputes and focus on more urgent concerns, such as voting rights for Blacks and opposition to anti-communism’s signature case of overreach, the Vietnam War. Anti-anti-racists and anti-anti-communists both refuse to be held to either pole of the debate.

Read the full article in Noema.

Who owns Frantz Fanon’s legacy?

Bashir Abu-Manneh, Catalyst, Spring 2021

To read Wretched is to enter a world of colonial division, national conflict, and emancipatory yearning. As a text, it combines dynamic critique with political passion, historical probing with denunciation of injustice, reasoned argument with moral indignation against suffering. This is how it inspired a whole generation of radicals around the world to transform societies that were slowly emerging from colonial domination. By identifying the racism and structural subordination of the colonial predicament, as well as charting a humanist route out of it, Fanon defined a politics of liberation whose terms and aims remain relevant today.

But many of Fanon’s recent academic critics, and even some of his sympathizers, continued to distort and misconstrue Wretched. They inflated the significance of one element in the book over all others: violence. And they underplayed Fanon’s socialist commitment and class analysis of capitalism, which are two essential components of his anti-imperialist arsenal. Nowhere is this truer than in recent postcolonial theory. Indeed, postcolonial theory has come to posit violence as the theoretical core of Wretched. Homi K. Bhabha, for example, has turned Fanon’s work into a site of “deep psychic uncertainty of the colonial relation” that “speaks most effectively from the uncertain interstices of historical change.” In his recent preface to Wretched, Bhabha reads colonial violence as a manifestation of the colonized’s subjective crisis of psychic identification “where rejected guilt begins to feel like shame.” Colonial oppression generates “psycho-affective” guilt at being colonized, and Bhabha’s Fanon becomes an unashamed creature of violence and poet of terror. He concludes that “Fanon, the phantom of terror, might be only the most intimate, if intimidating, poet of the vicissitudes of violence.” This flawed interpretation eviscerates Fanon as a political intellectual of the first order. It also skirts far too close to associating Fanon’s contributions with terrorism — a bizarre interpretation for Bhabha to advance in the age of America’s “war on terror.” Rather than emancipation, it is terror, Bhabha posits, that marks out Fanon’s life project.

It is hardly surprising that, in order to turn Fanon into a poet of violence, postcolonial theorists have had to deny his socialist politics. This begins with Bhabha himself, whose intellectual project is premised on undermining class solidarity and socialism as subaltern political traditions. Ignoring Fanon’s socialist commitments is also evident in Edward Said’s reading of him in Culture and Imperialism, which is historically sparked by the First Intifada and Said’s critical disenchantment with Palestinian elite nationalism. If Said is profoundly engaged with Fanon’s politics of decolonization and universalist humanism, he nonetheless fails to even mention the word “socialism” in association with Fanon, let alone read him as part of the long tradition of the socialist critique of imperialism. This dominant postcolonial disavowal of socialist Fanon is also articulated by Robert J. C. Young when he bluntly states that Fanon is not interested in “the ideas of human equality and justice embodied in socialism.”

Read the full article in Catalyst.

Displacing peasants to “protect” the Amazon

Justin Podur, New Frame, 6 July 2021

Colombia witnessed a series of mass protests at the end of April following a call for a national strike. Still ongoing, the protests have many causes: an apparent “tax reform” that was going to transfer even more wealth to the 1% in Colombia; the failure of the most recent peace accords; and the inability of Colombia’s privatised healthcare system to contain the Covid-19 crisis. In response to these ongoing protests, the government has killed dozens, disappeared hundreds, imposed curfews on multiple cities and called in the army. But the protests continue – because they are, at least in part, a repudiation of the militarisation of everything in the country.

In the background of the uprising in Colombia is the question of land. A multi-decade civil war has led to millions of peasants being thrown off of their land, which ended up in the hands of large landowners or was used for corporate megaprojects. In the ongoing corporate land grab that has been taking place in Colombia for the last few years, there is a new and frightening weapon: the militarisation of environmental conservation. In a countrywide series of military operations beginning in February, involving a large number of soldiers and police, the army captured 40 people, whom the attorney general accused of deforestation and illegal mining, in six different locations in the country.

In an earlier operation, the army captured four people for crimes against the environment, who have been labelled as “dissidents of the guerrillas of the Revolutionary Armed Forces of Colombia (FARC)” by Colombia’s President Iván Duque, according to an article in Mongabay. In another operation in March 2020, soldiers trying to capture illegal ranchers in national parks picked up 20 people, 16 of whom turned out to be peasants who did not own land or cattle, according to Mongabay. According to the Colombian military, eight operations were carried out in 2020, through which it had “recovered more than 9 000 hectares of forest”, while capturing 68 people, 20 of whom were minors, stated the article in Mongabay.

What the military calls “recovered” forest is a territory emptied of its people. The overall initiative, which began in 2019, is labelled “Operation Artemis”. It deploys what one article in the City Paper (Bogotá) calls “Colombia’s full-metal eco-warriors” in an effort to reduce deforestation by 50%, as Duque told Reuters. With so much military defence of the forest taking place, several questions arise: Is deforestation a problem that can be solved with the use of weapons? Can the forest be saved through mass arrests? Can the same military that killed thousands of innocent people, including peasants, in an attempt to inflate their body count statistics, be trusted to protect the environment?

Read the full article in New Frame.

The fog of history wars

David W. Blight, New Yorker, 9 June 2021

Two recent history wars offer cautionary tales. One arrived in the mid-nineties, when a debate flared in the media over the National Standards for History. The Standards were a colossal project: the country’s first attempt at establishing a nationally recognized set of criteria for how history should be taught. Initially funded by the George H. W. Bush Administration, the venture took around three years and two million dollars to complete, and involved every relevant constituency, including parents, teachers, school administrators, curriculum specialists, librarians, educational organizations, and professional historians. Yet, when the Standards were published, in 1994, a big-tent effort transformed into a ferocious political fight. Many historians entered the public arena for the first time during this debate, and droves of us have never left.

Generally, historians were no match for the right-wing assault on the Standards, which one conservative think-tank writer likened to propaganda “developed in the councils of the Bolshevik and Nazi parties and successfully deployed on the youth of the Third Reich and the Soviet empire.” Lynne Cheney, then a fellow at the American Enterprise Institute, blasted the Standards in the Wall Street Journal as “politically correct” and full of “politicized history.” (A few years earlier, as the chair of the National Endowment for the Humanities, Cheney had granted five hundred and twenty-five thousand dollars to help fund the project.) Radio host Rush Limbaugh accused historians of depicting America as “inherently evil,” and contended that the Standards should be “flushed down the sewer of multiculturalism.” The media swarmed to get Cheney debating celebrated historians such as Joyce Appleby, Eric Foner, and Gary B. Nash, who was one of the Standards’ lead authors. Critics often complained that the criteria too frequently mentioned Harriet Tubman, at the cost of eliding figures such as George Washington.

If such critics had read the Standards carefully, they would have known that the suggestions were merely guidelines, and fully voluntary for school districts. But the Senate, caving in to vicious op-eds and conspiracy theories about cabals of liberal academic historians, voted to repudiate the project, claiming that it showed insufficient respect to American patriotic ideals. The debate left an important legacy. As Nash and his co-authors wrote in “History on Trial,” a 1997 book on the controversy, curricula are often mere “artifacts” of their time, and necessarily vulnerable to “prevailing political attitudes” and “competing versions of the collective memory.” Nations have histories, and someone must write and teach them, but the Standards remain a warning to all those who try.

Read the full article in the New Yorker.

Republicans want you (not the rich) to pay for infrastructure

Brian Highsmith, New York Times, 23 June 2021

Rather than the government financing the rebuilding of roads and bridges that get you across town, you pay a private company operating in contract with the government — while policymakers pretend that they have avoided imposing new costs.

Chief among these schemes that Republicans have identified are so-called user fees, like road tolls and a new fee on vehicle miles traveled. The White House rejected such proposals as violating its own tax pledge: a promise not to increase taxes on families earning under $400,000 annually. As President Biden observed, “If everything is paid for by a user fee, the burden falls on working-class folks, who are having trouble.”

In recent decades, state and local governments increasingly have turned to these funding arrangements. And unlike progressive taxes, user fees — whether assessed by public entities or by private firms contracted with the state — are not generally varied by ability to pay. They are imposed at a flat rate, on the poorest and wealthiest alike, assessed in proportion to their use of public infrastructure. These extractive revenue models condition access to critical goods and services on families’ available resources. And unlike with consumer goods, people often have no choice but to use these spaces.

In 2008, to avoid raising property taxes, Chicago famously leased its parking meter infrastructure to a group of private investors. Shortly after the asset sale, residents parking downtown were paying more than double the prior rates. The privatization of local public services can be observed in soaring school lunch debt, exorbitant charges for prison phone calls and revenue-driven overpolicing.

The endpoint of this shift can be observed in rural Tennessee, where several jurisdictions instructed firefighters not to respond to emergencies without first confirming that occupants have paid an annual service fee; if they have not, city responders stand aside and let the homes burn to the ground. The lesson is clear: Flat-rate user-funded structures privatize social risks while shielding wealth from productive public use. This underlying dynamic does not change when such fees are imposed by unaccountable private investors rather than the state.

Some particular fees — including those for street parking, and congestion pricing schemes — are often justified as internalizing public costs of socially deleterious behavior, analogous to “sin taxes” assessed on alcohol and tobacco. But if the primary motivation is to shape individual decisions, this goal arguably is undermined by the flat-rate design of most fees, which may be debilitating for poor families but are hardly noticed by the wealthy.

Read the full article in the New York Times.

The problem with “white privilege” discourse

Cathy Young, Arc Digital, 30 June 2021

Quite often, “white privilege” is defined more narrowly as absence of the disadvantages and prejudices that specifically afflict black Americans, from lower income to lower life expectancy to disproportionate harassment and violence by law enforcement. Yet Asian-Americans fare better than white Americans on every single one of those measurements. Nor do Hispanics fit neatly into the “white privilege” framework, with lower median household income but higher life expectancy compared to whites. (The data on police violence are even more complicated: While Latino men are at higher risk of being killed by the police than white men, the disparity is reversed for women.) In this sense, “white privilege” rhetoric offers an incredibly simplistic and reductionist take on a complex multiracial, multiethnic society.

But it also has other, more pernicious effects.

For one thing, equating the absence of discrimination, mistreatment, or injustice with the presence of “privilege” turns one of liberalism’s core principles—the existence of fundamental, inalienable human and civil rights—on its head, and thus subverts it. Not being mistreated, abused, or discriminated against becomes a special advantage, an unearned reward granted to members of a privileged group. This troubling aspect of privilege discourse has been picked up even by some commentators who share the “social justice” view of endemic racism in modern American society. In a 2013 essay for Al Jazeera, University of California-Merced sociologist Tanya Golash-Boza questioned the language of “white privilege” in anti-racist activism: not being presumptively treated as a criminal by the police or by shop owners, she pointed out, is “not something that should be considered a privilege” but simply “how things should be.” More recently, Naomi Zack, a philosophy professor at the University of Oregon who works in the area of ethics and race and sees police killings and harassment of young black men as a clear example of racial injustice, made a similar point in a New York Times interview:

The term “white privilege” is misleading. A privilege is special treatment that goes beyond a right. It’s not so much that being white confers privilege but that not being white means being without rights in many cases. Not fearing that the police will kill your child for no reason isn’t a privilege. It’s a right.

The equation of “not being discriminated against” with “privilege” also opens the way to attacking minorities which, by and large, do not experience discrimination as a severe problem. It implies, for instance, that there is such a thing as “Jewish privilege” if Jews in America are generally not discriminated against for being Jewish. (Notably, while white supremacist trolls have openly floated that concept in a blatant attempt to co-opt the language of “social justice” for anti-Semitism, the social justice left has also dabbled in rhetoric that singles out “white Jews” for “upholding white supremacy” and failing to confront their “whiteness.”) The issue of “Asian privilege” has also reared its head, though social justice activists have also decried the concept as “harmful.”

Read the full article in Arc Digital.

We are critics of Nikole Hannah-Jones. Her tenure denial is a travesty

Keith E. Whittington and Sean Wilentz, Chronicle of Higher Education, 24 May 2021

Most recently, the University of North Carolina Board of Trustees has apparently balked at the recommendation that Nikole Hannah-Jones be appointed with tenure to the Knight chair in race and investigative journalism. She will instead hold the chair for a five-year term.

The board has said little about the decision, but it would appear that political considerations drove them to take the extraordinary step of intervening in the university’s hiring decision for an individual faculty position. Such an action would be a gross violation of the principles that ought to guide the governance of modern American universities and a clear threat to academic freedom. Unfortunately, the temptation for political tampering with the operation of universities is growing not just in North Carolina but across the country.

The final step in the process of making an appointment to the faculty of public and private universities alike routinely involves the approval of a board of trustees. At public universities, boards are often politically appointed, as is true at the University of North Carolina. At private universities, they are generally dominated by generous alumni and donors. There was a time when such boards regularly exercised real power over the hiring and firing of members of the faculty, and the tenure of faculty members was dependent on staying in the good graces of the political factions and personal interests of powerful board members. The long fight for academic freedom necessitated insulating the faculty from the board. Boards retain the power to approve of faculty-hiring decisions in the same way that the queen of England retains the power to approve legislation passed by Parliament — as a ceremonial formality only…

The sharp polarization of our politics threatens the foundations of teaching and scholarship, especially in areas of civics and American history. Efforts to create grounds where students can learn essential lessons about the structure of our constitutional government and the nation’s past run afoul of clashing, strident political agendas. It is against that deplorable background that the trustees of the University of North Carolina have blocked this appointment.

We have been critical of Hannah-Jones’s best-known work in connection with “The 1619 Project,” and we remain critical. We also respect the judgment and the authority of the University of North Carolina’s faculty and administration. For the Board of Trustees to interfere unilaterally on blatantly political grounds is an attack on the integrity of the very institution it oversees. The perception and reality of political intervention in matters of faculty hiring will do lasting damage to the reputation of higher education in North Carolina — and will embolden boards across the country similarly to interfere with academic operations of the universities that they oversee.

Read the full article in the Chronicle of Higher Education.

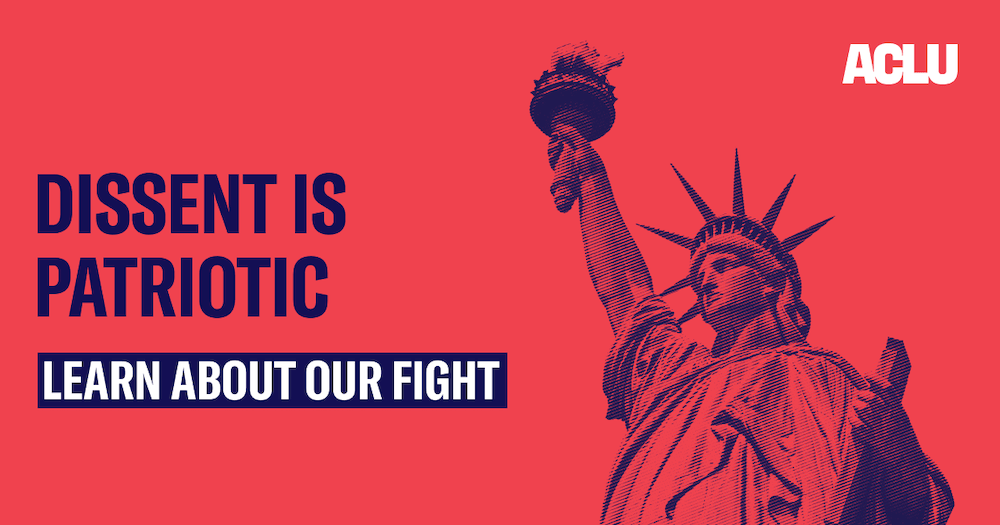

Once a bastion of free speech, the ACLU faces an identity crisis

Michael Powell, New York Times, 6 June 2021

The A.C.L.U., America’s high temple of free speech and civil liberties, has emerged as a muscular and richly funded progressive powerhouse in recent years, taking on the Trump administration in more than 400 lawsuits. But the organization finds itself riven with internal tensions over whether it has stepped away from a founding principle — unwavering devotion to the First Amendment.

Its national and state staff members debate, often hotly, whether defense of speech conflicts with advocacy for a growing number of progressive causes, including voting rights, reparations, transgender rights and defunding the police.

Those debates mirror those of the larger culture, where a belief in the centrality of free speech to American democracy contends with ever more forceful progressive arguments that hate speech is a form of psychological and even physical violence. These conflicts are unsettling to many of the crusading lawyers who helped build the A.C.L.U.

The organization, said its former director Ira Glasser, risks surrendering its original and unique mission in pursuit of progressive glory.

“There are a lot of organizations fighting eloquently for racial justice and immigrant rights,” Mr. Glasser said. “But there’s only one A.C.L.U. that is a content-neutral defender of free speech. I fear we’re in danger of losing that.”

Founded a century ago, the A.C.L.U. took root in the defense of conscientious objectors to World War I and Americans accused of Communist sympathies after the Russian Revolution. Its lawyers made their bones by defending the free speech rights of labor organizers and civil rights activists, the Nation of Islam and the Ku Klux Klan. Their willingness to advocate for speech no matter how offensive was central to their shared identity.

One hears markedly less from the A.C.L.U. about free speech nowadays. Its annual reports from 2016 to 2019 highlight its role as a leader in the resistance against President Donald J. Trump. But the words “First Amendment” or “free speech” cannot be found. Nor do those reports mention colleges and universities, where the most volatile speech battles often play out.

Read the full article in the New York Times.

“The culture-war stuff just rots the brain”

Musa al-Gharbi, Chronicle of Higher Education, 30 June 2021

Your work sits at an interesting angle in relation to debates about postcoloniality and decolonization.

A lot of the postcolonial literature takes the prevailing Orientalist narrative and just reverses the signs on everything. Things that before were described as constructive and beneficent on the part of the West are now described as evil and exploitative, but the picture of the world remains roughly the same in both cases. Virtually all agency is still with whites, with powerful people. If you ask certain scholars, “How did ISIS come about?” they’ll talk about Sykes-Picot carving up the Middle East into arbitrary states, they’ll talk about the invasion and occupation of Iraq, U.S. meddling in Syria — and it’s not that those narratives are wrong per se. But they’re definitely incomplete. They’re focused exclusively on elites in the West, in Europe, in America. There’s no point in the story where some ordinary Iraqi or Syrian picks up a gun, and aims it at somebody else, and willfully pulls the trigger. When non-Westerners appear in the story at all, they’re like motes of dust being blown around by the “real” actors. That’s profoundly condescending, and it’s not really a picture of the world that’s any different than the imperial one in terms of who has agency and power…

And this goes back to your critique of the moral melodrama that a lot of scholars seem increasingly attracted to. But I wonder if part of the problem is that when you do get people pushing back on some of the more hypercritical narratives, the people doing the pushback are often reactionaries — though they wouldn’t describe themselves that way, obviously. You end up with a binary worldview where the people who claim to be introducing nuance are frank apologists for empire.

To go back to the example of ISIS: Part of the reason that people tell a story of ISIS that completely removes any agency from the people of the Middle East or Muslims is because there is a constellation of actors that is very keen to describe ISIS as resulting from pathologies unique to Islam, and to portray Islam as a danger that will destroy the West.

The culture-war stuff just rots the brain. You end up with these extreme pictures of the world that circulate almost exclusively among people already predisposed to believe them.

Read the full article in the Chronicle of Higher Education.

How the N-word became unsayable

John McWhorter, New York Times, 30 April 2021

In 1934, Allen Walker Read, an etymologist and lexicographer, laid out the history of the word that, then, had “the deepest stigma of any in the language.” In the entire article, in line with the strength of the taboo he was referring to, he never actually wrote the word itself. The obscenity to which he referred, “fuck,” though not used in polite company (or, typically, in this newspaper), is no longer verboten. These days, there are two other words that an American writer would treat as Mr. Read did. One is “cunt,” and the other is “nigger.” The latter, though, has become more than a slur. It has become taboo.

Just writing the word here, I sense myself as pushing the envelope, even though I am Black — and feel a need to state that for the sake of clarity and concision, I will be writing the word freely, rather than “the N-word.” I will not use the word gratuitously, but that will nevertheless leave a great many times I do spell it out, love it though I shall not.

“Nigger” began as a neutral descriptor, although it was quickly freighted with the casual contempt that Europeans had for African and, later, African-descended people. Its evolution from slur to unspeakable obscenity was part of a gradual prohibition on avowed racism and the slurring of groups. It is also part of a larger cultural shift: Time was that it was body parts and what they do that Americans were taught not to mention by name — do you actually do much resting in a restroom?

That kind of concern has been transferred from the sexual and scatological to the sociological, and changes in the use of the word “nigger” tell part of that story. What a society considers profane reveals what it believes to be sacrosanct: The emerging taboo on slurs reveals the value our culture places — if not consistently — on respect for subgroups of people. (I should also note that I am concerned here with “nigger” as a slur rather than its adoption, as “nigga,” as a term of affection by Black people, like “buddy.”)

For all of its potency, in terms of etymology, “nigger” is actually on the dull side, like “damn” and “hell.” It just goes back to Latin’s word for “black,” “niger,” which not surprisingly could refer to Africans, although Latin actually preferred other words like “aethiops” — a singular, not plural, word — which was borrowed from Greek, in which it meant (surprise again) “burn face.”

English got the word more directly from Spaniards’ rendition of “niger,” “negro,” which they applied to Africans amid their “explorations.” “Nigger” seems more like Latin’s “niger” than Spanish’s “negro,” but that’s an accident; few English sailors and tradesmen were spending much time reading their Cicero. “Nigger” is how an Englishman less concerned than we often are today with making a stab at foreign words would say “negro.”

Read the full article in the New York Times.

Europe’s latest export: A bad disinformation strategy

Peter Cunliffe-Jones, Politico, 7 June 2021

The poster child of the EU’s tech heft is its data privacy law, the General Data Protection Regulation. “Since the adoption of the GDPR, we have seen the beginning of a race to the top for the adoption or upgrade of data protection laws around the world,” according to Estelle Massé, a policy analyst with digital rights campaigners Access Now.

The Protection of Personal Information Act (POPIA), which came into effect in South Africa in 2020, is often compared to GDPR and is expected to be brought more closely into line with it over time. Kenya’s data privacy law is also “largely modelled on” the EU regulations (though not enough for its critics), analysts say.

That’s great in the case of the GDPR, where the model is broadly a good one. It would be terrible if the same thing were to happen with the DSA and the Code of Practice.

Our research into the way misinformation causes harm suggests the two will fail to achieve one of their main goals: reducing the negative effects of misinformation.

Complex legislation though it is, the DSA’s approach is a simple one. It boils down to ordering tech companies to promptly remove “illegal content” once it has been identified or signaled to them, or face major fines if they don’t.

The accompanying Code, first introduced in 2018 and now being updated, applies the same principle to misinformation: requiring companies to either remove or demote content deemed false and accounts that promote it.

The problems with the DSA, the Code of Practice, and similar models for tackling misinformation and disinformation are threefold:

First is the responsibility or license the laws give to privately-owned tech companies to decide, behind closed doors, what constitutes harmful content. Few object to takedowns of child pornography, terrorism-related material or hate speech. But all of those are quite well-defined. What counts as misinformation or disinformation is not, and working out what is harmful is harder still.

Second, even if the tech firms do identify harmful misinformation in a way the public would agree with, simply forcing them to take it down after the fact does not reverse the harm caused, as other, more proactive approaches, such as teaching misinformation literacy and fact-checking, can.

Third, while the DSA and the Code are presented as a solution to harmful misinformation, they offer no answers to the wider problems of the information disorder (a technical term for the witting or unwitting sharing of falsehoods). As Christine Czerniak, technical lead of the World Health Organisation’s team fighting Covid-19 information disorder, said, the “infodemic” is “a lot more than misinformation or disinformation.” She added she was speaking in general terms, not specifically in relation to the DSA or other proposals.

Read the full article in Politico.

Torn in the USA

James Bloodworth, The Critic, June 2021

Told through the lens of Amazon, Fulfillment is a tale of the growing divide between what the author calls “winner-takes-all cities” and declining towns and suburbs. MacGillis spends time in prosperous Seattle, the location of Amazon’s corporate HQ. From there he moves to rural and small-town America, which is experiencing a decline “unlike anything it had experienced since the offspring of farm families had started fleeing to cities en masse a century earlier”.

Dayton, Ohio was once an industrial powerhouse. Today its residents scratch out a living. We meet Todd and Sara, a young couple brought up amid a sense of genuine material progress. Yet during the George W. Bush era, one in three local manufacturing jobs vanished. Todd works at a local cardboard box factory — one of the few firms to survive Amazon’s retail dominance — and the couple live in a homeless shelter.

MacGillis notes that when Donald Trump won the presidency in 2016, he did so by “racking up big numbers” in depressed areas that had not voted Republican for decades — the sort of places Amazon has chosen to build its fulfilment centres. “He’s gonna raise the economy back to the way it was when my parents were working,” says Todd, who voted Obama twice before voting for Trump in 2016.

Fulfillment is one of the better books looking at “left behind” regions of America, not least because of the sheer breadth of its investigative reporting. It gets under the skin of America’s forgotten communities; it also shows compassion for its subjects at a time when liberal politics is preoccupied with ideological purity and rooting-out suspected “deplorables”.

Indeed, locating the material roots of rage and discontent is unfashionable among affluent Democratic party elites who reside in coastal cities. They were appalled by Trump, but also by the people who voted for him. The 2016 election result produced “an instant revulsion toward the parts of the country that had made him president,” MacGillis writes. “It was time, they said, to pull the plug on these places, economically and politically.”

That may yet be unnecessary: opioid and suicide epidemics are ripping the heart out of working-class America. “‘Deaths of despair’ — the astonishing rise in mortality among white people without college degrees caused by suicide, alcoholism, and drug addiction… was concentrated heavily in Ohio and neighbouring Pennsylvania, Kentucky, and Indiana,” writes MacGillis. “In just five years, from 2012 to 2017, death rates for white people between twenty-five and forty-four years old jumped by a fifth.”

Read the full article in The Critic.

When Pop History Bombs: A Response to Malcolm Gladwell’s Love Letter to American Air Power

David Fedman & Cary Karacas, LA Review of Books, 12 June 2021

At the heart of Gladwell’s account sits a tight-knit group of officers who taught in the 1930s at the Air Corps Tactical School — what he calls “the Bomber Mafia,” but who referred to themselves simply as “the School.” What this group lacked in numbers it made up for in faith in their dream for the future of American air power: high-altitude daylight precision bombing. Steadfast in their conviction that rapid technological advancements would enable bombers to “drop a bomb in a pickle barrel at 30,000 feet,” these men, according to Gladwell, called for a more humane approach to air power. The School, we’re told, was composed of “idealistic strategists” who wanted to make war less lethal, who wanted to make “a moral argument about how to wage war.”

In sharp contrast to area bombing proponents such as Arthur “Bomber” Harris, air marshal of Britain’s Royal Air Force, these visionaries were unwilling to cause mass suffering. They wouldn’t aim for whole cities but for strategic industrial or military sites that would cripple the country and force it to sue for peace. “The genius of the Bomber Mafia,” writes Gladwell, was to say, “We don’t have to slaughter the innocent, burn them beyond recognition, in pursuit of our military goals. We can do better.”

It’s a nice premise. And it helps to explain the supposed aberration on Guam. LeMay, following in the footsteps of that “psychopath” Harris, as Gladwell calls him, would lay entire Japanese cities to waste with incendiary bombs.

There’s just one catch to Gladwell’s portrayal of the School and its moral creed: it’s embellished at every turn. The School didn’t promote precision bombing out of some lofty ethical argument about humane warfare. They did so because they were convinced that, of all the strategies available to them, pinpointing key industries was the most effective means to dismantle the enemy state’s war economy. As they saw it, bombs did the most damage when they fell on vital lifelines that supported the “National Economic Structure.” With memories of trench warfare still fresh in their minds, many strategists saw air power as a new means to avoid protracted wars of attrition. The ultimate goal of precision bombing was to achieve suffering on two fronts at once: to cripple industrial production and, by destroying critical infrastructure, to unleash hardship on civilians who would in turn force their leaders to capitulate. The idea, as Michael Sherry has put it, was to attack “the enemy population indirectly, by disrupting and starving it rather than by blasting and burning.”

What motivated Hansell and other air power proponents, then, was less a moral crusade than a strategic outlook. In their public statements and lectures, the School may have played up the humanitarian aspects of their position. In briefings, studies, and planning documents, however, they were fixated on the practical exigencies of winning future wars. When the countries responsible for civilian bombings in Ethiopia, Spain, and China weren’t met with the international opprobrium many in the School were expecting, cracks in these principles only grew.

Read the full article in the LA Review of Books.

What Renaissance?

Sam Dresser, Aeon, 31 May 2021

Renaissance philosophy is often presented as a conflict between humanism and scholasticism, or sometimes it’s simply described as the philosophy of humanism. This is a deeply problematic characterisation, partly based on the assumption of a conflict between two philosophical traditions – a conflict that never actually existed, and was in fact constructed by the introduction of two highly controversial terms: ‘humanism’ and ‘scholasticism’. A telling example of how problematic these terms are as a characterisation of philosophy in the 16th century can be found in Michel de Montaigne (1533-92). He was critical of a lot of philosophy that came before him, but he didn’t contrast what he rejected with some kind of humanism, and his sceptical essay An Apology for Raymond Sebond (1580) wasn’t directed at scholastic philosophy. In fact, both these terms were invented much later as a means to write about or introduce Renaissance philosophy. Persisting with this simplistic dichotomy only perverts any attempt at writing the history of 14th- to 16th-century philosophy.

One of the first attempts at writing a history of philosophy in a modern way was Johann Jacob Brucker’s five-volume Historia critica philosophiae (1742-44) published in Leipzig. He didn’t use the terms ‘Renaissance’ or ‘humanism’, but the term ‘scholastic’ was important for him. The narrative we still live with in philosophy, for the most part, was already laid down by him. It’s the familiar narrative that emphasises the ancient beginning of philosophy, followed by a collapse in the Middle Ages, and an eventual recovery of ancient wisdom in what much later became called ‘Renaissance philosophy’.

The US philosopher Brian Copenhaver, one of the foremost scholars of our time, develops this idea in his contribution to The Routledge Companion to Sixteenth-Century Philosophy (2017). In ‘Philosophy as Descartes Found It: Humanists v Scholastics?’, he explains how Brucker’s ideal was developed from Cicero and called by him ‘humanitatis litterae’ or ‘humanitatis studia’. For Brucker, these terms signified the works of the classical authors and the study of them. The Latin he used for the teaching of the classical authors was ‘humanior disciplina’. Brucker sees himself as completing a project he claims was started by Petrarch in the mid-14th century: a cultural renewal that would save philosophy from the darkness of scholasticism.

As we’ve come to know more about the period referred to by Brucker as the Middle Ages, it has become clear that it’s simply wrong to call it a decline. It is instead extraordinarily rich philosophically, and should be celebrated as hugely innovative. It’s by no means a ‘dark age’. Quite the contrary. So the view that emerges in Brucker stems from a lack of knowledge and understanding of the philosophy of that time.

Read the full article in Aeon.

International thief thief

Lily Saint, Africa Is A Country, 27 May 2021

In 1909, Sir Ralph Denham Rayment Moor, British Consul General of the British Southern Nigerian Protectorate, took his life by ingesting cyanide. Eleven years earlier, following Britain’s “punitive” attack on Benin City’s Royal Court, Moor helped transfer loot taken from Benin City into Queen Victoria’s private collection and to the British Foreign Office. Pilfered materials taken by Moor and many others include the now famous brass reliefs depicting the history of the Benin Kingdom—known collectively as the Benin Bronzes. This is in addition to commemorative brass heads and tableaux; carved ivory tusks; decorative and bodily ornaments; healing, divining, and ceremonial objects; and helmets, altars, spoons, mirrors, and much else. Moor also kept things for himself, including the Queen Mother ivory hip-mask. After Moor’s suicide, British ethnologist Charles Seligman, famous for promoting the racist “Hamitic hypothesis” undergirding much early eugenicist thought, purchased this same mask, one of six known examples. With his wife Brenda Seligman, an anthropologist in her own right, Charles amassed a giant collection of “ethnographic objects.” In 1958, Brenda sold the Queen Mother mask for £20,000 to Nelson Rockefeller, who featured it in his now-defunct Museum of Primitive Art before gifting it in 1972 to the Metropolitan Museum of Art. That’s where I visited it this week in New York City—and as Dan Hicks pointed out in a recent tweet, if you are reading this in the global North, there’s a good chance that an item taken from the Benin Court is in the collection of a regional, university, or national museum near you, too.

For Hicks, author of The Brutish Museums: The Benin Bronzes, Colonial Violence and Cultural Restitution, this provenance history of the Queen Mother mask—and every single other history of the acquisition and transfer of objects from Benin City to museums across the “developed” world—is a history that begins and ends in violence. Indeed, the category of ethnographic museums emerged in the nineteenth century precisely out of the demonic alliance between anthropological inquiry and colonial pillage. In 1919, a German ethnologist observed that “the spoils of war [Kriegsbeute] made during the conquest of Benin … were the biggest surprise that the field of ethnology had ever received.” These so-called spoils buttressed these museums’ raison d’être: to collect and display non-Western cultures as evidence of “European victory over ‘primitive’, archaeological African cultures.” The formation of ethnographic museums, including the Pitt Rivers Museum at Oxford, where Hicks currently works, “have compounded killings, cultural destructions and thefts with the propaganda of race science [and] with the normalisation of the display of human cultures in material form” (my italics). For Hicks, the continued display of these stolen objects in poorly lit basement rooms, sophisticated modern vitrines, and private collections is an “enduring brutality … refreshed every day that an anthropology museum … opens its doors.”

After this book, there can be no more false justifications for holding Benin Bronzes in museums outside of Africa, nor further claims that changing times mean new approaches are sufficient for recontextualizing art objects. This book inaugurates its own paradigm shift in museum practices, collection, and ethics. While there are preceding arguments for returning Western museums’ holdings in African art (most notably Felwine Sarr and Bénédicte Savoy’s Restitution of African Cultural Heritage, or Rapport sur la restitution du patrimoine culturel africain), the comprehensiveness of Hicks’s argument is extraordinary. In chapter after chapter, shifting agilely between historical perspectives and conceptual frameworks, he revisits the siege on Benin and its afterlives in museums across the globe. From Hicks’s detailed appendix, we learn that these stolen objects can be found in approximately 161 different museums and galleries worldwide, from the British Museum to the Louvre in Abu Dhabi, with only 11 on the African continent.